Rachel Schutt (the author of the Taxonomy of Confusion) has a blog for the course she’s teaching at Columbia, “Introduction to Data Science.” It sounds like a great course. I wish I could take it!

Her latest post is “On Inspiring Students and Being Human”:

Of course one hopes as a teacher that one will inspire students . . . But what I actually mean by “inspiring students” is that you are inspiring me; you are students who inspire: “inspiring students”. This is one of the happy unintended consequences of this course so far for me.

She then gives examples of some of the students in her class and some of their interesting ideas:

Phillip is a PhD student in the sociology department . . . He’s in the process of developing his thesis topic around some of the themes we’ve been discussing in this class, such as the emerging data science community.

Arvi works at the College Board and is a part time student . . . He analyzes user-level data of students who have signed up for (and taken) the SATs and has lots of interesting data around where those students hope to go to college; and longitudinal data sets that allow him and his colleagues to examine trends . . .

Adam, Christina and Eurry (respectively, (1) sociology PhD student, (2) data scientist at Nielsen, and (3) “aspiring data visualizer” (better term?) from the QMSS program) have taken on the challenge of polling the students and then developing an algorithm to automatically find optimal data science teams and a corresponding visualization.

Matt is a history of science professor who wrote the Curse of Dimensionality post a week ago, and is starting to think about (or revisit) how exploratory text classification could be used in his research.

Jed works as a data analyst at Case Commons, a nonprofit that builds web apps and databases for state-wide foster care agencies. . . . he read this paper on using Naive Bayes to classify suicide notes, and now has some early ideas of ways he might apply this approach in his own work.

Maryanne is the Executive Director for the Center for Innovation Through Data Intelligence in Mayor Bloomberg’s office. Her office deals with data about the juvenile justice system, homelessness and poverty and she too is thinking about how analyzing data sets could be used to prioritize social worker interventions.

Then let’s not forget the Biomedical Informatics (or variation of that) students/post-doc, Hojjat, Albert and Heather; or Kaushik, the student from operations research interested in journalism; or Yegor, the business school student who has an interest in urban planning and architecture . . .

The comments on the blog from various students are also starting to become interesting. Also let me add Jared’s (our lab instructor) study of his own text messages, after he broke up with his girlfriend, which he just told me about tonight.

This all reminds me of a comment Seth made once, many years ago, that the usual goal (even if not explicitly stated) of a class is for the students to become replicas of the instructor, whereas he (Seth) liked to teach in such a way that each student could bring in his or her special knowledge, interests, and abilities. I don’t know how good a teacher Seth actually is (I have lots of innovative teaching ideas too, but I’m not such a great teacher; in fact, in large part it’s my crappiness as a teacher that inspires me to come up with new teaching ideas: the entire book Teaching Statistics: A Bag of Tricks arose out of my difficulties in getting students actively engaged in class), but I think his idea is interesting. In my own teaching, sadly, I pretty much have the goal of turning the students into mini-me’s. I mean, sure, I don’t want them all to become me, I want them to develop their own talents, but implicitly I’m acting as if the best way to do so is to first become as much like me as possible.

I think Rachel’s shout-outs above are great, not just because it’s a nice thing to do, but because the act of writing these details about the students helps to bring these projects to life, as well as to inspire others.

Rachel also has some thoughts about statistics education:

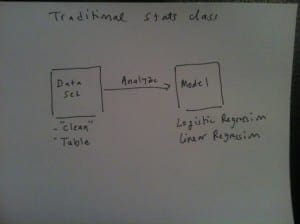

Traditional Statistics Pedagogy

First, allow me to describe the way traditional statistics classes/textbooks present data analysis. A standard homework problem would be: one is presented with a clean data set, and told to run a regression with y=weight and x=height, for example. I look unfavorably upon this because it takes the creativity and life out of everything, and doesn’t resemble in the least what it’s like to actually be a researcher or statistician in the real world. As mentioned previously, in the “real world” (no offense, classrooms aren’t the real world), no one is going to hand you a nice clean data set (and if they did, I’d be skeptical! Also where’d it come from?), and no one is going to tell you what method to use. (Why are we even running this regression in the first place? What questions are we even trying to answer?). The homework problem might then have some questions about interpreting or understanding the model: “interpret the coefficients” and let’s face it, most people think these are blow-off questions, don’t take them all that seriously, and there are no consequences if they mis-interpret on a homework problem, so they may think about it for . . . a minute, and then write some plausible interpretation.

Oof! Those are the kind of homework assignments that I write. Rachel continues with a visual:

She continues with her preferred model:

followed by:

This all makes sense to me, and it looks a lot like a bunch of notes I took back in 1997 or so, after teaching applied statistics to the Columbia graduate students. I gave them open-ended homework assignments, then in class spent some time giving them the background they needed to know to attack the problems, and spent a lot of time going over the homeworks they had just turned in. The students liked the class, and I had thoughts of trying to write up a general approach to data analysis, trying to formalize what I did and what I taught. After a few years of this, though, I became dissatisfied because I felt that, although the class was a good learning experience for the students, they didn’t actually end up with many useful new skills. And, over the years, I’ve moved to a more structured, textbook-based style of teaching. This semester, in fact, I’m pretty much just going through Bayesian Data Analysis section by section. We do have some open-ended homework assignments on applied statistics—I think this is important—but maybe Rachel is right that the students aren’t getting a clear message on where to go with these.

Can you put up a link to the “Taxonomy of Confusion”? I can’t find it with simple web searches.

http://statmodeling.stat.columbia.edu/2008/09/taxonomy_of_con/

Andrew: “good learning experience for the students, they didn’t actually end up with many useful new skills” that does seem like a contradiction – by useful you mean something specific?

Looks really neat – sort of an extended and enhanced version of the grad Biostats lab I got as a student.

Would seem like it’s going to be a lot of work to grade. (I was given a B- in the lab course when I took it, because I did the projects on my own and presented/defended them myself in the course. The other students apparently did their projects with input/assistance from faculty who attested to the mark they deserved. That is one way to spread the work load.)

Noticed this on her blog – “used by statisticians (AKA “quantitative analysts” or maybe now, “data scientists”) at Google”

Maybe it is time to change the name of our discipline…

My guess would be that “useful skill” would mean knowing a particular technique well enough. Like knowing OLS regression and knowing how to do it in R and how to set up data structures for R’s `lm` command and interpret all of its output.

What I would mean by that phrase, if I were a professor, might be that students are learning appropriate things along the way to mastering a topic, but they don’t have the specific pre-req’s for the next course they are going to take.

As a negative for changing to “data science,” I relay this thought:

The President of a nearby school is well-known in computer science. In a talk a while ago, he wryly commented that disciplines that included “science” in their names … probably weren’t. :-)

That’s a pretty good heuristic. I’ve heard Brian Harvey (Berkeley EECS) argue that computer science is both not about computers and also not a science. Some might argue about whether computer science is about computers or not, but it’s definitely not a science.

I appreciate the joke, but I do think that computer science is more than the joke makes it out to be. I need look no farther than the Matlab or Fortran “code” that I’ve seen from those without some kind of programming focus. Sorry, just a soapbox issue from a computer science guy who has dived deeply into the statistical pool.

No, the other way around: it is certainly true that Computer Science is not about computers (in the same way that Astronomy is not about telescopes), but it is definitely science. Unfortunately, much/most of what is _taught_ in Computer Science departments, at least to undergraduates, is Software Engineering (which I agree is not science). This is because engineering skills are more sought after in the job market than science skills.

Yes! Think about Religious Science:

http://en.wikipedia.org/wiki/Religious_Science

I don’t think it’s sad that you want to turn your students into “mini-me’s”. I’ve thought a lot about these issues and it seems to me that the ultimate teaching situation is an apprenticeship. That’s the way skills were historically learned, it’s what we do at the beginning of life (we’re apprenticed to our parents, essentially), and it’s what happens at the “highest” levels of education: a PhD, where you’re essentially apprenticed to your advisor. It’s in the middle of our educations where we have to abandon the apprenticeship idea and go with something more modular for expediency’s sake.

If we could creatively overcome the limitations of apprenticeship in the middle, I really believe that’d be the way to go. And to a great extent, apprenticeship is about making mini-me’s. (Though with something of an open mind on the master craftsman’s part, to allow growth beyond his or her own biases and limitations.)

I think this tension between the idealized (mentor/apprenticeship) education and the reality of modern college campuses is what drives professors from an early desire to really teach a subject to a later compromise that they need to teach certain skills (i.e., the things that the next lego-block class will require). If statistics, for instance, were taught as scientific investigation by a couple of master statisticians/scientists who shepherded a cohort of students through their entire degree, the teaching would be much more organic and would ultimately better prepare students, I think.

In my experience, few professors give homework assignments that include a good mix of exercises (where it’s clear at a glance what to do and the point is just to gain some proficiency) and problems (where it’s not obvious what to do and the point is to acquire the kinds of thinking strategies you need to deal with the complexities of real-world problems). It’s important to give students experience in solving problems (and not just on exams!), but exercises have their place too: it’s very hard to go straight from reading/lecture to solving problems without doing some exercises first.