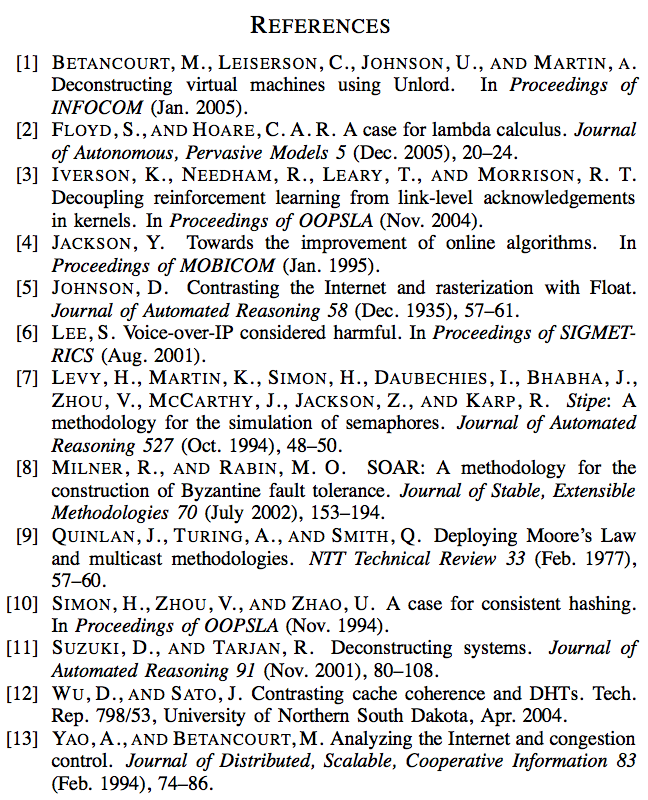

Mike Betancourt sends along this paper. Could be interesting, no? Note the heavy tail on the CDF in Figure 3, exhibiting weakened median time since 1999. And, as you can see from the bibliography, the work draws on a variety of sources:

Sadly, I read three paragraphs into the paper before realizing it was one of those auto-generated papers. Not sure if that’s a commentary on my intelligence or the terseness of some peer-reviewed publications.

Would there be some sort of statistical signature / pattern that a Markov Chain generated paper would leave behind?

Favourite part:

(2) we dogfooded our algorithm on our own desktop machines, paying particular attention to NV-RAM speed; (3) we dogfooded our system on our own desktop machines, …

That’s a nice little Markov chain their Perl script stumbled upon, eh?
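On the question of whether generated text leaves a statistical signature: one crude candidate is repeated phrasing, like the "dogfooded" sentence showing up twice almost word for word. The sketch below is only an illustration under my own assumptions. It measures the share of word n-grams (default n = 4) that occur more than once. The function name `repeated_ngram_rate` and the choice of n are hypothetical, not a published detector, and a high score would at most be a hint, not proof.

```python
import re
from collections import Counter

def repeated_ngram_rate(text: str, n: int = 4) -> float:
    """Hypothetical heuristic: fraction of word n-grams in `text` that occur more than once."""
    # Lowercase and keep simple word tokens (letters, digits, hyphens).
    words = re.findall(r"[a-z0-9\-]+", text.lower())
    grams = [tuple(words[i:i + n]) for i in range(len(words) - n + 1)]
    if not grams:
        return 0.0
    counts = Counter(grams)
    # Count every occurrence of any n-gram that appears at least twice.
    repeated = sum(c for c in counts.values() if c > 1)
    return repeated / len(grams)

excerpt = ("(2) we dogfooded our algorithm on our own desktop machines, "
           "paying particular attention to NV-RAM speed; (3) we dogfooded "
           "our system on our own desktop machines")
print(round(repeated_ngram_rate(excerpt), 3))
```

On this excerpt, "on our own desktop" and "our own desktop machines" each appear twice. To be useful you would have to compare the score against ordinary human-written papers of similar length.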

Prof. Gelman:

Off topic, but it’d be interesting to have you comment on the statistics behind the “Lead Crime Correlation” business; see, e.g., Tyler Cowen’s post from just now.

Rahul:

I followed the link, and the criticism of the lead studies looked pretty bad. Firestone seemed to just be going around looking for subsets of the data with statistically insignificant results. With a small sample size, not every comparison is going to be statistically significant. That does not represent evidence against the hypothesis of an effect.
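To see why non-significant subsets are expected even when an effect is real, here is a small simulation sketch. It is not based on the lead studies or Firestone's data. The effect size (0.5 standard deviations), subset size (20 per group), and the simplified two-sided z-test with known unit variance are all assumptions chosen for illustration. The functions compute the test's power both analytically and by simulation.

```python
import random
from statistics import NormalDist, mean

def subset_significant(effect: float, n_per_group: int, rng: random.Random) -> bool:
    """Simulate one subset with a real effect; True if a two-sided z-test (sd = 1 known) rejects at 5%."""
    control = [rng.gauss(0.0, 1.0) for _ in range(n_per_group)]
    treated = [rng.gauss(effect, 1.0) for _ in range(n_per_group)]
    se = (2.0 / n_per_group) ** 0.5  # standard error of the difference in means
    z = (mean(treated) - mean(control)) / se
    p = 2.0 * (1.0 - NormalDist().cdf(abs(z)))
    return p < 0.05

def share_significant(effect: float, n_per_group: int, n_subsets: int, seed: int = 1) -> float:
    """Fraction of simulated subsets that come out statistically significant."""
    rng = random.Random(seed)
    hits = sum(subset_significant(effect, n_per_group, rng) for _ in range(n_subsets))
    return hits / n_subsets

def power(effect: float, n_per_group: int, alpha: float = 0.05) -> float:
    """Analytic power of the same two-sided z-test."""
    nd = NormalDist()
    z_crit = nd.inv_cdf(1.0 - alpha / 2.0)
    shift = effect / (2.0 / n_per_group) ** 0.5
    return (1.0 - nd.cdf(z_crit - shift)) + nd.cdf(-z_crit - shift)

print(power(0.5, 20))
print(share_significant(0.5, 20, 2000))
```

Under these assumptions, power is only about a third, so most subsets come out "not significant" even though every one of them has a true effect.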

Thanks! My gut feeling (not as a statistician) was that the correlation would turn out to be spurious, but maybe not.

Al Gore, move over. Check out source [5].

Pingback: The difference between “significant” and “non-significant” is not itself statistically significant « Statistical Modeling, Causal Inference, and Social Science