I was going to post yet one more discussion of our discussion of the discussion of the discussion of some paper that I don’t really care about, but then I was like, aaaahh, what’s the point?

So instead here’s a pointer to the first paper I ever published. It’s the very last one on this list.

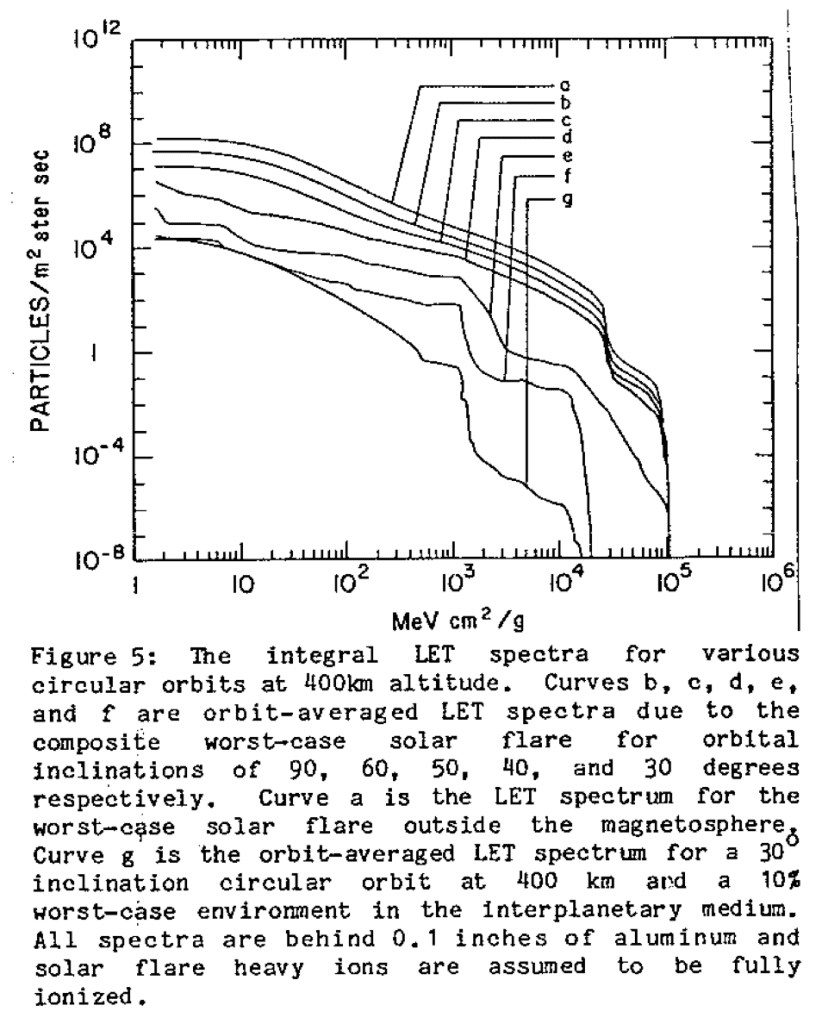

The backstory is here, and here’s a poorly labeled graph for you to laugh at:

Enjoy.

Back in the day how did you make this graph? What software? Or by hand?

Ah yes, the technological archeology of graphs.

I’m pretty sure I did it in Fortran, since I wrote the program to do all the calculations in Fortran.

I used to program in Fortran doing neutrino physics. The plot reminds me of plots created with Physics Analysis Workstation, although I’m not sure it was around when Andrew would have made this…

To write this in raw Fortran must have been mighty nasty.

A common set-up (as I recall as a one-time Fortran programmer) was to call up subroutines for each subtask: plotting each curve, putting ticks on the axes, putting labels (titles) on the axes, etc. The code need not have been much more complicated in total than is required in many a current statistical or mathematical environment.

Also, the detailed syntax for this kind of graph is just the kind of thing needed repeatedly, so details tended to stick in the mind or at least be transferred from program to program.

In some cases, there have been steps backwards, e.g. default treatment of logarithmic scales is often awkward in some current programs.

What was the output device? A Calcomp plotter?

Following the links, you mention your trip to the ’88 Bayes/Maxent conference. About 6 months ago I picked up that conference’s proceedings for a few dollars and it had a picture of you (Andrew) sitting a few seats over from Jaynes. You both were flashing the same gang signs. Ok, I made that last part up.

I posted that picture here:

http://www.entsophy.net/blog/?p=249

both Gelman and Jaynes are labeled.

Andrew,

So now with the whole week-long blogging theme, how about an end of week “what we’ve learned” wrap-up? Maybe you could identify two or three good points that came up in your writing and in our discussion that sort of frame where we started, what we learned, and where there remains disagreement. I think that would be a cool way to wrap everything up and make each week stand sort of on its own (while also allowing each post to stand on its own). M-F various posts on a topic, Saturday recap, Sunday “on deck” for next week.

Of course, it’s easy for me to suggest more work for you, but you could also just make the Saturday post an open comment forum on the week’s topic. But with that in mind, I’ll give a (probably less funny and too long) version of what you might be able to do, as one way you could do it (or as fodder for you to chew on while coming up with a better way, if you like the idea):

This week:

It was concluded that a trial of a 1-week blogging time frame was worth a go. The topic: The post-peer-review peer-review process and the relationship between quality of research, the academic demand for publications, and the resulting perverse incentives for researchers.

Andrew started out discussing the appropriate time-frame for posting and blog-versation. A one-week arc was considered as a first-pass guess for an optimal time horizon. The topic was introduced as a question we had been grappling with for some time: what are some good ways we can improve the scientific discourse through various sorts of post-publication peer-review. Within that framework, we wondered how peer review and academic publishing create an environment in which a) poor research winds up in top journals; b) researchers are incentivized to produce continually more research at the cost of both quality and real-world relevance; c) plagiarism is both incentivized and, despite being formally condemned, informally tolerated; and d) standards for publishable work become a checklist for researchers to follow the “form” of a rigorous statistical investigation, without understanding any of the content of their statistical methods and assumptions. All from the perspective of our role in that game – as consumers of research, as commenters, as educators, and, mostly, as players in the game.

On Monday it was agreed that a 1-week time horizon was a good start. There was some concern that some topics weren’t worth a week, and some people liked randomly coming across interesting things. There was also discussion of “optimal” publishing quantities over a lifetime (20 papers was suggested, deemed too few).

On Tuesday all hell broke loose as we discussed our own position in the “peer review” process – that of jerks who comment on people’s articles on the internet. We even imported some extra hell to break loose from another blog. In the end, civility (mostly) won, and we ended up showing that academics are human beings with complex personalities who are mostly just trying to do good. We also decided that it was OK to comment on papers if you had actually read them.

On Wednesday we went from well-intentioned researchers who published imperfect work (most of us) to full on losers who plagiarize. We discussed the perverse incentives to publish, how that leads to plagiarism, exactly what constitutes “egregious” plagiarism as compared to sloppy research, and, this being a blog on the internet, there were some whiffs of racism. We discussed a bit what constitutes plagiarism and where it fits in the hierarchy of academic and non-academic crimes.

On Thursday, we came back to questioning ourselves: we, who comment on other people’s work and condemn them for their lack of integrity – is that worth our time? Unsurprisingly, we generally thought that yes, it was worth our time. In part, it can help fix the peer review process, it can teach others that a “published article” isn’t necessarily True, and just a little bit we thought that Andrew’s correspondent was a bit melodramatic – come on man, just because one guy sucks and has so far mostly gotten away with it doesn’t mean Everything is Terrible! My conclusion from this: there are jerks in every profession who cut corners, and sometimes that pays off, and sometimes it doesn’t. Academia is no exception to that general rule. Public shaming may help re-align the perverse incentives to plagiarize by raising the costs of getting caught.

Friday we re-examined the “should we criticize people” question in relation to journalism and the News-ification of academic results. This was considered in the context of a “selection effect” on publicizing research – we can’t let only the people who love some research speak for it in the press, because there is then no pushback. On the other hand – what’s the value of putting out news articles on findings that say “So and so claim to have found something but probably didn’t”?

So what have we learned from all of this? I think we pushed forward on the pros/cons of various post-publication review processes (blog comments, comments at a journal-run site, teaching and assigning replications in graduate courses, etc). And I think the back-and-forth with the orgtheory crowd was sort of amazing. What I got from that was a reminder that academics are human beings who are complicated and flawed and don’t always live up to either our expectations for them, or their own expectations for themselves. And these people have a responsibility to address problems in their work in various forms, but not a responsibility to always immediately and perfectly respond to (even well-intentioned) commenters of various quality who demand their attention. And we don’t have a very good way to let that happen yet – the costs of retraction are too big, the journal world frowns on comments (even self-comments), internet commenters are jerks with high-variance quality of insight, and the result is both an increase in “noise” in the literature, and people’s feelings getting hurt really quickly, on all sides.

Let me try the Twitter version:

Monday:

#Gelman plans one wk rampage: Scientific literature sucks. New academic goal: 20 lifetime Tweets.

Tuesday:

Sociologist can’t take post peer review blogging. Tl;dr key to kinetic action.

Wednesday:

Plagiarist shamed over the internet. Google will do justice with every search. #Fun

Thursday:

Went through journal publications like crap through a goose. #Why I only read the Onion

Friday:

Harvard faculty gets promotion after research featured in People. Justin Bieber tweets about it. #impact fctr

Saturday:

#Catharsis.


Some cool stuff, man.

1. You should have gone to see the launch.

2. As an OK HS wrestler and a much worse college boxer, my money is on the wrestler. I think anyone who has wrestled in high school and learned how moves can help you (even if they never boxed) would echo this. That said, huge respect for boxers. Very tough sport. Nothing like getting in the ring and facing another man’s punches in terms of the gut check.

What is sad is that all of the problems in this graph that are related to printing technology (setting type) haven’t gone away: people still have super hard-to-follow legends.

iirc, it was M Planck who said that science doesn’t progress because people discard old ideas, rather, science progresses because old people die and young ones are not taught the errors of the past

physics! Even astrophysics!