Christian Bartels has a new paper, “Efficient generic integration algorithm to determine confidence intervals and p-values for hypothesis testing,” of which he writes:

The paper proposes to do an analysis of observed data which may be characterized as doing a judicious Bayesian analysis of the data resulting in the determination of exact frequentist p-values and confidence intervals. The judicious Bayesian analysis comprises the steps which one would or should do anyway:

1. Bayesian sampling of parameters given the data, e.g., using Stan
2. Simulation of new data given the sampled parameters
3. Comparison of the simulations with actually observed data

Using frequentist concepts to do the comparison of simulations with observations, one obtains frequentist p-values and confidence intervals. The frequentist p-values and confidence intervals are exact in the limit of investing sufficient computational time. This holds true independent of the probability model used, and independent of whether the observed data consists of a few or many observations. As such the algorithm is a valid if not superior alternative to bootstrap sampling of frequentist parameter estimates.

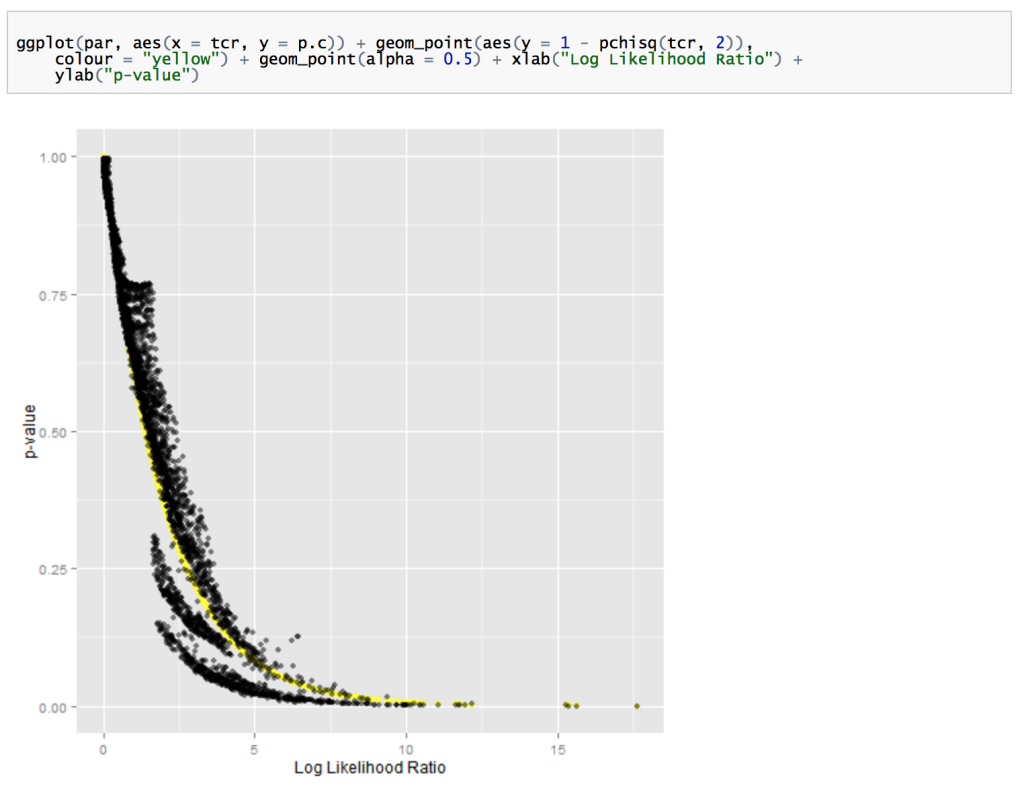

In the evaluation of the proposed algorithm, it has also been investigated to what extent Bayesian estimates may be used as a frequentist test procedure. It has been shown that this is feasible and simple, and that the results are comparable to those obtained with likelihood-ratio tests.

I haven’t looked at it in detail but, as described above, the approach reminds me of my 1996 paper with Meng and Stern in that we were originally thinking our largest audience would be users of classical statistics who would appreciate a stable and general approach for getting reasonable p-values in the presence of nuisance parameters. As it happened, the Bayesian-ness of our method was pretty much toxic to outsiders, but our paper did have some influence within Bayesian statistics (even as my own attitudes have changed, so that I still think model checking is important but I’m less likely to be using p-values, Bayesian or otherwise, in my applied work).

Bartels also includes some R and Stan code! See here for all the files.

Synchronous timing. Don Rubin just gave a talk at the Census about using frequentist operating characteristics to evaluate Bayesian procedures, championing calibrated Bayes.

I do recall posting once that everything in statistics is just the Radon–Nikodym derivative – or something as annoying.

It all seems to depend on the Harrison reference, which claims valid p-values even with zero draws from the Monte Carlo importance sampling (which I don’t doubt is true; it is just that _valid_ doesn’t mean much).

They also state “Finally, we recall the well-known fact that any valid family of p-values can be inverted to give valid confidence intervals.” Again, valid does not mean much.

And “Only a single Monte Carlo sample is required. The idea of using importance sampling to construct confidence intervals from a single Monte Carlo sample was pointed out in GREEN,P.J.(1992). Discussion of the paper by Geyer and Thompson. J. R. Statist. Soc. B 54, 683–4.”

That is true, or at least I used it in my thesis, and Green is the correct attribution of it (notwithstanding Stigler’s law).
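
For readers who have not seen the single-sample idea, here is a rough sketch of it in Python. It is an illustration under assumptions, not the estimator from the Harrison reference or from Bartels's paper: the model is a toy Normal(theta, 1), the test statistic is the sample mean, the reference parameter `theta_ref` is set to the observed mean, and the two-sided p-value is taken as twice the smaller tail. All names are hypothetical. One batch of data sets is simulated at `theta_ref` and then reweighted by the likelihood ratio to estimate the p-value at every other theta; the p-values are then inverted into a confidence interval.

```python
import numpy as np

rng = np.random.default_rng(1)

n = 25
x_obs = rng.normal(loc=0.5, scale=1.0, size=n)  # hypothetical observed data
t_obs = x_obs.mean()                            # test statistic: sample mean

# A single Monte Carlo sample of data sets, drawn at one reference parameter.
theta_ref = t_obs
n_sim = 20000
x_sim = rng.normal(loc=theta_ref, scale=1.0, size=(n_sim, n))
t_sim = x_sim.mean(axis=1)
sum_x = x_sim.sum(axis=1)
sum_sq = (x_sim ** 2).sum(axis=1)

def log_lik(theta):
    # Log-likelihood of each simulated data set under Normal(theta, 1),
    # up to an additive constant that cancels in the likelihood ratio.
    return -0.5 * (sum_sq - 2.0 * theta * sum_x + n * theta ** 2)

def p_value(theta):
    # Importance weights turn draws made at theta_ref into estimates at theta.
    w = np.exp(log_lik(theta) - log_lik(theta_ref))
    p_hi = np.mean(w * (t_sim >= t_obs))  # estimate of P_theta(T >= t_obs)
    p_lo = np.mean(w * (t_sim <= t_obs))  # estimate of P_theta(T <= t_obs)
    return min(1.0, 2.0 * min(p_hi, p_lo))

# Invert the family of p-values: keep every theta that is not rejected.
alpha = 0.05
grid = np.linspace(t_obs - 0.6, t_obs + 0.6, 241)
p_grid = np.array([p_value(th) for th in grid])
inside = grid[p_grid >= alpha]
ci = (inside.min(), inside.max())
print(f"95% interval from inverted p-values: ({ci[0]:.3f}, {ci[1]:.3f})")
```

In this toy case the answer can be checked against the textbook interval `t_obs ± 1.96 / sqrt(n)`. The weights become unstable when theta is far from `theta_ref`, which is one reason the choice of sampling distribution matters in practice.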

So likely some good ideas, but I am not at all convinced it’s all thoroughly worked out yet.

Maybe someone else will read it more carefully and post comments here ;-)

I do think it is important for importance sampling to be much more widely understood.

K? O’Rourke: Thanks for your short comments; they did help me get a better idea of what this is all about. It does sound interesting.

You might also want to read Conditional inference from confidence sets by George Casella http://projecteuclid.org/download/pdf_1/euclid.lnms/1215458835

(Valid 95% confidence coverage does not even guarantee you have extracted any information from the data.)
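
A standard toy illustration of that parenthetical remark, sketched in Python (it is not taken from Casella's paper, and the names are hypothetical): a procedure that ignores the data entirely, returning the whole real line 95% of the time and the empty set otherwise, has exactly 95% coverage for every parameter value.

```python
import numpy as np

rng = np.random.default_rng(2)

def useless_interval(data, rng):
    # Ignores the data: whole real line with probability 0.95, else the empty set.
    if rng.random() < 0.95:
        return (-np.inf, np.inf)
    return None  # None stands for the empty set

theta_true = 1.7  # arbitrary; the coverage is the same for any value
n_rep = 20000
covered = 0
for _ in range(n_rep):
    data = rng.normal(loc=theta_true, scale=1.0, size=10)
    interval = useless_interval(data, rng)
    if interval is not None and interval[0] < theta_true < interval[1]:
        covered += 1

coverage = covered / n_rep
print(f"empirical coverage: {coverage:.3f}")
```

The empirical coverage lands near 0.95 even though no interval ever says anything about theta.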

If I get this right, the claim that “no assumptions were made on the probability model” means that whatever the probability model is, it is possible to set this procedure up. However, in order to do that, the probability model needs to be assumed indeed, which is not the case for the nonparametric bootstrap. So suggesting that this pretty much does what bootstrap does but better is misleading. It is purely parametric.

As far as I see it, this is just a way to approximate lots of integrals required for getting confidence intervals from p-values in a certain way. It may be computationally efficient in doing this (probably this will depend on the prior, like everything that is Bayesian), but it is certainly not the next big hot thing for frequentists.

Actually, approximate lots of integrals required to get p-values that are guaranteed to be <= alpha with probability at most alpha, given the NULL?

Admittedly, there is something about the way the paper is currently written that suggests it might not be worth reading carefully.

Do you suggest I haven’t read this carefully? That may well be – but still, on looking it up once more, what I wrote doesn’t seem so wrong… (you add “given the NULL” to what I wrote but it’s rather given any parameter as if it was the null… have a look at the paper and if you still believe I got this wrong, please explain it in a way that I can get;-)

Sorry, I think I read your sentence “approximate lots of integrals required for getting confidence intervals from p-values” too literally. It seemed to suggest the integration was done after the p_values were obtained.

And by NULL, I simply meant the parameter point being considered in a given calculation of how often data like this or more extreme would happen, given the parameter value.

Yes, the proposed algorithm is a numerical method to efficiently calculate lots of integrals. In general, this is all that is needed. The alternative, which avoids the numerical integration, is to make assumptions that get rid of the integrals. The fact that the method does not require such assumptions is what makes it general.

Yes, the version that I have implemented and tried to describe requires parametric models. For parametric models, the method is comparable to the parametric or nonparametric bootstrap in that it is a general method to determine p-values and confidence intervals for any assumed model. It has the advantage that it also works in cases where the precision of the bootstrap is not clear, e.g., with sparse data or if your data has a complex hierarchical structure. There certainly exist situations where the method is not applicable and the nonparametric bootstrap is advantageous.