. . . and Kaiser Fung is unhappy. In a post entitled, “Princeton’s loss of nerve,” Kaiser writes:

This development is highly regrettable, and a failure of leadership. (The new policy leaves it to individual departments to do whatever they want.)

The recent Alumni publication has two articles about this topic, one penned by President Eisgruber himself. I’m not impressed by the level of reasoning and logic displayed here.

Eisgruber’s piece is accompanied by a photo, captioned thus:

The goal of Princeton’s grading policy is to provide students with meaningful feedback on their performance in courses and independent work.

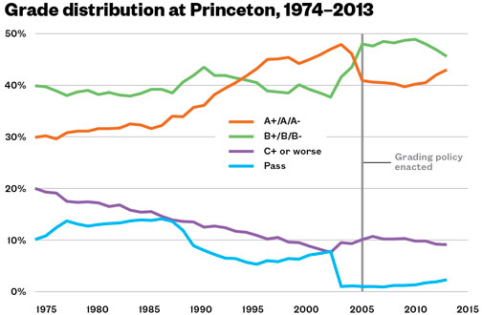

Such a goal [writes Kaiser] is far too vague to be practical. But let’s take this vague policy at face value. How “meaningful” is this feedback when 40% of grades handed out are As, and 80% of grades are either As or Bs? (At Stanford, Harvard, etc., the distributions are even more skewed.)

Here are some data:

My agreement with Kaiser

As a statistician, I agree with Kaiser that if you want grades to be informative, it makes sense to spread out the distribution. It’s an obvious point and it is indeed irritating when the president of Princeton denies or evades it.

I’d also say that “providing students with meaningful feedback” is one of the least important functions of course grades. What kind of “meaningful feedback” comes from a letter (or, for that matter, a number) assigned to an entire course? Comments on your homeworks, papers, and final exams: that can be meaningful feedback. Grades on individual assignments can be meaningful feedback, sure. But a grade for an entire course, not so much.

My impression is that the main functions of grades are to motivate students (equivalently, to deter them from not doing what it takes to get a high grade) and to provide information for future employers or graduate schools. For these functions, as well as for the direct feedback function, more information is better and it does not make sense to use a 5-point scale where 80% of the data are on two of the values.
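To put a rough number on "more information is better," one can compare the Shannon entropy of a compressed grade distribution with that of an evenly spread one. The sketch below is illustrative only: the 40% A and 40% B shares follow the figures quoted above, while the split of the remaining 20% across C, D, and F is an assumption made up for the example.

```python
import math

def entropy_bits(probs):
    """Shannon entropy (in bits) of a discrete distribution."""
    return -sum(p * math.log2(p) for p in probs if p > 0)

# 40% A and 40% B follow the figures quoted above; the C/D/F split is assumed.
compressed = {"A": 0.40, "B": 0.40, "C": 0.15, "D": 0.04, "F": 0.01}
# Hypothetical evenly spread five-point scale, for comparison.
uniform = {g: 0.20 for g in "ABCDF"}

h_compressed = entropy_bits(compressed.values())
h_uniform = entropy_bits(uniform.values())

print(f"compressed: {h_compressed:.2f} bits per grade")
print(f"uniform:    {h_uniform:.2f} bits per grade")
```

Under these assumed shares the compressed scale carries about 1.7 bits per grade, against about 2.3 bits for the even spread. Entropy is only one way to summarize informativeness; it says nothing about whether the grades are valid measures of anything.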

One can look at this in various ways but the basic psychometric principle is clear. For more depth, go read statistician Val Johnson’s book on grade inflation.

My discomfort

OK, now for my disagreement or, maybe I should say, my discomfort with Kaiser’s argument.

Grad school grades.

In grad school we give almost all A’s. I’m teaching a course on statistical communication and graphics, and I love the students in my class, and I might well give all of them A’s.

In other grad classes I have lots of grading of homeworks and exams and I’ll give mostly A’s. I’ll give some B’s and sometimes students will complain about that, how it’s not fair that they have to compete with stat Ph.D. students, etc.

The point is, if I really believe Kaiser’s principles, I’d start giving a range of grades, maybe 20% A, 20% B, 20% C, 20% D, 20% F. But of course that wouldn’t work. Not at all. I can’t do it because other profs aren’t doing it. But even if all of Columbia were to do it . . . well, I have no idea, it’s obviously not gonna happen.

Anyway, my point is that Princeton’s motivation may well be the same as mine: yes, by giving all A’s we’re losing the ability to give feedback in this way and we’re losing the opportunity to provide a useful piece of information to potential employers.

But, ultimately, it’s not worth the trouble. Students get feedback within their classes, they have internal motivation to learn the material, and, at the other end, employers can rely on other information to evaluate job candidates.

From that perspective, if anyone has the motivation to insist on strict grading for college students, it’s employers and grad schools. They’re the ones losing out by not having this signal, and indirectly if students don’t learn the material well because they’re less well motivated in class.

In the meantime, it’s hard for me to get mad at Princeton for allowing grades to rise, considering that I pretty much give all A’s myself in graduate classes.

P.S. Kaiser concludes:

The final word appears to be a rejection of quantitative measurement. Here’s Eisgruber:

The committee wisely said: If it’s feedback that we care about, and differentiating between good and better and worse work, that’s what we should focus on, not on numbers.

The wisdom has eluded me.

Let me add my irritation at the implicit equating of “wisdom” with non-quantitative thinking.

In grad school letters of recommendation are more influential than grades, and it is easier to maintain an informative distribution with letters.

In grad school two Cs and you are road kill and out of the program.

In my view, a summary grade for a course should be an indicator of the degree to which the student has achieved the learning goals of the course. Those goals, and appropriate measurable objectives, should be made known before the start of the course, and they should drive both the instruction and the assessments along the way. Ideally, course examinations should be valid measures of the extent to which those goals have been achieved. Employers and others similarly situated would then be in a position to know precisely what knowledge and skills an applicant has (or, at least, had at the completion of the course). Of note, in a situation like this, if everybody in a class earned an A, that would represent the ultimate success of the course: everybody learned what they were supposed to learn.

There are other issues as well. In the context of a graduate program, the students have already survived several iterations of selection just to be there. The ability and motivation of graduate students are typically extremely high and exhibit far less variation than one might see in, say, an undergraduate calculus course. With so little variation, it is extremely difficult to develop measures that discriminate achievement with much accuracy: the measurement error would have to be extremely small, probably smaller than is achievable in practice. It is unsurprising that nearly all graduate students get As in nearly all courses. Were that not the case, it would raise questions about what the admissions committee is doing.

Finally, imposing a specific quota for different grade levels does nothing but create artificial competition, by artificially creating a scarcity of A’s. Competition can be healthy, but experience suggests that in the academic setting that kind of competition brings out destructive behaviors such as cheating, plagiarism, and sabotage, at least as much as it encourages studying and conscientiousness.
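The point about low variation can be sketched with the classical test theory definition of reliability: the share of observed-score variance that reflects true differences between students. Every number below is an assumption chosen for illustration (a 15-point spread of true achievement in a broad undergraduate class, a 3-point spread in a selected graduate class, and 5 points of exam noise in both); none comes from real data.

```python
import math

def reliability(true_sd, error_sd):
    """Classical test theory: true-score variance over observed-score variance."""
    return true_sd**2 / (true_sd**2 + error_sd**2)

def required_error_sd(true_sd, target_reliability):
    """Largest measurement-error SD that still reaches the target reliability."""
    return true_sd * math.sqrt((1 - target_reliability) / target_reliability)

ERROR_SD = 5.0  # assumed exam noise, in points, the same for both classes

undergrad = reliability(true_sd=15.0, error_sd=ERROR_SD)  # assumed wide spread
grad = reliability(true_sd=3.0, error_sd=ERROR_SD)        # assumed narrow spread

print(f"undergrad reliability: {undergrad:.2f}")
print(f"grad reliability:      {grad:.2f}")
print(f"error SD needed for 0.90 in the grad class: {required_error_sd(3.0, 0.90):.1f}")
```

With the same exam noise, the assumed graduate class ends up with a much lower reliability than the undergraduate one. To match it, the exam would need an error SD of about one point, which is the kind of precision described above as probably unachievable.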

None of this is a rationalization for just giving out high grades that don’t reflect real accomplishment, of course. And the ideal assessment approach outlined in my first paragraph is not highly prevalent, and is difficult to put in place. But I do believe that is what we should be striving for.

Another point along this line: grades shouldn’t be an *internal* measure of gradation in performance (i.e., only within the given course), but rather a measure of the achievement of the students at the end of the course relative to an appropriate control, such as people who have not yet taken the course.

In our courses, do the assessment instruments and scoring systems for those instruments provide a valid measure of student learning and achievement (or whatever we want to gauge)? I’ve spoken to a number of folks who teach intro stats and it surprises me how often they haven’t thought much about this. They just reiterate the pedagogical decisions they experienced as an undergrad, without the same depth of consideration they apply to their own research.

It is good to start policy decisions on this by being very clear about the objectives of the grading system. Different institutions might have different objectives. I prefer criterion-referenced grading, because my desired objective is to provide a summative evaluation of a student’s breadth of mastered material. A particular grade in my courses indicates that sufficient competency has been demonstrated on a nested set of topics. I’ve had semesters where the majority of my class mastered all of the course content and I’ve given lots of As. I don’t want to spread out the distribution here, because it would not fit with my objectives. On the other hand, I’ve had semesters where lots of students didn’t do the work and didn’t perform.

I work with a lot of seniors who enroll in a research internship course. Many of them recently got an A in their intro stats course (that I didn’t teach), but they can’t explain (or recognize) the concept of sampling distributions. These students seem to have collected enough points to obtain an A without understanding much of the core material. This is one of the reasons I decided to move away from giving any points in my courses, and I’ve adopted a mastery teaching approach.

What should an intro stats student know, understand, and be able to accomplish in order to get an A? How about a B? Do traditional means of testing & scoring adequately gauge a student’s mastery on these things?

+1 for asking what is supposed to be being measured and asking faculty to think about it and also what their goals for students are. What kind of knowledge do you want students to leave your course with is really where to start.

In my experience most likely the final in intro stats involves doing a lot of “problems” involving sampling distributions but no essay question anything like “explain in your own words what a sampling distribution is and why it is an important concept.” And the latter is what you are asking them to do. If the test or other assessments are not asking them to do that and if the curriculum is not designed to teach them that then of course they are not likely to be able to do that.

How do we teach to achieve a goal like, “knows the material thoroughly enough to be able to (and know when to) apply it in subsequent classes and in the workplace”? How do we assess if we have achieved that? Those are the real questions to ask about whether the grading system is useful.

The first part resonates with me. I do a lot of authentic assessment: there are a lot of applied problems with real data. I know a lot of folks do the same. Students can apply recipes and get correct solutions, but often enough, in that context something will trigger some suspicion that a student may not have some of the connections figured out yet. I often give students an opportunity to demonstrate their understanding by following up on one of those typical “problems” with a question about how sampling error or sampling distributions are utilized in or related to the problem, which many have already addressed because I ask students to explain the concepts being used to solve the problem. Math is much more than just computation, but under the typical way students are assessed, they focus on memorizing formulas and computing correctly. We can’t get good insight into what students are thinking if we don’t have them articulate their reasoning.

The last part, I think, skips over a complexity that is at the root of the original post.

If we are teaching a pass-no pass (or better yet mastery learning), the above question is all we need, but if we have to assign grades then we have to also ask what material should an A student be able to apply that a B student can’t, and what can a B student do that a C student can’t…?

Yes, in the end you do need to figure out what letters to give, even though you might have larger goals that you could not even conceptually measure until meaningful time has passed. I’d much rather see tests with questions like you are asking, and I think a lot of times students will get lower grades because even if they can crank out the right answers their understanding may be weak (or in other situations their skills at communicating results may be weak). Then again if you stress those skills in your course and get better and better at teaching those maybe the grades will go up some over time.

So for assigning grades we can’t ask them questions 6 months later, but the questions are at least twofold. First, shouldn’t we at least once in a while go collect that 6 months later data anyway as part of assessing our own teaching? And second are there any leading indicators that we could use at the end of the course to predict that? That’s what I’m always trying to figure out.

I completely agree with you.

It seems to me that if one believes that grades should be spread out, then one thinks that grades are (mostly?) for comparing students to each other. But, why should teachers pit students against each other? If 50% of the students in my class are doing excellent work, then why should I not give all of them a grade that means “excellent”? Is it the job of the teacher to help companies (even graduate schools) determine who the best students are? I’m a high school mathematics teacher, and I don’t believe it’s my job to indicate to colleges which student of mine is best, which is second best, and so on.

I still don’t understand Andrew’s argument: If spread out grades are good why are grad students an exception? Why are grad students more lovable than undergrad?

Or is it just saying (a) change is inconvenient, (b) the transition might confuse outsiders who use the grade information & (c) Why should I be the one initiating change, even if in the right direction?

If indeed students get adequate feedback within their classes & they have internal motivation to learn the material why not just get rid of all grades?

One downside to grading and GPA’s more generally is that I have seen many students avoid hard courses bc they are worried it will affect their GPA, even if they might have excelled at it. They just don’t want the risk.

My philosophy in graduate school was that — for the first time in my student life — grades should not matter at all. Ultimately what matters is your research, and that should speak for itself. I don’t think employers should be hiring PhDs for their letter grades.

However, at the undergraduate level I think grading to a curve is important, in part bc they have no research to speak of. But also bc it is unjust that students that work very hard and are smart should be lumped with those that don’t do so well.

Rahul:

My undergrads are just as lovable as my grad students. My point is that it’s hard to change the status quo, and particularly hard to change in the direction of giving lower grades. So, yes, it’s (a), (b), and (c) of what you write.

I’ve never heard that the Princeton policy applied to anything but undergraduate courses. I’ve never heard of a US grad program that had grades below C unless there was an extraordinary situation where the student never attended class. C is like F in grad school. Any program that is admitting students who can’t get at least Cs in their courses is doing something dreadfully wrong in the admissions process and wasting faculty time.

“If indeed students get adequate feedback within their classes & they have internal motivation to learn the material why not just get rid of all grades?”

Grades are needed for subsequent selection processes such as additional schooling or hiring.

In the 1970’s, many US medical schools abandoned letter grades in favor of pass/fail, or honors/pass/fail systems. Those systems prevail to this day, but only as an illusion. Residency programs who hire newly minted MDs needed some way to distinguish the higher from the lower performing medical students. So, in short order, a covert grading system arose. In writing letters of recommendation, the Deans of students used a standardized set of 9 code words to summarize performance in each clinical course and to give an overall appraisal of the student’s potential. These 9 code words were soon universally understood by selection committees and corresponded fairly closely to the old letter grades (with + and -).

There were a few medical schools that tried to buck the trend and didn’t use the covert grading system. At the hospital where I was on the selection committee, we simply didn’t even bother reading the applications from those schools unless the applicant had done an elective at our institution so we had first-hand experience with his/her performance.

There is a legitimate need for indicators that discriminate levels of performance among students, and if the overt grading system does not provide that, other, perhaps less valid, indicators will be used.

I’m a statistician in a medical education office right now and am really interested in this comment. Clyde, could you say more about this? I assume you are talking about the MSPE? Is there a source online somewhere that talks about these code words? Are you still involved in student selection now?

Here you have Harvard disavowing the use of MSPE “code words,” indicating that the practice exists elsewhere.

http://hms.harvard.edu/departments/office-registrar/student-handbook/2-academic-information-and-policies/222-medical-student-performance-evaluation-mspe-deans-letter

Thanks, Kyle, this is helpful!

I have to imagine that in context, Harvard’s choice to “buck the system” had no negative impact on student placement! Indeed, if anything I’d expect it to have improved student placement, in the sense that the people at the bottom of the class weren’t tagged as “low-performing Harvard MDs,” but simply “Harvard MDs.” There’s probably a lesson in there about differential impact of grade inflation at different educational tiers…

…and then there are institutions like Reed College, where one of my children went; they don’t give grades at all. Professors would write letters of recommendation that reflect their experience with students that they know quite well.

Wouldn’t work at a major institution like Texas, where I taught. Too many students, large classes, you just can’t know more than a small handful of them well enough to write such letters (at least in the undergraduate sphere).

I’ve been teaching at the graduate level for 10 years now, and I feel that inflating grades like this is a cop-out. The reason most professors do this might be to be liked more or because they don’t want to go through the hassle of grading.

I grade anonymously on performance in exams, and as a result every year I get at least one aggressive email from grad students along the lines of “this grade is not good for my career” (the sentence does not continue with, “so give me a higher one”, but that’s what is implied), or “I did all the homework, how come I didn’t get an A?” (answer: you got 66% in the final exam). Undergrads *almost never* do this to me.

Sheffield’s School of Mathematics and Statistics, where I’ve taken exams in the MSc in Statistics, also grades pretty harshly on the graduate level, and they give very detailed feedback on homework. I find the final grade on Sheffield exams informative and helpful as feedback, in a different way from the homework feedback. It tells me what I can do under timed conditions. It’s a reality check for me as a student, and a useful metric for others. For companies hiring statisticians, it’s a data point in the overall performance of a potential statistics hire. It’s not the whole story, probably, but a student getting 50% (a bare pass), vs 65% or 75% gives me some indication of how much they know, as an employer. I might hire someone with a lower grade if they have other qualities I like (maybe they can write and communicate really well and that might be important to me).

PS I suspect I would not get such emails if I were a large white male with a prototypical professorial look (I was recently asked if I am a taxi-driver). So I’m curious to know if grad students do this to prototypical-looking professors too.

Shravan:

I’ve never been mistaken for a taxi driver, but many times when I’m in a store, people will come up to me and ask for help. Something about me screams “store employee.”

Cool! I’m glad I’m not the only one. I have a story like that one: once I was standing outside a public toilet in Hamburg, waiting for my wife, and an oldish guy came out of the men’s toilet and handed me 50 cents, thinking I was the toilet cleaner (the actual cleaner did come over quickly and grabbed my 50 cents off of me—that’s mine, she said). Then the guy asked me what I did for a living. I told him I’m a professor, and he looked pretty disbelievingly at me. When I look in the mirror, I do see where he’s coming from, though ;).

Is it a coincidence that this was Germany? I wonder….

Very hard to say, as I have never worked anywhere else in my life as a professor. I used to be a patent translator in a Japanese law firm in Osaka before I became a linguist. But the Japanese have a baseline level of politeness that puts Germany (and Europe in general) off the scale in comparison. My wife and I lived in the mid-west in the US (Ohio State) when I was doing my PhD there; Ohioans are about as polite as the Japanese (at least in person; an Ohioan inside a car is a different matter).

Also, in Japan I used to be always dressed up, suit and tie, because I worked in a law firm. Here in Germany, I often wear T-shirts and jeans. I worked in India as a Japanese->English/Hindi translator/interpreter, but I was in my 20s then, so that’s obviously not possible to compare with my German experience.

The Germans are the rudest and most aggressive group I have ever encountered in my life. Just last week my wife was taking our son (8) to school in the U-Bahn (Berlin), and a man was backing into our son, crushing him against the wall. My wife asked the man to please not push against our son, and he turned to her and said, “Are you out of your mind? Do you live alone? I am going to f*** you.” That’s a pretty normal experience for us in Germany. (She’s white Australian, by the way).

The Germans also seem quicker in assuming stereotypes, i.e., more likely to be surprised by a dark-skinned man in a tee being in a demanding / intellectual / high-pay profession.

Yes, that tendency might be true of the German population. In general, the Japanese assume less when they encounter a stranger; they tend to probe for more information first. It helps that in Japan a formal introduction begins with an exchange of visiting cards, which immediately sets the parameters.

In Germany, I too often have to assure people repeatedly that yes, I have a chair in university, I am a “real” professor, yes I am a Beamter auf Lebenszeit (civil servant for life), and yes, it really, really is a permanent position. There is one dialysis nurse in my (now former) dialysis clinic who used to ask me this question every six months over two years. He just could not believe I was in a permanent position. I have had long conversations with him where he’s questioning me, looking for the catch. He’s not the only one I’ve had such conversations with.

In Germany, there is a (former) politician called Sarrazin, who wrote a book recently (in German) which basically argued that Germany was becoming dumber because of the influx of Turkish immigrants, who supposedly have a lower IQ. Apparently, Turks are genetically predisposed to having a lower IQ. This position, which is implicitly endorsed by Harvard by producing Jason Richwine as a bona-fide PhD, captured the public imagination when the book came out some years ago. Sarrazin made 3 million Euros from the book’s sales in Germany; it was a bestseller. Bookshops in Berlin stocked the book at the entrance for easy access to shoppers.

I never read it because I could not bring myself to put money in his pocket. But I read the reviews and responses from scientists in newspapers. From the fact that this book was a best-seller in Germany, it might follow that far too many Germans buy the stereotyping idea.

I should add that there are nut-case right-wing extremists on the political scene in Japan too. I didn’t follow the scene there too closely though, so I don’t know how popular racial superiority ideas are in Japan. It certainly doesn’t percolate down to day-to-day interaction though.

In Germany, I routinely meet professors who vote left but say the same things against Turks that Sarrazin does. Many of these otherwise left-voting professors consider brown-skins as a type, with common characteristics. Just a few days ago a distinguished German professor in Potsdam was commiserating with me (for the fourth or fifth time that my living arrangement has come up with him) for “having to live” in a working class district of Berlin, where so many “terrorists” (this refers to Arabs and Turks) live. I told him it’s actually fine for me, because I look like a terrorist myself (and when I’m trying to solve a stats problem in my head while walking down the street, I really do look like a terrorist; people move a few feet away from me).

There is a recent study from the Friedrich-Ebert-Stiftung on the spread of stereotypes and racism in Germany which implied that those have spread more into the political “centre” and have become more mainstream. I have not really checked it myself yet and I don’t think there is any non-German version: http://www.fes-gegen-rechtsextremismus.de/inhalte/studien_Gutachten.php

Shravan:

“In Germany, I routinely meet professors who vote left but say the same things against Turks that Sarrazin does.”

What do you consider “left” here? The SPD (German Social Democratic Party)? It would surprise me a lot if those guys voted for The Left (Die Linke), although there are some people with racist attitudes in that party, too.

Hi Daniel,

I realized as soon as I wrote “left” that it would cause confusion. I meant center-left parties like SPD; I think of them as roughly along the same point on the x-axis as the US Democratic party (though as an outsider to Germany maybe I am wrong). For me center-left is about left enough. The Linke party always struck me as borderline lunatic fringe (they quote Lenin and Marx on their election posters, for example).

Sarrazin belongs to the SPD (a fact that astonishes me). The people I said vote left: I meant the people who vote for SPD. Sorry about causing confusion! After the Sarrazin incident, I myself stopped voting for SPD because they didn’t kick him out after his book came out.

Happens to me too. Most frequently in hardware stores. (???)

I get asked for directions a lot, even when I’m out of town. Riding the subway in New York City, staring at the system map like the confused soul that I am, people will ask me for directions.

Last month, I was cycling around Louisville in an Afro-American neighborhood. A carful of Afro-American women pulled up next to me and asked for directions. I explained that I didn’t know, since I was just visiting from Chicago. They said they were from Chicago also, and we had a good laugh about that.

Shravan Vasishth, now that I’m teaching I also get the same emails (or personal visits from people who haven’t visited my office all semester). If it’s any consolation, I’m a stereotypically tall old white male (although that is actor George Reeves in my avatar photo).

Note: I wrote my last paragraph above before Shravan Vasishth made his more expanded remarks above. I certainly experience nothing as a white male in America to compare to his detailed experiences.

Walking around London in 1980, I got stopped a half dozen times in four days by people asking directions. The first time I said I was from L.A. After that, though, I realized that being a tourist, I was carrying an enormous map that natives wouldn’t be carrying, so we’d look over my map until they had the complex route (and all routes in London are complex) figured out.

Never wear a red polo shirt when shopping at Staples! Still, I answered two out of three questions correctly.

A few things:

1) On grad school grades: Who actually gives a $#!&? Are there people who pay attention to them? Speaking as someone who hires people with M.S. and Ph.D. degrees, grad school grades are of zero interest. What matters is their research – good publications are a plus – and recommendations.

2) Providing students with meaningful feedback on their work should be a goal of professors and other instructors. “Provide meaningful feedback” isn’t a vague goal. The devil is in the details but it’s not a vague goal.

3) In and of itself, a letter grade is not particularly meaningful feedback. (And if everyone gets an A or a B then it’s essentially meaningless.) I concur with Clyde Schechter above – “a summary grade for a course should be an indicator of the degree to which the student has achieved the learning goals of the course”.

4) I also concur with Clyde’s comments re the ability and motivation of grad students.

5) My first two years as an undergrad I attended a very small college. Classes were small enough that professors gave written mid-semester reviews as well as end-of-semester reviews along with grades. That feedback was meaningful. (You were also free to see profs during office hours. All that I interacted with were committed to providing honest feedback and helping you work things through.)

In engineering, for those of us who applied for industrial jobs after the PhD, grades were definitely important. Almost all hiring firms asked for your GPA, had a minimum limit for applying & presumably took it into consideration during hiring.

I work for a large engineering and manufacturing company. My experience is that grades are something that HR may use as a filter but that hiring managers don’t care – at least I’ve never met one who does. Perceived quality of research and your recommendations are what get you an interview. If you get an interview then it’s your ability to communicate what you’ve done, interpersonal skills, and willingness to tackle new problems that are the biggest factors in securing an offer*.

Bad grades are a red flag for an undergrad and good grades are a mild positive, but an independent research project, or even a few summers working in a lab, is a much bigger plus. For grad students though, the presumption is that they’re motivated and skilled in their chosen area. While it would certainly be a red flag if someone failed a bunch of grad school classes, my presumption is that the quality of their dissertation is a much more meaningful indicator of their skill than class grades are. The bottom line is that if I’m going to hire someone then I need to be confident that they can handle themselves outside of a classroom. I need them to be able to apply the knowledge they acquired in the classroom. For better or worse, grades aren’t a reliable indicator of that.

* If you’ve been working for a while then you also need to withstand cross-examination re expertise you claim on your resume.

I agree. But I get the feeling that (unfortunately) HR seems to be getting relatively more say in hiring decisions over the last decade or two.

High school grades affect college admission.

College grades affect grad school admission.

But graduate school grades?

1. They don’t lead anywhere.

2. Almost 40 years after I left graduate school I have never had anyone ask what my GPA was in my PhD program.

3. When reviewing resumes I paid almost no attention to grad school grades, other than to wonder why someone bothered to waste a line on their 1 page resume. Put something interesting on there to talk about. I’m not going to talk about your GPA. I might ask about your Eagle Scout project or even what you learned from being a shift manager at Starbucks.

Then why do we give grades at the graduate level?

For me, as a professor, students’ performance in my stats course (of which the final exam grade is one part) has historically served as an important filter. This is because not every MSc student is going to do a PhD, and not every one of them is going to do a PhD with me. The quality of students’ subsequent research as MSc or PhD students is highly correlated with their grades.

In fields like statistics and computer science, many graduates with an MSc will enter the workforce without doing a PhD. Do grades matter in those cases? I don’t know at all; this is an information question.

In Sheffield, entry into the PhD program would depend more on the quality of the MSc thesis, less on the grades. For an MSc student, the grades can be interesting for his/her own information; at least, they have been really interesting for me as a student. I thought I really understood the Bayesian stats material well; but then I did the exam (3 hours, notes allowed, but no textbooks or other materials). I got 65%, which told me I was basically OK (this is an upper second). Most of my mistakes were due to my not knowing how to use a hand-held calculator (apparently I can’t do basic arithmetic operations unless they’re done in R).

One thing I should say is that here in Potsdam we give grades for the PhD dissertation and defence. I find that absurd. At the PhD level, it is nonsensical to make a distinction between summa cum laude and magna cum laude etc. You either pass or don’t pass. In Germany we waste a lot of time and energy trying to figure out if someone is a summa or a magna or whatever, where the real currency at this stage is their publication record and their abilities as a researcher. All those metrics will automatically reveal themselves in the cold, hard reality of being an academic in free fall post-PhD.

My experience with undergrad grading has been different. At my current employer, and during my own studies, high grades are nearly always reserved for the group that does really well. For example, about 5% of the graduating students get the highest honor at my current institution (equivalent to an average of A-, I guess).

In my undergrad they had a finer grade scale and highest honors were typically awarded to about 1 out of 100-200 students (changes a bit by field; more prevalent among mathematics and never in law).

When an undergrad here does “well” but not exceptional and gets his grade sheet converted to letters (maybe to apply to a US grad school), he’ll have a lot of B’s in there… It is often said that the unwillingness to go along in grade inflation puts our students at a disadvantage to other schools.

‘Fortunately’ grade inflation is slowly creeping in.

If everyone gets an A, why give grades at all?

The question is, is a grade a measure of syllabus coverage, or is it a percentile band? At the moment, it’s fuzzily assumed to do some of the job of both.

So why not simply give a two-headed grade, with one letter and one number: A0. A-F indicates the degree of syllabus coverage, by whatever measure, and 0-9 indicates which percentile within the class you fell in.

The advantage of this comes when you compare a B1 at school 1 with an A3 at school 2. Which one really did better?
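A rough sketch of how such a two-headed grade might be computed. The letter cutoffs, the use of a 0-1 coverage fraction, and the convention that digit 0 means the top tenth of the class are all assumptions made for illustration; the proposal above does not fix any of them.

```python
def two_headed_grade(coverage, class_coverages):
    """Return a grade like 'B2': letter = syllabus coverage, digit = class decile.

    Assumptions (hypothetical, not part of the proposal): coverage is a
    fraction in [0, 1], letter cutoffs are 0.9/0.8/0.7/0.6, and digit 0 is
    the top tenth of the class.
    """
    cutoffs = [(0.9, "A"), (0.8, "B"), (0.7, "C"), (0.6, "D")]
    # Absolute part: first cutoff the student's coverage reaches, else F.
    letter = next((l for c, l in cutoffs if coverage >= c), "F")
    # Relative part: how many classmates scored strictly higher.
    n_above = sum(c > coverage for c in class_coverages)
    digit = min(10 * n_above // len(class_coverages), 9)
    return f"{letter}{digit}"


class_coverages = [0.95, 0.85, 0.85, 0.75, 0.65, 0.55, 0.92, 0.81, 0.70, 0.60]
print(two_headed_grade(0.85, class_coverages))
```

With this convention, the letter can be compared across schools while the digit only says where the student stood within that particular class.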

Separating the ‘absolute’ and ‘relative’ aims of grading is a great idea, and doing something like what you propose has come up in nearly every conversation I’ve had about grades. Which leads me to wonder: is this actually done anywhere? Why doesn’t it happen?

Beyond the question of whether letters are meaningful or not, I remember reading about one issue that scarred me while I was a student in Canada. After this case came up, many other awkward subjective policies were revealed in the press. http://news.nationalpost.com/2012/11/13/student-sues-concordia-after-allegedly-having-his-a-dropped-to-a-b-due-to-a-grade-quota/#__federated=1

As a professor at a major public university, I am becoming increasingly uncomfortable with grading as a way to distinguish students from each other. This seems to me to be an old model of education: let’s separate the wheat from the chaff.

What if college education became more like job training? The goal there isn’t so much to distinguish between trainees, but to help all trainees master the material. In college courses, students could be given multiple chances to master the material (e.g., taking tests multiple times) until they demonstrate mastery. In this model, all students could get A’s if they have mastered the material.

This would be a great idea if the students were to intersperse the multiple test opportunities by going back and working on their understanding. But I fear at least some might study just to pass the test, which would lead not to mastery but to getting through the exam. One school of thought in Potsdam is: graduate students are there of their own volition, they are not children. Just provide them with the material. The expectation is that they will do the best they can, because *they* chose to come here. I can see the motivation for that argument. In any case, the acid test (for better or worse) for a grad student (PhD level) is publication record.

> In any case, the acid test (for better or worse) for a grad student (PhD level) is publication record.

This was a subject of discussion when I was a grad student 20+ years ago. Write your dissertation and then use it as the basis for publications, or write multiple papers and then integrate them to create your thesis? (I took the former route.)

I developed two undergraduate astronomy courses using the Keller Plan (https://en.wikipedia.org/wiki/Keller_Plan) for a number of years. The Keller Plan has the goal of having the students master the material, which has been broken into a number of small units. The students showed mastery by passing a short test on each unit with essentially a perfect score, and if they did not pass, they would study more and then be retested (with a different test that covered the same material) until they demonstrated mastery of the material of that unit. Students were assigned undergraduate tutors who had previously taken the course, and whose task was to answer student questions and guide their study, as well as to grade the unit tests and discuss the results with the students, particularly with regard to items that needed more work. The tutors were unpaid and were rewarded with credit for a three-hour course that they could take only once. We had weekly meetings with the tutors. There were ten students per tutor.

Two other professors taught these courses over the years after other duties interfered with my continuing them (large NASA project, department chair). They continued to teach the courses until one passed away prematurely, and the other left the university for another position.

I can imagine that the testing could be automated by a computer selecting appropriate questions from a test bank, but we did this by having a number of pre-prepared tests for each unit so that when a student had to repeat a test it could be done multiple times by selecting a different pre-prepared test.
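As a minimal sketch of the automated version imagined here, the selection step could look something like the following. The test identifiers and the fallback rule (reuse the pool once every version has been taken) are hypothetical; the courses described above used paper tests chosen by hand.

```python
import random


def pick_retest(unit_tests, already_taken, rng=random):
    """Pick a pre-prepared test for a unit that the student has not yet seen.

    unit_tests: identifiers of the tests prepared for one unit (hypothetical names).
    already_taken: set of identifiers this student has already attempted.
    If every version has been used, fall back to the full pool.
    """
    unused = [t for t in unit_tests if t not in already_taken]
    return rng.choice(unused or unit_tests)


unit_3_tests = ["unit3-A", "unit3-B", "unit3-C"]
print(pick_retest(unit_3_tests, already_taken={"unit3-A", "unit3-B"}))
```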

There must be many ways to implement a course structure that takes Michael’s goal of mastery as its goal. This is one approach that we found quite successful.

In all fairness, it isn’t that bad to have 90% of the weight on 6 of the 7 data points. When someone gets any kind of C, D, or F at Princeton it means they got bad advice and took a class out of their league or got depressed or something. Basically it is one big category “outlier.”

What bothers me is t

If you have plus and minus grades (as most elite institutions do), then you’ve got more than 5 grades. For example, when I taught at Duke the average student earned something like a B+ in my classes (because they did the work at or above the standards I set for them, consistent with how I graded when I taught at less selective institutions) but having plus and minus grades meant I certainly wasn’t putting most in one grade or another. Even assuming that the “gentleman’s C” was a D or worse elsewhere (which is a stretch), and there’s no A+, you’re still looking at five distinct, passing grades above a C: A, A-, B+, B, and B-.

By contrast, where I teach now, a far less selective institution, we effectively have four passing grades (A, B, C, or D) since we lack plus or minus grades.

I actually think that you don’t need more than four passing grades. Where I taught (Texas) plusses and minuses were not recorded or counted. The qualifying plusses and minuses reflect precision that just isn’t there. It’s not like “B” is a well-defined level with B+ and B- well into the tails of the distribution of B grades. I can distinguish between excellent work and good work; I can’t distinguish grades of “excellent” or “good”.

The problem with grades is that almost no instructor sits down and carefully considers what a student would have to learn and demonstrate was learned for each letter grade. After that, the instructor should make the rubric part of the syllabus for the course and construct exams appropriately.

Failing that, the situation is a crap shoot. Moreover, unless this is done on a national scale, there is almost no point to it, especially for selective institutions such as Columbia or Princeton as compared to more run of the mill places. In chemistry, the ACS publishes nationally normed exams which may or may not be used, often depending on the ability of the instructor to absorb reality. They are certainly not used at places like Columbia or Princeton although Eli could be dissuaded about that last point.

That’s a thought. (FWIW, my department wasn’t ACS certified – or whatever ACS’s designation is – so I never saw the exams. My GRE scores were okay though so presumably my undergrad instruction was on-target.) I’d think that normed exams would be much more straightforward in science and engineering than in humanities, the social sciences, or arts however. Also, and more significantly, how well is a normed exam going to test a student’s ability to apply what they learned in the classroom? Any problem of significance is going to require you to chew on it for days, weeks, months, maybe even years. A multi-hour exam isn’t going to test one’s ability to tackle really hard problems. Someone who scores well on a multi-hour exam will probably make a good lab tech but may or may not have what it takes to be a good researcher. Timed exams are a reasonable way to test vocational training but they are at best unpredictable tests of people’s ability to think creatively.

Huh. That is exactly how it is done at my institution.

The total mark (which is, eventually, out of 100) is an average of the marks for four different criteria. For each criterion the tutor may choose grades from something like Excellent, Very Good, Good, … (which are then mapped onto numbers to make the whole thing work out into something that looks like percentages). There is a list of five-ten things which are to be covered in each criterion and it is clear that if the student has achieved all these, they will get excellent, some, perhaps, Good, etc.

I am studying languages and these criteria are general things which apply to all assignments (with variations for written/spoken assignments/interactive exams) such as use of appropriate language, complex structures, suitable source material, rather than specific points to learn.
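A small sketch of the arithmetic behind such a scheme. The descriptor-to-mark mapping and the name of the fourth criterion are invented for illustration, since the actual numbers used are not given above.

```python
# Hypothetical mapping from descriptor to a mark out of 100.
DESCRIPTOR_MARKS = {
    "Excellent": 85,
    "Very Good": 75,
    "Good": 65,
    "Pass": 50,
    "Fail": 30,
}


def total_mark(criterion_grades):
    """Average the per-criterion descriptors into a single mark out of 100."""
    marks = [DESCRIPTOR_MARKS[grade] for grade in criterion_grades.values()]
    return sum(marks) / len(marks)


assignment = {
    "appropriate language": "Excellent",
    "complex structures": "Very Good",
    "suitable source material": "Good",
    "task completion": "Very Good",  # hypothetical fourth criterion
}
print(total_mark(assignment))
```

The per-criterion descriptors are the informative part; the averaged mark is just the summary that ends up on the transcript.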

Meaningful must assume some population, but should that be the class, department, school, higher ed, or general pop? Arbitrarily strict levels can be demotivating, but also relaxing: there is no need to work hard when you know it won’t make a difference.

My vague impression is that the +s and -s are now highly important: e.g., a B- today is like a D in the 1960s. If that’s consistently true, then that’s fine. But if it’s not consistently true that’s a problem. Plus, what do we do after the next round of grade inflation?

Another recent trend is the elimination of A+. I haven’t figured out the reasoning behind this one, especially in the face of the amount of As being doled out.

I’m coming late to this thread. Update: Princeton faculty approved the plan to abandon the “deflation” policy; they predict that grades will not drift upwards (this is too good not to put on record!)

On grad school grades: Haven’t given much thought to these but I agree that they have little impact.

On the amount of As: If you look at the curve over time, do we seriously believe that 80-90% of all undergrads across all classes deserve As or Bs?

On students competing with each other: I don’t understand this argument at all. Students who want to get into medical school, PhD programs, etc. are allowed to compete but not the average student? It’s ok for students to compete for summer internships and jobs on Wall Street? When they join a company, they compete with other analysts for promotion and salary increases. What’s wrong with fair competition?

On why we inflate grades: Unfortunately, the students/parents have figured out the game. Arguing over grades is a loss of productive time for faculty. As I pointed out in the blog post, we face a prisoner’s dilemma: being the odd person out giving lower grades while others inflate grades is not an equilibrium.

When students apply to a job they’re competing with people drawn from a large applicant population. When 8-12 undergraduate students compete within a single class at a single university for a full-spectrum evaluation… you don’t have the actual measurement precision to really rank these students in a meaningful way, and furthermore you incentivize gaming the system by under-cutting the other students in the class etc.

It’s entirely reasonable to me that if I had say 12 senior engineering students in a course on say wood construction design methodology, and I had them work in groups of 3 on a semester long project… that forcing one group to get an A, the next to get a B, the third a C and the final a D would horribly distort the experience. Most likely 3 of the groups will in fact deserve an A and the third would deserve a B, or maybe even all four groups would deserve an A. They just don’t get to be senior engineering students without being able to do a good job on such a project.

Even in courses where there is no group work, it wouldn’t surprise me that at the 3rd and 4th year 80% of students really would deserve an A.

In the first 2 years… things are much different, but GPA doesn’t tell the whole story there either… we really have a time-series and extrapolation problem: how much does it really matter that a student got a C+ on a 2nd year dynamics course if they are later getting an honest A in a computer-aided dynamical structural modeling course as a senior?

Things may be different in programs that don’t build on earlier concepts as much. If you take 4 history courses, each one about a different period and location, you might be able to say that each one of these stands as an “independent” data point. If you take a first year course in basic mechanics, and in your second year take one semester of statics and one semester of dynamics, and in the third year you do design of structures involving statics and dynamics, and in the fourth year you do a series of projects applying design principles using statics and dynamics to real world projects taken from local construction plans…. it’s not in any way a set of N “independent” courses.

My perspective on this question is probably different from everyone else’s: I teach at a school (at both undergrad and grad levels) that does not assign grades. Every student receives a narrative evaluation, and their transcript can get thick, fast. My general impression is that students don’t suffer for this; for instance, we are very successful in placing undergrads into grad schools. On a practical level, the disadvantage recruitment committees face with these thick transcripts is more than offset by the much more detailed information they contain, and which can be distilled into quite persuasive letters of recommendation.

Nevertheless, we are regularly called upon to convert these narrative evaluations into letter grades — for some programs one size must fit all. This is always a source of frustration, but useful as a reminder for why grades fall short.

The simplest explanation is that student performance is a vector, and a grade is a scalar. My courses work on many skills simultaneously. A handful of students master each one of them, and a somewhat larger number fails on each one, but the vast majority have a mottled result. My narrative evals follow a rubric in which I convey these multiple outcomes, but they also do two other things. First, they try to provide an integrated account, portraying a whole student. This integration is not evaluative (it can’t be summed up by a letter grade), but it tries to provide useful information to future teachers/employers regarding the student’s approach to learning, work habits, depth of engagement with the subject matter, etc. that goes beyond a simple description of past performance. These are nearly always presented in a positive light, since nearly all of my students are well-intentioned and likable, but the underlying story is honest. Second, I give the basis for my evaluation(s): I describe the work performed by the student and explain how I inferred their accomplishments from what was visible to me. This “epistemological” dimension is important, IMO, since we faculty too are ultimately sampling and drawing inferences. I am on more solid ground about some assessments than others. I can convey this directly through a narrative written in the first person.

Now, the interesting thing is that, even though we don’t have letter grades, we still have grade/evaluation inflation! The reason is that across much of the college there is an implicit contract between students and faculty: the faculty will take a relaxed attitude toward teaching (not actually reading a lot of student work, for instance), while students are grateful for the anticipated glowing evaluations. It is an easier life for both of them. I should be clear that extreme, out-and-out cases of mutual gifting are rare, and the problem reveals itself in shading on both sides — softball evaluations, somewhat relaxed approaches to teaching. It may well be the case that the advantages of narrative evaluation are so large, not only for evaluation per se but also how it structures teaching, that these “soft” courses are still better than what students typically find at other schools.

From my point of view, a negative aspect of this inflation is that it externalizes costs onto those of us who don’t participate in it. I *do* read student work carefully, and my job is more difficult because prior faculty didn’t, and didn’t work with students to improve their writing skills, for instance. And the math side of things…..well, don’t get me started.

Bottom line: what I worry about most is not the inflated grade or evaluation itself, but the implied quid pro quo.

This is an interesting perspective. I really like the vector-vs-scalar thing.

Regarding quid pro quo, Val Johnson’s book on grade inflation devotes a lot of real estate to that topic. Actually, I’m most of the way through it and I feel like that’s mostly what the book is about.

Great post and great comments.

I’m surprised nobody mentioned the issue of honesty. As someone who went through public and private schools as well as attended grad school and was a teaching assistant, I have been through classes with harsh grading, easy grading, and pass fail. They each have pluses and minuses but I think these are second order issues. I have always thought the first order issue about grade inflation is dishonesty: it’s deceptive to give everyone A’s because it implies that they are all excellent when it generally isn’t the case.

Sure, people in the know are calibrated to the institution and can usually figure out how much information grades convey but there are plenty of people (e.g., employers) who are not appropriately calibrated. Grade inflation lies to such people.

I think the reason that grade inflation occurs is because students clearly have an incentive for grade inflation, professors don’t like dealing with the hassle of giving more meaningful grades (both because it requires effort to sort students on ability and because students object and make trouble), and institutions have an incentive to keep the paying customer happy. Given those strong pressures, I suspect grade inflation will generally continue (at least at expensive private universities).

But here is my key point which I would appreciate feedback on: If universities cannot be honest about one of their most basic functions (grading students), how on earth can they expect students to be honest and not cheat on exams, inflate qualifications to gain acceptance to the university, and shirk unpleasant assignments?

Thanks,

-Enonymous

Is the goal education or filtering? If it is education, then mastery is a better goal. If filtering, then handing out grades by IQ and dispensing with the class would be more ‘honest’. There is nothing ‘honest’ about filtering.

I have been thinking a lot about grading systems over the last couple of months for the purpose of understanding class rank, and it’s becoming clear to me that the whole enterprise rests on measurement: how closely do your grades correspond with the construct you care about, and how well do they correlate with each other? I guess from the standpoint of statistics this is an embarrassingly banal observation. But from the standpoint of a person who’s spent a frillion years as an educational “consumer,” it’s kind of surprising, given that left to their own devices, professors pretty much seem to wing it. The vast majority won’t have had coursework about how to design a decent test. As far as I know nobody’s doing any data mining to correlate performance on their tests with some down-the-road outcome they care about. Hell, for college students who go straight into the workforce, there basically is no down-the-road outcome we can all agree matters — that’s part of what is so charming and so maddening about the American higher ed system. As I noted above I’ve spent the last year+ working in medical education, where the expectations of what a graduate should look like are a little better-defined, and (at least here) there’s a little more input into curriculum from people who have explicit training in education. But even here, designing good assessments for this context is still not a completely solved problem.

To bring grade inflation back to the discussion, I guess it’s a given that if you have significant grade inflation, your grades won’t correlate well with the construct you’re trying to measure. But the inverse is not true.

P.S. Incidentally I am looking for resources that talk about putting confidence intervals around ranks. I already know about the most recent NRC rankings of doctoral programs, which were expressed as 95% CIs, but am wondering what other approaches people have devised. Anyone have suggestions?

Not sure whether this is what you need, but here goes. There was a cool exercise on rankings I did in a Bayesian statistics course, which involved working out the uncertainty for each rank (hospital deaths). Here is the code (JAGS, this is before I learnt to use Stan).

https://gist.github.com/vasishth/d9c899fc01343901e470
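For anyone without JAGS installed, here is a rough Python sketch of the general idea of putting intervals around ranks. It is not the code in the gist: it assumes independent Beta(1, 1) priors on each unit's death rate, draws from the resulting Beta posteriors, ranks the units within each draw, and reads off quantiles of the simulated ranks. The counts in the example are made up.

```python
import random


def rank_intervals(deaths, cases, draws=2000, level=0.95, seed=1):
    """Simulate posterior ranks for each unit under independent Beta(1, 1) priors.

    Returns one (lower, upper) rank interval per unit; rank 1 = lowest rate.
    """
    rng = random.Random(seed)
    n = len(deaths)
    ranks = [[] for _ in range(n)]
    for _ in range(draws):
        # One posterior draw of each unit's death rate.
        rates = [rng.betavariate(1 + d, 1 + c - d) for d, c in zip(deaths, cases)]
        # Rank the units within this draw.
        order = sorted(range(n), key=lambda i: rates[i])
        for position, unit in enumerate(order, start=1):
            ranks[unit].append(position)
    tail = (1 - level) / 2
    lo_idx = int(tail * draws)
    hi_idx = int((1 - tail) * draws) - 1
    return [(sorted(r)[lo_idx], sorted(r)[hi_idx]) for r in ranks]


# Made-up counts for four hospitals: deaths out of cases.
deaths = [4, 10, 12, 30]
cases = [100, 120, 110, 150]
for hospital, interval in enumerate(rank_intervals(deaths, cases), start=1):
    print(hospital, interval)
```

Units with similar rates and small counts end up with wide, overlapping rank intervals, which is usually the point of the exercise.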

Wow, that’s great — thank you so much! I’ll play with it.

Is this a course you taught, or a course you took? Is there a syllabus online? I’ve not had any instruction in Bayesian analysis besides reading this blog. (I do use R, though, so I’ve got the computational part down.)

I also teach this now to grad students in Linguistics, but I did a course as a student in the MSc programme in Statistics at Sheffield’s School of Mathematics and Statistics. They offer the whole MSc online (you have to go to Sheffield for the exams every year). The syllabus is online: the level 6 courses are graduate level.

http://maths.dept.shef.ac.uk/maths/module_list.html

The Bayesian methods course is here:

http://maths.dept.shef.ac.uk/maths/module_info_1421.html

I keep notes on the courses I did here:

https://github.com/vasishth/MScStatisticsNotes

These notes are not complete yet.

The Sheffield classes are great, I highly recommend them.

There’s also a nice course I did in Copenhagen back in 2012 or so. It was one week of Bayesian statistics, really great and totally worth it.

http://bendixcarstensen.com/Bayes/Cph-2012/pracs.pdf

My problem is that the arguments about the distribution of grades overlook the difference between assignment of grades based on *relative* performance (10% A, 20% B, 40% C, 20% D, 10% F, regardless of the average level of performance) and assignment of grades based on meeting a standard of performance (which is, after all, the idea behind mastery learning).
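To make the contrast concrete, here is a small sketch of the two assignment rules applied to the same class. The 10/20/40/20/10 split comes from the comment above; the 90/80/70/60 cutoffs for the standards-based rule and the example scores are assumptions for illustration.

```python
def grade_on_curve(scores, shares=(("A", 10), ("B", 20), ("C", 40), ("D", 20), ("F", 10))):
    """Assign letters by rank so that fixed percentage shares get each grade."""
    n = len(scores)
    # Student indices ordered from best score to worst.
    order = sorted(range(n), key=lambda i: scores[i], reverse=True)
    grades = [None] * n
    cumulative, start = 0, 0
    for letter, share in shares:
        cumulative += share
        end = (cumulative * n + 50) // 100  # rounded cumulative head count
        for i in order[start:end]:
            grades[i] = letter
        start = end
    return grades


def grade_on_standard(scores, cutoffs=((90, "A"), (80, "B"), (70, "C"), (60, "D"))):
    """Assign letters by fixed thresholds (hypothetical cutoffs), ignoring rank."""
    return [next((l for c, l in cutoffs if s >= c), "F") for s in scores]


# A class in which everyone has met the (assumed) standard for an A.
scores = [99, 98, 97, 96, 95, 94, 93, 92, 91, 90]
print(grade_on_curve(scores))
print(grade_on_standard(scores))
```

Under the relative rule the student with 90 fails; under the standard-based rule the whole class gets an A. Neither output says anything by itself about whether the standard was set at the right level.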

Suppose I have a set of concepts/theories/whatever that I want students to master. I devise instruments that measure their mastery accurately. They all master the material. Why should I distinguish “levels” of mastery?

I know that some people will argue that this has not (ever? generally?) happened in (any? all? most?) classes. Their evidence is that the typical grade distribution has shifted upward. That shift could equally be evidence that we (as faculty or as students) are doing a better job of teaching/learning. In most cases, right now, we do not have evidence as to which is occurring. Kaiser Fung *thinks* he knows that neither teaching nor learning has improved. But that’s an assumption, not a fact.

On the other hand, neither do we have a lot of evidence that teaching/learning has improved.

So there’s a lot of space out there for someone to do something useful, instead of crying “Grade inflation, bad!” or “Faculty control, good!”

I basically use a version of a mastery model when I teach research methods. Since I don’t have the selection issues that Princeton and Columbia have, my students typically rather nicely sort themselves out into those who have mastered everything, those who have mastered maybe 80%, those who have mastered most, and those who really haven’t, whether because they don’t get it or because they are distracted by other things (as mentioned above they could be depressed or have too many courses or in my case they have work or family issues or really not have the preparation needed). The time limit of the semester helps with creating that distribution. And if 100% of students learn something perfectly, that’s great, but maybe you pitched it a little low so next time adjust. (Though because I do many pieces I do have some I expect 100%, especially content early in the semester.) It does require a lot of rethinking if you are used to a different model and in my first week of the semester optimism I always think maybe they will all get 100% and then won’t I be in trouble for having all As, but it has never come close to happening.

I really find it fascinating that all the ways of thinking about data and measurement that are applied to most topics go out the window when talking about teaching. I think all the time about two questions, 1. How can I actually know if students understand the material? 2. How can I know if I had anything to do with whether students understand the material?

If your students do not get an A, either you selected students inadequate for the course or you fail in teaching. Small grades are for those admitted on “might work” basis with “didn’t work in the end” result.

Anyway the only two grades worth working for are 100 or 60.

100: you master the subject.

60: good enough for most jobs.

80: you worked too hard in a subject you don’t master.