Robert Grant, Daniel Furr, Bob Carpenter, and I write:

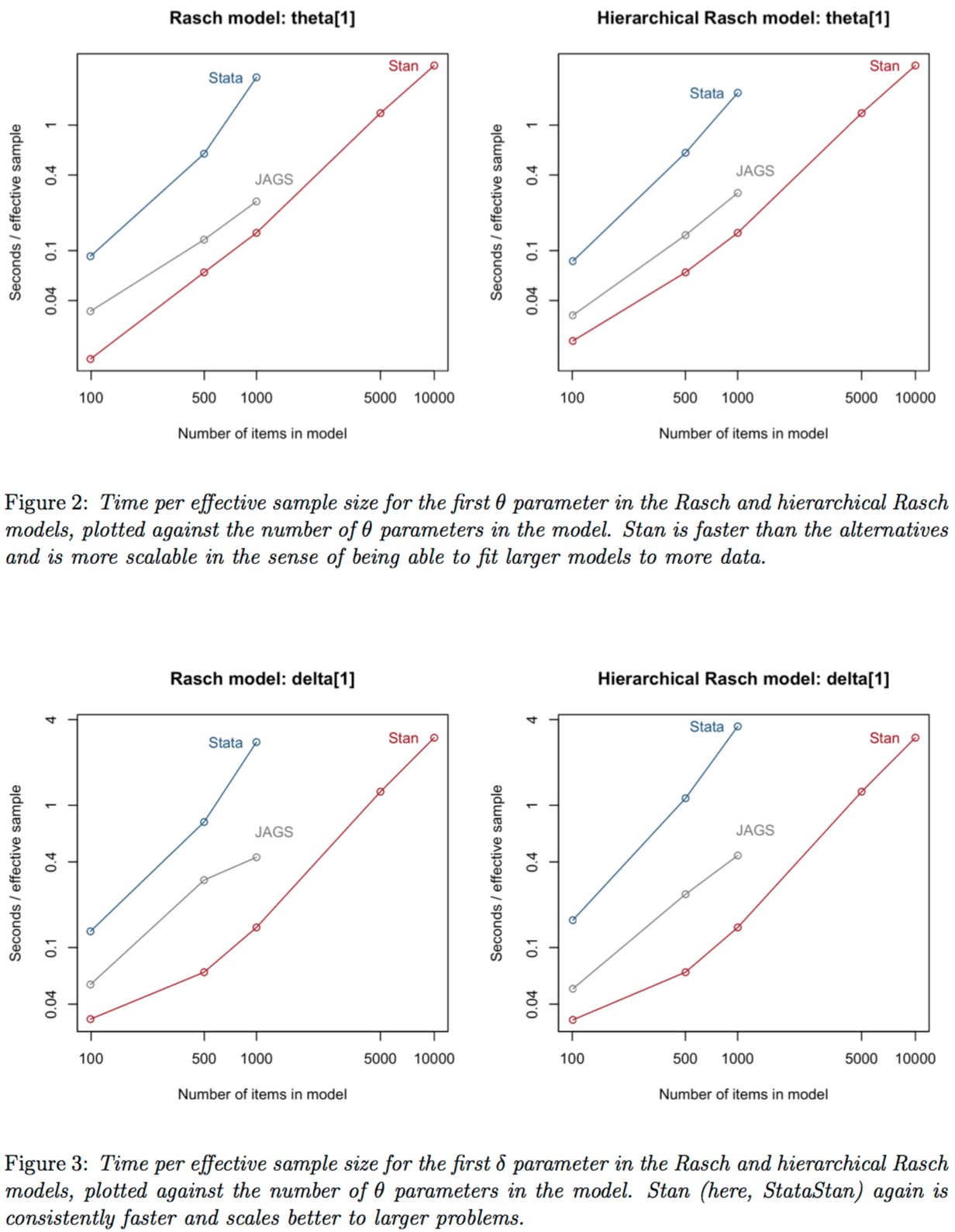

Stata users have access to two easy-to-use implementations of Bayesian inference: Stata’s native bayesmh function and StataStan, which calls the general Bayesian engine Stan. We compare these on two models that are important for education research: the Rasch model and the hierarchical Rasch model. Stan (as called from Stata) fits a more general range of models than can be fit by bayesmh and is also more scalable, in that it could easily fit models with at least ten times more parameters than could be fit using Stata’s native Bayesian implementation. In addition, Stan runs between two and ten times faster than bayesmh as measured in effective sample size per second: that is, compared to Stan, it takes Stata’s built-in Bayesian engine twice to ten times as long to get inferences with equivalent precision. We attribute Stan’s advantage in flexibility to its general modeling language, and its advantages in scalability and speed to an efficient sampling algorithm: Hamiltonian Monte Carlo using the no-U-turn sampler. In order to further investigate scalability, we also compared to the package Jags, which performed better than Stata’s native Bayesian engine but not as well as StataStan.

Here’s the punchline:

This is no surprise; still, it’s reassuring to see. (The lines in the graphs look a little jagged because we did just one simulation, from which the results are clear enough.)

Stan’s real advantage comes not just from speed but from flexibility—Stan can fit any continuous parameter model for which you can write the log-density—and from scalability: you can fit bigger models to bigger datasets. We’re moving closer to a one-size-fits-most data analysis tool where we don’t have to jump from program to program as our data and modeling needs increase.

I wouldn’t necessarily say these are the most fair/appropriate comparisons given the different stages of development/maturity of the different platforms. The Bayesian tools in Stata were only implemented in the most recent release of the software (e.g., < 2 years old). However, its still nice to see some of the comparisons. Is the code from these performance tests going to be released? The typical way that Stata parameterizes and fits a Rasch model is computationally different from other IRT software (e.g., treating items as nested within students and theta as a random effect), so it isn't clear what exactly is meant by the hierarchical Rasch model here (e.g., are students nested within some other context?).

Billy:

Sure, we can definitely post all the code publicly.

Regarding your first comment: StataStan already exists and is flexible, scalable, and fast, so it’s not at all clear to me why Stata would want to build its own inference engine: they’d basically be putting in a lot of work to fit just a few models that we can fit faster in Stan anyway. I think it would make much more sense, if they’re interested in developing Bayesian inference tools in Stata, to work on some interface that would allow users to express various models in a Stata-like syntax and then have them fit using StataStan. Best of all worlds. For Stata to build its own Bayesian inference engine would like for me to try to build my own computer from scratch and then write my own text processing software. I’d rather just buy a Mac and then use Emacs, Latex, Word, etc.

I agree, it is a bit unfair to put free software built by a rag-tag bunch of academics mainly working in their spare time up against the product of a professional software company whose software sells for thousands of dollars (single use perpetual licenses for non-academics are over US$1500 and are restricted to a single core; the multi-core license is US$3000; or you can pay half that per year if you prefer).

The right way to calibrate isn’t time so much as how much was spent building the software. It’s hard to cost out given that Stan’s embedded in our research and Stata’s Bayesian software’s embedded in the rest of Stata. But I could believe we’ve put in more time and effort all told.

And some of us have professional programming backgrounds. So that “rag-tag bunch of academics” could be challenged.

More seriously, though, competitive evals like this are hugely challenging to carry out. We bent over backwards to give every advantage to the other systems; a simple reparameterization would’ve doubled our advantage, but Andrew wanted to be more fair and consistent and use exactly the same Stan code regardless of data size.

When Andrew originally asked me how we’d stack up, I said what I usually say — Stan will be on the order of the half as fast to infintely faster, depending on the problem. The biggest win for Stan is in much more complex problems that will simply defeat the more traditional sampling methods.

I’ve used stan on problems with thousands of parameters, and hundreds to thousands of data points, with hierarchical complex models involving various special cases, with biology data involving decompositions of things into measurement error per-lane, per gel machine, per pipetter, per plate, per sample…

It just eats up anything I give it, and I don’t think I’ve even once gone for coffee during a run. I get useful results in typically 10 minutes or less for any problem I’ve thrown at it so far. It is quite fantastic.

When things are slow I typically just run a small set of samples, like maybe 200 instead of 5000, and honestly in many cases those 200 samples are good enough for my purposes. Hamiltonian Monte-Carlo is awesome.

I’ve had some problems that will take upwards to 1-4 hours. But that’s with big data sets with hundreds of parameters.

I’ve had some problems where a naive implementation takes a LONG time, and then proper vectorization and reparameterization makes it run much more reasonably. I think that’s one thing Stan practically requires is that you think about efficiency in implementation, because it can be orders of magnitude different to write a loop vs do some vector operations and in part that may be due to the auto-diff stuff.

In any case, while I’m sure it’s possible to choke Stan handily, it doesn’t happen for lots of real-world cases even when the models are quite complicated compared to something you could do in lmer or something like that.

It would be fun to come up with a minimal model that makes Stan choke.

i.e. Besides size, come up with other pathological model characteristics that make it choke. A bit of Code Golf.

Here is a puzzler for you:

require(rstan)

a=10; b=20; c=3; N=100

x=rnorm(N, 100, 30)

y=(a*x+b-c)/sqrt(b*c) + rnorm(N,0,10)

STANmodel = ‘

data {

int N; real x[N]; real y[N];

}

parameters {

real a; real b; real c;

}

model {

for(i in 1:N){

y[i] ~ normal( (a*x[i] +b – c)/ (b*c)^.5 , 10);

}

}’

fit=stan(model_code = STANmodel,

data = list(N=N, x=x, y=y),

iter = 1000, chains = 3, warmup=100)

fit=extract(fit)

par(mfrow=c(3,2))

plot(fit$a, type=”l”); hist(fit$a)

plot(fit$b, type=”l”); hist(fit$b)

plot(fit$c, type=”l”); hist(fit$c)

res=cbind(rbind(median(fit$a),a),rbind(median(fit$b),b),rbind(median(fit$c),c))

rownames(res)=c(“Estimated”,”Actual”); colnames(res)=c(“a”,”b”,”c”)

res

Output:

http://s11.postimg.org/68peiww5d/stan.png

> res

a b c

Estimated 108822703 5002033 7058593

Actual 10 20 3

By the way, is there a correct way to embed code in these comments? I have no idea how the above is going to show up.

It’s easy to come up with a minimal model that makes Stan choke, it’s just not much fun because it would make everything else choke too (e.g.- x ~ student_t(….) with low df). It’s about as much fun as making as C program sefgault.

Stan is the honey badger of Bayesian statistics, simple as that.

Not sure why you would infer anything about the quality of the folks working on StataStan from what I had said previously. Like you said, the development of Stan is inherently integrated in the research you conduct. So, it would make sense that after the initial development there would be significant time invested in optimizing the existing code base in addition to adding new features and functionality. Sure we can’t price out the true cost of Stata’s development in this area, but given the nature of the software I would feel comfortable guessing that to date they’ve invested less in this component of their system compared to the amount of time and effort that you and your colleagues have put into Stan. While JAGS and BUGS may be significantly more mature than Stan (with regards to the software development), comparing a first production iteration of some software (in this case a component of the software) to a program that is several years into development will almost definitely show performance differences that would typically favor the more mature software. To me the bigger “punchline” is how well the program performs compared to JAGS. With regards to cost, that could also be heavily skewed depending on the seniority and/or individual salaries tied to given positions (I’m fairly certain some of my developer friends make a significant amount more than the average developer with similar experience working on statistical software). I would think comparing performance based on the major release numbers might be a bit more normalized given each release would represent what the developers believe to be a stable release with significant updates/upgrades to their software.

Billy,

That’s all fine, but I still stand by the headline of this post. StataStan will definitely improve in the future, and Stata’s native Bayes implementation might improve too. I see no reason for anyone to use a slower, less scalable, and less flexible system. StataStan exists now and does the job.

Hi Andrew,

It wasn’t anything about the headline as much as the punchline reference. I completely agree that there is no reason for anyone to be bound to a less performant system (particularly as compute power is no longer growing at the rate it once did). If I used C/C++ at all I would potentially even try building a more direct interface (http://www.stata.com/plugins), but I know that is well beyond my skillset (although it might be something that Sophia Rabe-Hesketh and the other folks who developed gllamm might consider).

Billy:

I really hope the Stata people do this. I have a lot of respect for Stata users and I’d like them to be able to move into the 21st century when it comes to Bayesian inference.

Hi Andrew,

I agree. I’m orders of magnitude less experienced as a programmer compared to the Stan contributors and developers at Stata and think the integration would broaden the capabilities in general (e.g., provide users with an option to use the current implementation or the Stan implementation in case they want to do their own simulations/comparisons on retrieving parameters with the different algorithms being used, etc…). Would you or any of the other members of the Stan team know if Stan has been successfully compiled using different compilers? The Stata plugins page references a different compiler used for building Windoze-based plugins (the Borland compiler), but not being a voluntary Windoze user I wouldn’t know whether and/or how much of an issue this might be for consistency across platforms; I imagine the R interface is compiled with the gcc compiler packaged with RTools (or something comparable), but figured it might be good to know for us Stata users to be able to make the suggestion with a bit more forethought.

Thanks again,

Billy

Andrew, and Stan crew. You guys are doing a huge huge service to the world by building Stan. I’ve fit a wide variety of complicated models in Stan that I doubt I’d be able to fit any other way. The models are complicated because the situations were complicated, and so I would have had to resort to some kind of heavy approximation otherwise. Even if approximations worked fine, doing the model the way I think it should be done and getting results that I expect is hugely confidence building that I understand what’s going on. Keep it up! the future of good science is at stake!

Can you post any of these models online?

Mostly they’re done as consulting work, so I don’t think so.

But here’s a flavor: A hotel with 7 floors had a fire. There was fire damage and water damage.

Inspectors went to each room and rated the visual assessment of severity of the damages for every room on a 1-4 scale (with some anchor points described to them for consistency).

Then, a random subset of 20 rooms was investigated wall by wall, with samples pulled from the wall. Each wall was rated in each of the 20 rooms. Some data was missing and imputed using a dirichlet model for percentage of area damaged.

Then a cost estimator came up with a cost to repair each degree of damage (ie. a whole room consistently rated 1, 2, 3, or 4) depending on what kind of repair was needed. Then we did a nonlinear regression on the room-wide visual rating against the cost to repair the detailed investigated rooms estimated using the wall by wall ratings and the cost estimates per square foot for a given degree of damage, with measurement error models for the cost estimates and for the visual ratings, and imputation for the missing data where investigators didn’t rate anything. Ceilings were rated separately.

Then we applied the nonlinear regression to predict the cost in each of the other 120 rooms or so based on the room-wide assessment, with model error included. In the end we added up all the costs for each room and collected the total cost number, which was surprisingly stable and used in insurance settlement negotations.

I write it as if this were a serial problem… do this then that then the other thing… but in fact it was one big connected simultaneous Bayesian posterior model for the damages.

It imputed square footages in rooms that hadn’t been seen using a mixture model of an exponential, and a gamma distribution fit to data read off of floorplans. (some rooms had almost no plaster because they were already blown-out as part of a renovation before the fire)…

The problem had measurement error on the 1-4 scale for every room, had measurement error on the 1-4 scale for every wall that was investigated in detail… several parameters for the nonlinear function… imputed values at maybe 10% of the rooms, there were easily say on the order of several hundred parameters and say 300 data points. Running the model took like maybe 10-20 minutes on an old 2007 desktop machine which I’ve since upgraded.

In the end I felt like I could if needed walk into a courtroom and describe how we arrived at the number in a way that juries would believe, as opposed to a kind of hocus-pocus we could do with an approximation (ie. 20 rooms each cost x1,x2,x3…x20 to repair according to cost estimator… so extrapolate the average to the whole building). The fact of the matter was that damages varied spatially a LOT depending on where fire or water occurred so telling a jury that each room had been assessed and some information about that specific room used to estimate a cost would be more believable and in fact probably more accurate.

Did you compare the estimates to the later real costs? If so, how accurate were the estimated costs?

I can’t give details really, but it was more notional costs under a certain model that was required by the insurance contract. In the contract it specified they could recover the cost to return the building to its state prior to the fire, which was never going to happen, as they were in the middle of remodeling during the fire. No one in their right might would first fix it up back to the original condition, and then continue with the remodeling…

Suffice it to say that the “true cost” was always going to be an unobserved parameter under that requirement :-)

If you buy into probability as a generalization of logic, then the advantage here was that the model had the structure of a logical argument in which as much information as possible was taken into account, information for which there was photo-evidence etc, while still preserving realistic uncertainty and calculated in a way that the logical structure would be apparent and followable by a jury. The insurance contract practically requires that you do such a thing.

Bayesian models in forensics make a lot of sense, precisely because they are more or less generalized logical arguments for what the state of knowledge we have implies about the quantitative values of certain things we’d like to know. Which is exactly what is needed.

The logic behind “random sampling guarantees that the average over a sample is rarely very far from the average over the population when the sample gets big enough” doesn’t have the right flavor for a jury. Particularly when the opposing side could say introduce photos of some rooms where practically nothing had happened because they were far from the fire… and then claim that you’re applying an average there in an inappropriate way etc

Interesting, reminds me of work I did on predicting child neglect or more exactly that a judge would find for child neglect and allow the agency to intervene (I was hired by the agency). So _reality_ is less important than generating a shared view of reality.

Also crucial in an adversarial legal system is the ability of competing sides to readily anticipate the likely outcomes and you are suggesting a Bayesian approach helps in that regard.

@Keith. In many ways what I was trying to calculate was a specific counterfactual cost. With counterfactuals there’s never a verifiable “reality”.

There were a variety of ways to go, for example: cost to complete renovation, given the fire, minus cost to complete the renovation if the fire hadn’t occurred…. this difference could be thought of as the “added cost of the fire”. Since there were cost estimates for the pre-fire renovation, we could actually estimate this and then observe the real cost in the end, and see how well the model worked.

BUT: The contract didn’t specify that as the recoverable amount! The recoverable amount was the cost to return to the pre-fire state. When the fire occurs during normal operations, this is a reasonable thing to consider, since you might actually return to the operating state… but when the fire occurs in the middle of a renovation… it makes no sense.

But, and this is key, it’s still the quantity you have to estimate by contract. So, like you say, it’s important to argue that your method for doing the calculation is the one that gives a “good” estimate (ie. a shared model of reality). The logic behind the model is key, as that’s what the jury will have to assess.

Very interesting.

Here’s a different question: Just for fun, have you tried tweaking your model priors / structure to see (just for fun, of course) how much higher of an aggregate damage estimate you could get the model to churn out while still staying reasonable enough that a jury would buy it.

Or maybe, reasonable enough that even a smart statistician could not find fault in it.

A related (non-statistical) point: Does ones duty in legal consulting work allow one / require one to already submit a tweaked model that furthers the best interests of your client (i.e. max settlement monies)? I mean, is a paid consultant for the hotel expected to act differently than an amicus curae. How is a consultant supposed to act in an adversarial legal system?

There are definitely problems with many consultants. Lawyers learn which firms will say what they want for money.

I don’t think that’s a reputation to be desired, but some firms have made a lot of money doing a variation on that. Typically it requires that you very narrowly craft your output, so it’s not technically wrong, it’s just not really relevant to the truth. An example might be to walk around a complex and find where there is water staining, and then test only the windows you found with stains… and then declare that 100% of the windows you tested failed, without revealing how you chose them.

My take on all of it is to do the best job I can of assimilating information into a coherent viewpoint, so that the client knows what can be shown with evidence. I leave it up to the lawyers then to decide what to introduce and what not to. Certainly things I come up with might not be helpful to the client’s point of view. Anything that might help the other side, we are not obligated to do their job for them, to point out what they should have analyzed etc. So typically I think if I discover something that would help the other side the lawyers are free to use that knowledge to steer the arguments away from that stuff… But I don’t alter my conclusions.

As for your question about how easy it is to bias the estimates. What I did was try to put in wide priors that would allow a lot of wiggle room, and then see where the result landed. It always landed about the same place. I didn’t then go in and try putting in priors that were narrowly constructed to help the client’s viewpoint to see if I could force that. I suspect an opposing statistician would easily find such issues because it would require heavy bias.

People did ask questions like: “what if the cost estimator’s estimate to fix a heavily damaged room wasn’t right? What if it was too low by 10% or so?” and the answer usually came back that there was already measurement error built in, so I would expect that kind of change to alter the total cost within the high probability range of the output distribution… in other words, the high probability region already had that kind of smallish errors built it.

And, if I understand the situation, this was pre-trial with prejudice to avoid going to trial if avoiding full combat looked like a better option.

Interesting work, at least if you are working with talented lawyers.

At a jury trial how much of this does a typical jury understand? You can get talented lawyers, but looking at the awareness / education of a typical American, how often do you get a talented jury?

After a fitted IRT model one usually studies plots of item characteristics curves, item information, test information etc.

In guess in Stan you would calculate them by hand. In Stata it is literally 3 lines of code. I guess that’s what the Stata team sees as value added of their implementation *within* Stata…

User:

Indeed, this is the sort of contribution that makes a lot of sense to be in Stata. My point is that the Stata people can easily implement these plots without programming their own, inferior, Bayesian engine. Indeed, it they use StataStan for their engine (which they can do easily and for free), this frees up their resources for more useful features such as plots of item characteristic curves etc.

That’s our idea for Stan (and StataStan, etc): we have a fast, flexible, and scalable program for Bayesian inference which yields posterior simulations that can be useful in a variety of ways. Then the Stata crew can add their value by writing a front end that allows specifications of certain sorts of models in a Stata-like syntax, and they implement things like item information that will be of interest to their users.

Why is it a given that the Stata team’s implementation of a Bayesian engine will be necessarily inferior?

The name bayesmh suggests Stata’s engine is based on a random walk Metropolis-Hastings, so it was very likely that Stan’s HMC sampler would outperform it. Nevertheless is wasn’t a given — that’s why Grant et al. went to the trouble of verifying that Stata’s engine is, in fact, inferior.

More importantly, how would anybody know? Maybe they already use Stan internally but nobody bothered to tell the Stan team, I think the Stan license allows for that)

They’re not that secretive! Check out their doc:

http://www.stata.com/manuals14/bayesbayesmh.pdf

It’d be great if they used Stan internally.

It’s going to be hard to compete with Stan if they’re relying on only MH, they would definitely get more mileage out of joining in on StataStan!

Krzysztof,

And of course they could copy our code and re-implement NUTS and all our autodiff, but what would be the point? It would make more sense for them to use already existing Stan as their engine, and even work with us to make Stan better (which would thus make Stata better, because StataStan) and then devote their efforts to write a translator which would allow users to express Bayesian models in Stata-like syntax which would then run internally in StataStan. A win all around. For Stat to write their own inferior Bayes engine: not such a win.

Andrew: I agree, I’d love to see more company driven user interface efforts. I don’t think they could easily beat Stan in the Bayesian inference niche but they sure could put more effort into the user experience than we can.

Hi Bob,

I got the impression that there is a bit of apprehension to using external source in the company’s code base. I brought up using some of the C/FOTRAN libraries that Brennan and Kolen had developed for linking/scaling as a potential way to get a fast/potentially easier win. I assume that one major reason is that version numbers aren’t quite like a Major.Minor or Major.patch versioning, but probably something more analogous to a Minor.patch versioning. It is fairly rare for any of the existing code base to be broken and they do make significant efforts to ensure backwards compatibility as much as possible (there are still tons of user-written programs that were written for versions 6-8 that I am still able to run without a problem on version 14). So, perhaps some of the apprehension is driven by what it would mean to have an external dependency that could potentially throw off their SDLC? I think pooling efforts is always good and would imagine that contributions would improve things for other users of Stan as well. I’d also imagine that if they were going to do anything, they would most likely want to link to the library and there might be other difficulties with passing/providing the data in a consistent way across the codebase. Just some thoughts – all highly speculative.

It would be a mistake to do a user interface that was generic like “runbayes…” but it could be a lot more transparent if you made your user interface specific to Stan, so “runstan…”. Then warnings could be made about backwards compatibility of future updates of Stan, and soforth.

I read some of these posts with interest. It seems as if the interface between Stata and Stan is assumed to be costless. Maybe it is for skilled programmers, but I have had major problems getting the various bits to work. I posted on as many blogs as I could, and nobody has been able to respond. I certainly respect the time and effort of the Stan folks and the StataScan programmer, but please do not assume that this interface works “out of the box” or even “with some assembly.” I do not doubt the algorithmic power of Stan in comparison to version 1 of the methods implemented in Stata. And indeed I have all of my models and data easily coded to run in Stan, so the Stan and StatStan syntax is elegant as well. But if one cannot literally run the software, then there is no point continually harping on how folks are being dullards and Luddites for sticking with Stata. Again, since blogs get misinterpreted quickly: this is a statement of my limitations, I am sure, at implementing the code behind the code. And I started writing out my own Idiot’s Guide to doing this, but stopped since I could not overcome several issues and could get no guidance (folks are busy, and this is boring stuff, I agree).

Glenn:

If you have any difficulties at all with StataStan, please post on the Stan users group immediately: http://discourse.mc-stan.org . We’d like to know these problems so we can fix them!