Jeff Lax points us to this news article by Carolyn Johnson discussing a research paper, “Firearm legislation and firearm mortality in the USA: a cross-sectional, state-level study,” by Bindu Kalesan, Matthew Mobily, Olivia Keiser, Jeffrey Fagan, and Sandro Galea, that just appeared in the medical journal The Lancet.

Here are the findings from Kalesan et al.’s article:

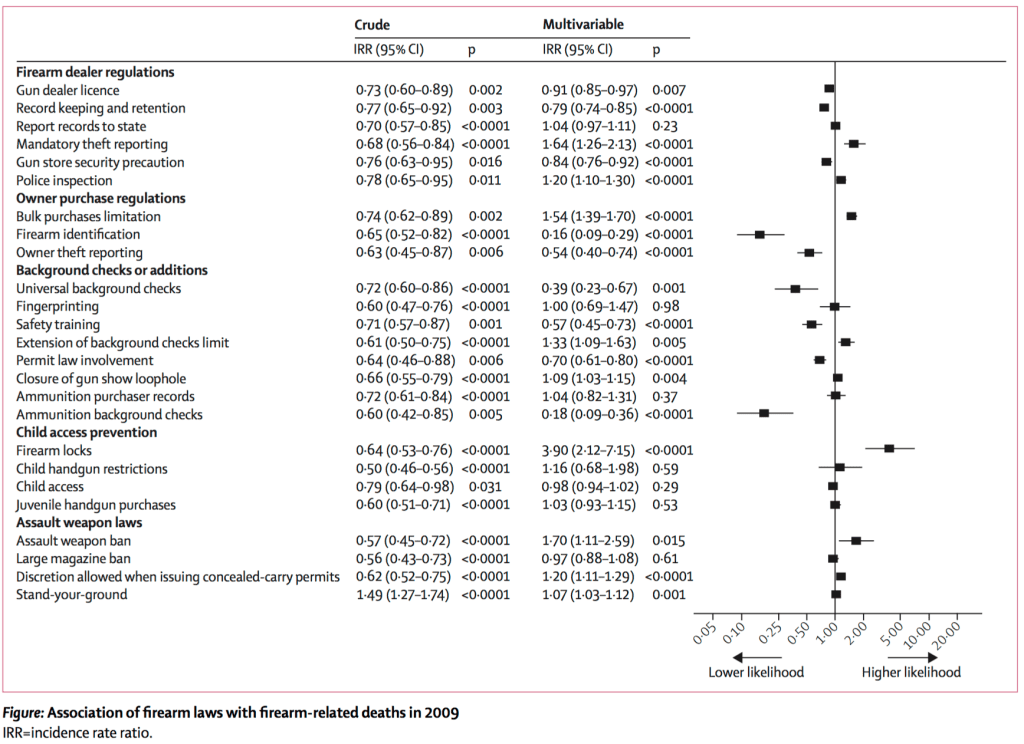

31 672 firearm-related deaths occurred in 2010 in the USA (10·1 per 100 000 people; mean state-specific count 631·5 [SD 629·1]). Of 25 firearm laws, nine were associated with reduced firearm mortality, nine were associated with increased firearm mortality, and seven had an inconclusive association. After adjustment for relevant covariates, the three state laws most strongly associated with reduced overall firearm mortality were universal background checks for firearm purchase (multivariable IRR 0·39 [95% CI 0·23–0·67]; p=0·001), ammunition background checks (0·18 [0·09–0·36]; p<0·0001), and identification requirement for firearms (0·16 [0·09–0·29]; p<0·0001). Projected federal-level implementation of universal background checks for firearm purchase could reduce national firearm mortality from 10·35 to 4·46 deaths per 100 000 people, background checks for ammunition purchase could reduce it to 1·99 per 100 000, and firearm identification to 1·81 per 100 000.

And here’s their key chart:

Johnson queried a couple of gun control experts who expressed skepticism:

“That’s too big — I don’t believe that,” said David Hemenway, a professor of health policy at the Harvard T.H. Chan School of Public Health. “These laws are not that strong . . .”

“Briefly, this is not a credible study and no cause and effect inferences should be made from it,” Daniel Webster, director of the Johns Hopkins Center for Gun Policy & Research wrote in an e-mail.

I credit Johnson for writing a skeptical story and her editor for giving a skeptical headline.

She also got this quote:

“What I find both puzzling and troubling is this very flawed piece of research is published in one of the most prestigious scientific journals around,” Webster said in an interview.

I too find it troubling when a very flawed piece of research is published in a prestigious scientific journal, but at that point I no longer find it puzzling. I accept that scientific journals—especially the most prestigious scientific journals—loooove the publicity. Here’s the Lancet’s home page right now:

As long as the politics are right (which will depend on the publication; what is suitable for the Lancet might be much different from what works in an econ journal) and there’s “p less than .05,” it’s all systems go.

But wait!

But wait one second. Is the Kalesan et al. paper really a “very flawed piece of research”?

Based on the news article and a quick glance at the paper, I’d say yeah, it’s a joke. A regression with 25 predictors and 50 data points? I mean, sure, nothing wrong with looking at the data but please don’t take this as anything more than vaguely suggestive. Just by putting it in a high-profile journal you’re giving the analysis more weight than it can bear.

But then I looked at that author list more carefully . . . Jeffrey Fagan! Sandro Galea! I know those guys! Jeff is a collaborator of mine and, while I’ve never worked with Sandro, he always struck me as a legitimate researcher. Did they really sign off on this? Maybe there’s something I’m missing here. I’ll send them an email and see what they say.

P.S. I could’ve emailed Jeff and Sandro first before posting, but in this case I think it’s fairest for me to post first and ask questions later. Then if it turns out I really did miss something important, my earlier mistake will be out in the open.

“Some of the particulars of the study, however, are less clear. For example, the study found that ballistic fingerprint laws that require bullets to be able to be traced by guns had the third biggest effect on reducing overall firearm deaths and the strongest effect on preventing suicide death. Webster said that those fingerprinting laws aren’t even currently being implemented, raising the question of how they would prevent gun deaths — and particularly in suicides where tracing the bullet to the gun hardly seems like a deterrent. Kalesan said that the laws would result in fewer guns, and said the study wasn’t designed to distinguish how policy contributes to suicide or homicide deaths.”

You beat me to posting that damning quote.

This paper seems to be just brimming with type-M errors (magnitude of effect incorrect).

Perhaps the coefficients were enhanced with rho-gain

http://www.gocomics.com/theargylesweater/2016/03/11

(link to a cartoon)

Not a single regularization or multiple-comparison correction method was used that day.

Do you mean Bonferroni correction?

The Bonferroni method(s) are just one among many methods to adjust for multiple comparisons. There are also the false discovery rate methods. I also mentioned regularization, which is the use of prior information (in the form of a prior distribution or a penalization) designed to avoid over-fitting and occasionally force sparsity in a model.
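To make the two families of adjustment concrete, here is a minimal sketch of the Bonferroni rule and the Benjamini-Hochberg false discovery rate procedure. The p-values are invented for illustration and are not taken from the Kalesan et al. paper; the function names are my own.

```python
import numpy as np

def bonferroni(pvals, alpha=0.05):
    """Reject only where p <= alpha / m (controls the family-wise error rate)."""
    p = np.asarray(pvals, dtype=float)
    return p <= alpha / p.size

def benjamini_hochberg(pvals, alpha=0.05):
    """Benjamini-Hochberg step-up procedure (controls the false discovery rate)."""
    p = np.asarray(pvals, dtype=float)
    m = p.size
    order = np.argsort(p)
    # The k-th smallest p-value is compared with alpha * k / m.
    thresholds = alpha * np.arange(1, m + 1) / m
    passed = p[order] <= thresholds
    reject = np.zeros(m, dtype=bool)
    if passed.any():
        k = np.max(np.nonzero(passed)[0])  # largest rank that meets its threshold
        reject[order[: k + 1]] = True      # reject everything up to that rank
    return reject

# Hypothetical p-values, made up for this example.
example_p = [0.001, 0.008, 0.039, 0.041, 0.042, 0.06, 0.074, 0.205, 0.212, 0.216]

n_unadjusted = int(np.sum(np.array(example_p) < 0.05))
n_bonferroni = int(bonferroni(example_p).sum())
n_bh = int(benjamini_hochberg(example_p).sum())
print(n_unadjusted, n_bonferroni, n_bh)
```

With these made-up numbers, five comparisons clear p < 0.05 unadjusted, two survive Benjamini-Hochberg, and one survives Bonferroni. Regularization is a different tool: it shrinks the coefficients themselves rather than adjusting the p-values afterward.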

At the risk of commenting on an article before reading it, the results strike me as self-refuting. It is not credible that the reported positive associations between some firearm laws (eg, firearm locks) and firearm deaths imply a causal direction from law to death rate. Why then should we believe that the negative associations found for other laws are causal?

You’re not going to address this question in a lab-based RCT (or any other sort of RCT). But it is still important to assess what evidence there is for the effectiveness of legislative policy for a problem like gun-deaths. And they’re not p-hacking or -forking by just reporting the laws with big effects as if they were their a-priori interventions of interest: they give the effects for all the 25 laws they had info on.

It has to be taken as exploratory and tentative, but it is good to report what you can get out of the evidence, if it’s the best evidence available. I’d fault them for not getting much longer time-series and modelling it accordingly, but not for trying.

Brendan:

I do think it’s good they graphed all 25 coefficients. In that way they followed good practice. But that doesn’t mean that anything useful can be learned from these results. We can see how the authors of this paper respond to their critics.

Methods section at the link: They gathered some cross-sectional data, and then they ran a Poisson regression. Um. It’s not worth going deeper, I think, but it sounds like a failure to “cluster one’s standard errors” at the proper level. Here’s my conjecture: I think they did (1) when they should have done (2).

(1) If murders drop from 500 one year to 450 the following year and you assume that murders arise according to a Poisson process with a rate that depends on the state * the current laws, then you’d likely find a very statistically significant drop in the murder rate. You have a lot of data points! 500 in one year and 450 in the next! And the only thing that changed was the law, so you’d pin the difference to the legal change.

(2) But what if there are other state*year effects? What if yearly murder rates go up or down by 10% in a given state all the time even when there aren’t legal changes? You’d want to treat each state-year as a single effective data point, not as 500. (And also control for lots of trends, etc.)

Of course, if you did it that way you’d realize that you only have a couple effective data points per experiment, and that your paper couldn’t possibly tell you anything.
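A rough simulation of the conjecture in (1) versus (2), as a sketch only: it assumes two years of counts around 500 deaths, no effect of any law at all, and 10% year-to-year noise in the underlying rate. The 500 comes from the example above; the noise level, the variable names, and the simple two-sample z-test are my assumptions, not the paper's method.

```python
import numpy as np

rng = np.random.default_rng(0)
n_sims = 20_000
base_rate = 500.0   # expected deaths per year, from the example above
year_sd = 0.10      # assumed 10% year-to-year noise (log scale), no law effect

def false_positive_rate(extra_sd):
    # Each year gets its own rate multiplier; extra_sd=0 is a pure Poisson process.
    rates = base_rate * np.exp(rng.normal(0.0, extra_sd, size=(n_sims, 2)))
    counts = rng.poisson(rates)
    diff = counts[:, 1] - counts[:, 0]
    # Standard error of the difference if you believe the Poisson assumption.
    se = np.sqrt(counts[:, 0] + counts[:, 1])
    return np.mean(np.abs(diff / se) > 1.96)

fpr_poisson = false_positive_rate(0.0)
fpr_overdispersed = false_positive_rate(year_sd)
print(f"nominal 5% test, pure Poisson:        {fpr_poisson:.3f}")
print(f"nominal 5% test, 10% state-year noise: {fpr_overdispersed:.3f}")
```

When the Poisson assumption holds, the test rejects about as often as advertised. Once ordinary state-year variation is added, the same test declares a "significant" change far more often, even though nothing changed. For what it's worth, a single drop from 500 to 450 gives z of about 50/sqrt(950), roughly 1.6, under the Poisson assumption, so the problem bites hardest with larger counts or more years pooled.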

Is this one regression with twenty five predictors and fifty observations or is it twenty five regressions with one predictor each and no multiple comparison adjustment?

Both, I think.

Some kind of mixed model?

It would be interesting to see time series for states with these laws relative to the national rates.

Yes, it would be interesting to see if we could tell the difference in unlabeled time series.

The Swedish chef says, “Mmm, fork, fork.”

It’s astonishing how the invited commentary makes it clear there are major issues in the analysis, and yet it still gets a) through peer-review and b) front-paged on the Lancet site?!

This quote from the news article is interesting:

“But Webster took a different perspective, noting that any research on the effects of gun control policy can be politicized and that a high-profile study that is flawed but in favor of gun control laws could shake people’s faith in the science and fuel critics to question the study’s ultimate conclusion that gun control works. He said he frequently finds himself explaining to policy makers and the public that they should be cautious in accepting research that hasn’t been peer-reviewed and published in a journal.

“What I find both puzzling and troubling is this very flawed piece of research is published in one of the most prestigious scientific journals around,” Webster said in an interview. “Something went awry here, and it harms public trust.””

It looks like the strategy of holding up peer review as a mechanism for revealing the truth has failed.

Did I read this correctly in the figure? According to this article there is almost 4 times higher chance of gun related mortality if there are firearm locks as opposed to no firearm locks? To me this sounds like a huge advance to science. I am going to put my gun in a perfectly visible place in my kid’s room :)

It depends on how the contrast coding was done. The authors probably coded locks present as 0 and locks absent as 1, or -1 and 1 respectively (I hope they used sum-to-zero contrasts). I can’t check in the paper as it is behind a paywall.

Sorry I don’t know anything about US state regulations. Is it possible that firearm locks are a piece of substitute legislation that states enact when they oppose stronger anti-gun laws?

I’m coming from the assumption that I would expect some positive effect from some of these laws (even if not as great as shown) – but the spread of data both positive and negative puzzles me.

Stephen:

No need to be puzzled. A regression with 25 predictors, really it’s going to be impossible to interpret any of these coefficients.
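Here is a small simulation sketch of why the mix of large positive and large negative coefficients is not surprising. It assumes 50 states, 25 independent yes/no laws, and a true effect that is the same small reduction for every law. For simplicity it uses ordinary least squares on a log death rate rather than the paper's Poisson regression, and every number in it is an assumption chosen for illustration.

```python
import numpy as np

rng = np.random.default_rng(1)
n_states, n_laws, n_sims = 50, 25, 1000
true_effect = -0.05   # assumed: each law lowers the log death rate by 0.05 (about 5%)
noise_sd = 0.30       # assumed state-to-state noise on the log scale
t_crit = 2.064        # two-sided 5% critical value, t distribution with 24 df

significant = []
for _ in range(n_sims):
    laws = rng.binomial(1, 0.3, size=(n_states, n_laws)).astype(float)
    X = np.column_stack([np.ones(n_states), laws])     # intercept + 25 law indicators
    y = laws @ np.full(n_laws, true_effect) + rng.normal(0.0, noise_sd, n_states)
    xtx_inv = np.linalg.pinv(X.T @ X)
    beta = xtx_inv @ X.T @ y                           # least-squares estimates
    resid = y - X @ beta
    dof = n_states - X.shape[1]                        # 50 - 26 = 24
    se = np.sqrt(resid @ resid / dof * np.diag(xtx_inv))
    keep = np.abs(beta[1:] / se[1:]) > t_crit          # "statistically significant" laws
    significant.extend(beta[1:][keep])

significant = np.array(significant)
share_significant = significant.size / (n_sims * n_laws)
exaggeration = np.mean(np.abs(significant)) / abs(true_effect)
wrong_sign = np.mean(significant > 0)
print(f"share significant: {share_significant:.3f}")
print(f"average exaggeration of significant estimates: {exaggeration:.1f}x")
print(f"significant estimates with the wrong sign: {wrong_sign:.2f}")
```

Under these assumptions, the estimates that reach significance overstate the true effect several times over, and a noticeable share of them point in the wrong direction, which would look like laws that "increase" deaths.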

“Is it possible that firearm locks are a piece of substitute legislation that states enact when they oppose stronger anti-gun laws?”

Not really.

If you compare the states that have safe storage or firearm lock laws here:

http://kff.org/other/state-indicator/firearms-and-children-legislation/

With the Brady Campaign’s state grades: (higher scores mean stronger gun control laws)

http://www.bradycampaign.org/2013-state-scorecard

You get this list:

Rhode Island B-

Pennsylvania C

Ohio D

New York A-

New Jersey A-

Michigan C

Massachusetts B+

Maryland A-

Illinois B

Connecticut A-

California A-

So most of the states with safe storage or firearm lock laws (8 out of 11) also get a B- or better grade for strength of gun control laws overall.

and if you go by population, just CA, NY, IL are a huge fraction of the US, that full list put together is probably what 50% of population or more?

I’m not sure what makes a researcher “legitimate,” but Galea’s CV speaks for itself. His gifts when it comes to the academic publication contest are extraordinary. Maybe once in a generation. When he travels to give talks, he packs rooms and has people standing outside doors craning their necks to see what he’s saying. On any externally verifiable metric, he’s extraordinarily successful. I gather that “successful” and “legitimate” are not the same thing on this blog, though, because other successful researchers have been singled out by name as not being “legitimate.”

I’d humbly suggest that rather than focusing on individual personalities and discussing whether they are “legitimate” or not, this thought-leading blog might do more to take a systemic perspective, and ask whether what determines “success” and what identifies “legitimacy” are misaligned in the current institutional structure. Then if they are, maybe it’s time for someone with Andrew’s extraordinary eloquence and stature to start advocating for reforms that improve that alignment a bit.

The Lancet article is some of the most ridiculous results-oriented garbage ever to be published in a scientific journal, and more evidence that physicians and medical journals are incompetent or dishonest when it comes to criminology and gun control. The study compared firearms death rates in the 50 states, performing multiple regressions on “29 state-specific independent variables: 25 state gun laws in 2009, rates for non-firearm homicides, gun export, a proxy for household gun ownership, and unemployment rates in 2010.” Somehow they forgot to consider race, even though race all by itself explains over 65% of the states’ variation in homicide rates. But of course, they didn’t forget race, as their article notes “higher firearm mortality rates occurring among black people than white people” – they just chose to pretend that race had no significant effect on state-level variance in homicide rates.

Because they neglected what is probably the most important demographic factor, and because race and region correlate with gun laws, all of their claimed associations between gun control laws and firearm death rates are meaningless. Further, when you perform a multiple regression with so many independent variables relative to the data (25 variables for 50 data points), you make spurious correlations all but certain. These problems are readily apparent when you consider that the study showed a 99.99% chance that requiring firearms trigger locks increases (yes, increases, with P < .0001 and a midpoint increase by a factor of 3.90) firearms deaths – one might think that trigger lock requirements are helpful or that they are insignificant, but this is an absurd result. There is no reason to think that the study’s claims about decreases in deaths due to three other laws are any more real.

Using the 32,000 number for 2010 gun-related deaths indicates that they consider suicide along with the mass killer and the drug cartel. The three groups are about as far apart as you can find. So, when a study combines these groups, it has no possibility of finding reasonable solutions to the issues.

Suicide is certainly an issue that affects many of us and it certainly is more complex than the rest. But in any case, not sure that “laws” should be involved in the understanding of suicide.

The mass killer is another group not really affected by any such law in that they have a plan, spend months or years obtaining the materials and have usually only one short violent episode. So, without prior bad acts, it is very difficult to stop prior to the first bad action. They also have a wide variety of other methods that do not involve firearms.

For the “violent criminals”, there are lots of law issues where changes in law could make a big difference in the threat to the law abiding person from this group. However, my opinion is that laws that limit firearms do not affect this group much and certainly can be shown to create more helpless victims.