After three years, we finally have an updated version of our “EP as a way of life” paper. Authors are Andrew Gelman, Aki Vehtari, Pasi Jylänki, Tuomas Sivula, Dustin Tran, Swupnil Sahai, Paul Blomstedt, John Cunningham, David Schiminovich, and Christian Robert. Aki deserves credit for putting this all together into a coherent whole.

Here’s the abstract:

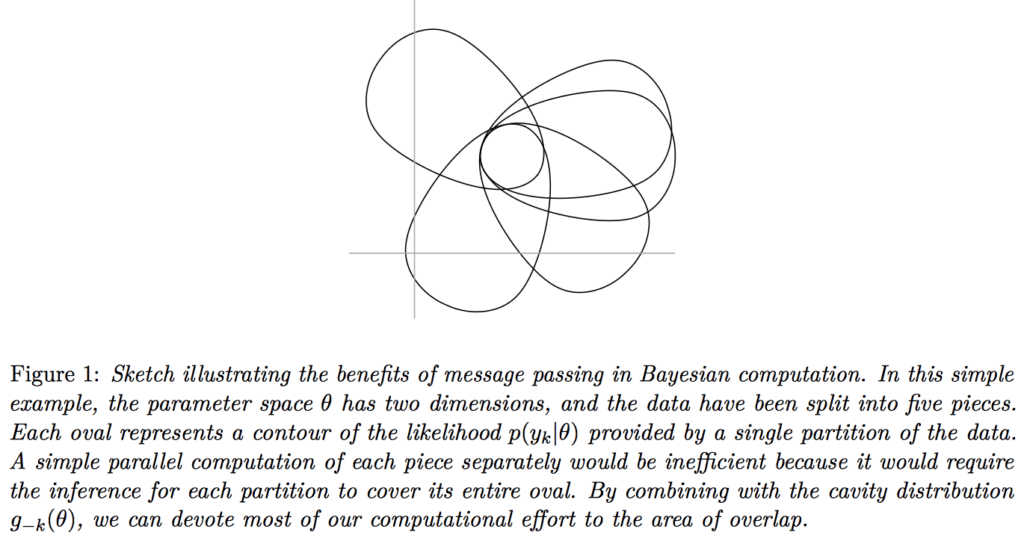

A common approach for Bayesian computation with big data is to partition the data into smaller pieces, perform local inference for each piece separately, and finally combine the results to obtain an approximation to the global posterior. Looking at this from the bottom up, one can perform separate analyses on individual sources of data and then want to combine these in a larger Bayesian model. In either case, the idea of distributed modeling and inference has both conceptual and computational appeal, but from the Bayesian perspective there is no general way of handling the prior distribution: if the prior is included in each separate inference, it will be multiply-counted when the inferences are combined; but if the prior is itself divided into pieces, it may not provide enough regularization for each separate computation, thus eliminating one of the key advantages of Bayesian methods.

To resolve this dilemma, expectation propagation (EP) has been proposed as a prototype for distributed Bayesian inference. The central idea is to factor the likelihood according to the data partitions, and to iteratively combine each factor with an approximate model of the prior and all other parts of the data, thus producing an overall approximation to the global posterior at convergence.

In this paper, we give an introduction to EP and an overview of some recent developments of the method, with particular emphasis on its use in combining inferences from partitioned data. In addition to distributed modeling of large datasets, our unified treatment also includes hierarchical modeling of data with a naturally partitioned structure.

The two graphs at the top of this post indicate the basic idea, and this graph shows the wall-time win:

P.S. Tian was aware that I was going to post on this paper so she sent along this image which illustrates the divide-and-conquer principle:

That last figure violates Gelman Principle 1, which is to always have an italic caption. What’s a site? How many processors? Are they all on the same board are are they distributed? And what is the computation whose time is being measured?

A link to a list of all the Gelman Principles please.

I don’t think Andrew keeps a list. If he did, most of the entries would be about tick marks, spacing, and putting labels on lines rather than in a legend.

I did glance through the paper and realize that they use “sites” for the factors in the density. The sites can be parallelized. Each site’s computation is expensive, requiring a sample to be drawn from the tilted distribution using MCMC). So I think the graph assumes that you have 30 cores, one for each site.

One issue they note arises with increasing number of sites (smaller mini-batches of data, usually) is that the approximations suffer. In other words, the increased parallelism comes at the cost of reduced accuracy. What’s not clear with these approximate methods is how much accuracy is lost and under what conditions and whether there are biases to watch out for.

We use sites to distinguish from factors in a density. For example, in the top-down view of scaling to large (exchangeable) data, there might be N factors, one for each data point. You would do computations over K sites which partition the N factors in various ways. In the bottom-up view, each site might correspond to a group of factors analyzing one data set.

The paper says on page 3: The factors $latex f_k(\theta)$ together with the associated approximations $latex g_k(\theta)$ are called sites.

The confusion arises because “factor” is ambiguous—each site-factor $latex f_k(\theta)$ in the density might be made up of a product of lower-level factors (e.g., the site $latex k$ might cover a minibatch of data, each contributing a factor to the overall density).

I agree. (If you read the literature it’s even more confusing :)

They’re British Blue Shorthairs. The cutest breed ever!