As I wrote a couple years ago:

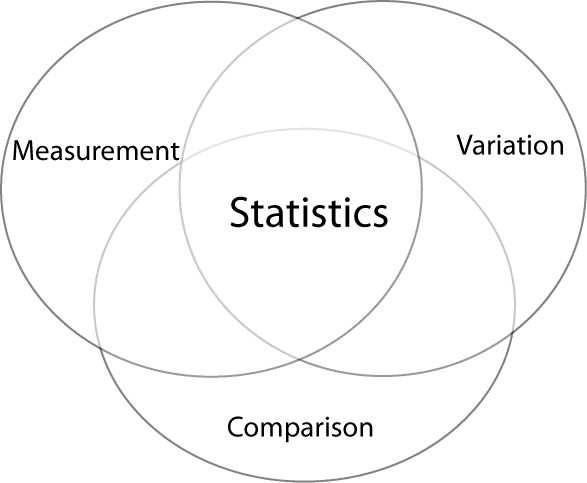

Statistics does not require randomness. The three essential elements of statistics are measurement, comparison, and variation. Randomness is one way to supply variation, and it’s one way to model variation, but it’s not necessary. Nor is it necessary to have “true” randomness (of the dice-throwing or urn-sampling variety) in order to have a useful probability model.

For my money, the #1 neglected topic in statistics is measurement.

In most statistics texts that I’ve seen, there’s a lot on data analysis and some stuff on data collection—sampling, random assignment, and so forth—but nothing at all on measurement. Nothing on reliability and validity but, even more than that, nothing on the concept of measurement, the idea of considering the connection between the data you gather and the underlying object of your study.

It’s funny: the data model (the “likelihood”) is central to much of the theory and practice of statistics, but the steps that are required to make this work—the steps of measurement and assessment of measurements—are hidden.

When it comes to the question of how to take a sample or how to randomize, or the issues that arise (nonresponse, spillovers, selection, etc.) that interfere with the model, statistics textbooks take the practical issues seriously—even an intro statistics book will discuss topics such as blinding in experiments and self-selection in surveys. But when it comes to measurement, there’s silence, just an implicit assumption that the measurement is what it is, that it’s valid and that it’s as reliable as it needs to be.

Bad things happen when we don’t think seriously about measurement

And then what happens? Bad, bad things.

In education—even statistics education—we don’t go to the trouble of accurately measuring what students learn. Why? Part of it is surely that measurement takes effort, and we have other demands on our time. But it’s more than that. I think a large part is that we don’t carefully think about evaluation as a measurement issue and we’re not clear on what we want students to learn and how we can measure this. Sure, we have vague ideas, but nothing precise. In other aspects of statistics we aim for precision, but when it comes to measurement, we turn off our statistics brain. And I think this is happening, in part, because the topic of measurement is tucked away in an obscure corner of statistics and is then forgotten.

And in research too, we see big problems. Consider all those “power = .06” experiments, these “Psychological Science”-style papers we’ve been talking so much about in recent years. A common thread in these studies is sloppy, noisy, biased measurement. Just a lack of seriousness about measurement and, in particular, a resistance to the sort of within-subject designs which much more directly measure the within-person variation that is often of interest in such studies.

Measurement, measurement, measurement. It’s central to statistics. It’s central to how we learn about the world.

AG: “…but, even more than that, nothing on the concept of measurement, the idea of considering the connection between the data you gather and the underlying object of your study.”

I recently came across a 1996 JRSS A article* by David Hand entitled “Statistics and the Theory of Measurement” that I’ve been trying to digest. In it, he reviews three different theories of the connection between the data gathered and the underlying object of study (what he calls representational, operational, and classical measurement theories). Do you see one of these (or some other) concepts of measurement as being most useful in the sorts of applications you work on?

* http://www.lps.uci.edu/~johnsonk/CLASSES/MeasurementTheory/Hand1996.StatisticsAndTheTheoryOfMeasurement.pdf

The superb book by DJ Hand, “Measurement: Theory and Practice”, sits on the shelf above my desk, so that I can easily grab it. For my money, the most important topic not taught in statistics classes is how to deal with real data, i.e., data that are dirty, have missing values, or are not in the right form. But I suppose that’s a subset of measurement. Has there been anything on this topic since Pyle’s 15-year-old book “Data Preparation for Data Mining”?

A big part of data prep for me was most efficiently done outside conventional “stat” tools, e.g., with sed, awk, and Linux commands like cut and tr.

I can suggest a wonderful article by Hadley Wickham in the Journal of Statistical Software, where he lays out the principles of tidy data:

http://www.jstatsoft.org/v59/i10

Yes indeed. I have worked on projects where the final analysis was essentially trivial in relation to cleaning the data and I don’t remember anything in my courses about this, other than perhaps looking for outliers.

Another serious problem is localism (provincialism) in the original data collection.

I recently saw a question about how to format telephone numbers in a spreadsheet. It sounds simple until you go international, and then it can get messy:

Times, London

General queries: Telephone: 020-7782 5000

Le Figaro, Paris

No idea what department

Tel : 01 57 08 73 00

Pravda (English) Moscow

+ 7 (499) 641-41-69

Globe and Mail, Toronto

General contact 1-416-585-5000

The Irish News, Belfast

No idea what department

+44 28 9032 2226

The Boston Globe

617 929 2000

What I learnt the hard way is that it’s far easier to combine entities than to split an aggregate text entity into its sub-parts later.
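A minimal Python sketch of that lesson, assuming each number is captured as separate fields at collection time (the `PhoneNumber` record and its field names are hypothetical). Combining the parts into one international string is a one-liner; going the other way, the first chunk of the free-text examples above is a country code in one string and an area code in another.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class PhoneNumber:
    """Hypothetical record: keep the parts separate when the data are collected."""
    country_code: str      # e.g. "44" for the UK
    national_number: str   # assumed stored WITHOUT a domestic trunk prefix (e.g. UK leading 0)

    def digits(self) -> str:
        # Drop spaces, dashes, and parentheses from the national part.
        return "".join(ch for ch in self.national_number if ch.isdigit())

    def international(self) -> str:
        # Combining is trivial: "+" + country code + national digits.
        return f"+{self.country_code}{self.digits()}"


irish_news = PhoneNumber("44", "28 9032 2226")
print(irish_news.international())  # +442890322226

# Splitting free text is where it gets messy: a naive "first chunk" rule
# yields a country code for one string and an area code for another.
for raw in ["1-416-585-5000", "617 929 2000", "+44 28 9032 2226"]:
    first_chunk = raw.replace("-", " ").split()[0]
    print(raw, "->", first_chunk)
```

Stripping a trunk prefix such as the UK leading 0 (as in 020-7782 5000) is exactly the kind of country-specific rule that makes splitting hard, which is why the sketch assumes it has already been removed.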

I wrote this; what a crazy festival of parsing: https://github.com/joomla/joomla-cms/blob/master/libraries/joomla/form/form.php#L1390

This book: http://www.amazon.com/Measurement-Theory-Practice-Through-Quantification/dp/0470685670/ref=sr_1_cc_1?s=aps&ie=UTF8&qid=1430247951&sr=1-1-catcorr&keywords=Measurement%3A+Theory+and+Practice+hand ?

Thank you! I can’t express strongly enough how gratifying it is to see posts like this. The detachment of statistical analyses from the question of measurement, which I see as treating any and every number as “data” regardless of how idiotic that may be, has been one of the most destructive forces in modern science and society. By ignoring the fundamental questions of measurement, we fall into the trap of pontificating and world building about subjects we can’t even hope to understand.

Can someone elaborate on the sort of things statistics contributes to measurement?

I always thought of measurement as super important and neglected, but I tended to focus on the nitty-gritty of the physics, electronics, and instrumentation involved, e.g., reducing sensor noise, calibration, instrument drift, non-linearity, least counts, amplification, zeroing against a real-time control, repeatability, traceability of standards, etc.

Statisticians are used to dealing with summary statistics of data, but every “measurement” is really a summary statistic for the Universe.

Not sure this answers Rahul’s question…but I agree (see also below).

After reading the comments: is the vagueness and lack of precision more of a measurement issue, or a broader social-science malady, something that isn’t measurement-specific but endemic to the social sciences?

Chemists, engineers, physicists, etc. already seem to devote a lot of effort to measurement.

What I’d love to hear about is whether there are statistical issues in measurement that the physical sciences are systematically ignoring or getting seriously wrong.

For the most part, it’s the other way around. Measurement in the hard sciences is a much more serious topic, albeit a more hidden one. For example, I have an acquaintance whose PhD in physics involved calibrating a camera (a big one to take nano-level pictures), and he now has a (tenured) job helping with research that uses that instrument.

In many cases, it’s obvious in the sciences that you would want measurements that are as accurate as possible, and measurements that tie in with other measurements as neatly as possible (e.g., E = mc^2 only works if you measure E, m, and c in consistent units; otherwise you’d need extra conversion constants in the equation).

In the business world, there are usually straightforward metrics such as “sales”, and those defined by operational definitions (GAAP) such as profit. These help anchor the fuzzier aspects of measurement in business contexts.

Not so quick about the business metrics! “Sales” is not straightforwardly measured. When revenues can or cannot be recognized is only one of many subjective decisions. There are lots of games being played despite the existence of GAAP rules; channel stuffing is a very simple one.

Even counting subscribers is not as accurate as one thinks, if we are talking about millions of online accounts, for example.

Kaiser is right. Business schools give an entire department over to measurement: Accounting. It’s intellectually serious; how to define Profit or even Sales is by no means straightforward (what if you haven’t received the client’s money yet, but it’s promised to you? what if the contract is for delivery over several years on demand, with no timing specified?). And it employs lots of people, including auditors who go and physically check whether assets on the books really exist.

As measurements can be, and often are, constructed from other measurements, one can also turn this around and say that a summary statistic is really a measurement.

This opens the door to thinking about some aspects of summary statistics that are not normally discussed. Consider for example the decision whether to summarise a sample by using the mean or the median. Statisticians could associate these with the normal vs. the Laplace (double exponential) distribution, perhaps with the central limit theorem, and could come up with robustness considerations. From the point of view of the theory of measurement (Stevens style), one would probably note first that the mean is not admissible for ordinal data whereas the median is. Another neglected issue, however, is how well mean and median work as “measurements” of a sample or distribution location, which depends on the use that is made of this measurement. From this perspective, one argument for the mean is that it is directly connected to the sum, and the sum may be of direct importance (such as the average amount people spend in a supermarket, which is directly related to what the supermarket takes in); on the other hand, the mean as a summary of a skewed income distribution may not be seen as suitable if it is dominated by a small number of rich people.
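The skewed-income point can be seen with a few numbers. The incomes below are hypothetical (thousands per year): seven modest earners and one very rich person. The mean is pulled far above what a typical member earns, yet it still recovers the total exactly, which is the property that matters when the sum itself is of interest.

```python
import statistics

# Hypothetical annual incomes in thousands: seven modest earners, one very rich.
incomes = [20, 25, 30, 35, 40, 45, 50, 1000]

mean = statistics.mean(incomes)      # 155.625
median = statistics.median(incomes)  # 37.5 (average of 35 and 40)

# The mean is tied to the total: mean * n gives back the sum,
# which is what a supermarket cares about when summing customer spending.
total = sum(incomes)
print(mean, median, total, mean * len(incomes))

# Without the rich person, mean and median both equal 35:
# one value moved the mean by over 120 but the median by only 2.5.
without_top = incomes[:-1]
print(statistics.mean(without_top), statistics.median(without_top))
```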

Just to add some thoughts to what you’re saying here, as well as to some other comments: there are two general issues, (A) bad measurements and (B) measuring the wrong things. In the long run (B) is many orders of magnitude more important than (A). My comment about every measurement being a “statistic” of the universe was really about (B). Two key questions are related to what Hennig is saying:

(1) Given data x_1,…,x_n, why is the mean usually a better statistic than say f(x_1,…,x_n)=x_3 ?

(2) Even when the mean is a good statistic to use why do we sometimes need to supplement it with additional statistics?

The answers to those questions are directly relevant to why some things are better to measure than others and why we sometimes need additional measures even when we’re already measuring something important.
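Question (1) has a textbook answer under one simple assumption, sketched here: if the x_i are independent measurements with a common variance σ², the mean has variance σ²/n while the single value x_3 keeps the full σ². The simulation below assumes normal noise with σ = 1 and n = 10; those choices are illustrative, not from the comment.

```python
import random
import statistics

random.seed(1)
n, reps = 10, 5000

means, thirds = [], []
for _ in range(reps):
    # Assumed setup: n i.i.d. measurements of a true value 0 with noise sd 1.
    x = [random.gauss(0.0, 1.0) for _ in range(n)]
    means.append(statistics.fmean(x))
    thirds.append(x[2])  # x_3 in the comment's 1-based notation

var_mean = statistics.pvariance(means)    # should be close to 1/n = 0.1
var_third = statistics.pvariance(thirds)  # should be close to 1
print(var_mean, var_third)
```

The assumption is doing the work: if the measurements share a common bias, or x_3 is the only one taken with a good instrument, the comparison can change, which is one route into question (2).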

zbicyclist brought up the example of the business world and the use of GAAP in accounting. This illustrates my original comment in a particularly direct way. All accounting measures such as “EBITDA” are literally “statistics” of the raw accounting data, but they are also measures used to understand, predict, and control the world.

In practice, it’s very difficult to choose the right accounting measures; they change over time and can change radically depending on the type of business or what is going on. If you give two analysts the same accounting data, they will typically produce different measures with different values.

Note that firms generally have two accounting systems. One they use for tax and regulatory purposes, which is filled with lots of arbitrary conventions and rules (GAAP). The other they use internally, so that senior management can make key decisions and understand what’s really going on with the company. The latter is trying to “measure” things in a useful way and in a sense is a more “accurate” reflection of the state of the world.

1) Reliability and validity are sometimes formalised in statistical terms, i.e., as correlations between different measurements of the same thing, or between measurement and “truth”, and it is therefore a statistical issue to estimate them (although this general approach to these concepts can be controversial). In any case, statistics plays a role in assessing and calibrating measurements.

2) If measurements are to be analysed using statistical methodology, not only can measurement issues (such as whether measurements are ordinal or of limited value range) inform the choice of statistical method, but knowledge of the statistics to be applied can also inform measurement; e.g., proneness to outliers can often be avoided to some extent by the design of measurement, and some measurement procedures introduce unnecessary dependence between different measurements, which could be avoided. Also, it is often an important issue to what extent one should attempt to produce an interval or ratio scale, which is good for much statistical methodology but may not be appropriate for the measured phenomenon and/or may introduce artifacts.

3) As mentioned elsewhere in this thread already, a big issue in the interplay between statistics and measurement is whether the measurement is suitable for addressing the issue the researcher is interested in, and for supporting the conclusions that researchers would like to draw from the outcome of statistical methods. I have seen lots of situations in which I was asked to help with analysing data, but where my advice was that, to address the research question, one would rather need other data, i.e., other measurements.

In my field measurement is certainly often ignored in beginner classes but quite often honours or graduate classes will go into it extensively because there’s a substantial ongoing effort to figure out how to measure psychological constructs.

To me the ignored topic is a little narrower, though certainly associated with measurement: representativeness. It’s the core unstated assumption of everything, and yet it is very rarely questioned. We discuss several issues that can certainly affect representativeness, but representativeness on its own isn’t well examined or discussed, maybe because it’s usually so bad that it’s embarrassing. I think this links directly to Andrew’s criticism of survey researchers and their insistence on “random” sampling.

Understanding what you ‘want’ to measure is almost a bigger problem than measurement. I’m dealing with it for infectious disease, but I have some contact with education as well. The question of what an ‘educated person’ can do that an ‘uneducated person’ cannot is still unanswered. Until there is some sort of consensus answer to that question, measurement is not yet the challenge. In medicine, the equivalent question is “what is health?” or “what is quality of life?”

I think that problem often arises in academia because we have a propensity to produce answers in search of a question.

Psychology has a rich history of serious research and thinking about measurement. But not everyone in our field knows much about it.

I don’t think there’s a single reason why. Part of it is training – in many departments you can get through your requirements with just a course in regression and ANOVA, no measurement.

Part of it is that people equate “theory” with positing relationships among psychological constructs (though they wouldn’t use that word), and consider that to be the more prestigious activity. They think measurement is not theoretical (because they haven’t had to read Meehl), and therefore it’s boring or grunt work.

And I suspect that an underappreciated factor is that much of the classic work in measurement used words like “test” and “item” because of the kinds of applied problems that psychometricians historically worked on. So even though the fundamental principles of reliability, validity, etc. apply broadly, people get stuck on the language and don’t realize that they apply to what they’re doing.

P.S. For the psychologists following along, a friend shared this paper by Leona Aiken et al. documenting how PhD programs cover quantitative methods. Measurement is far from universally offered, and few departments require it. It is covered less often at “elite” programs than in the overall sample. The following quote stands out:

“An analysis of competencies in measurement raises grave concern about the most fundamental issues for adequate measurement in psychological research (see Table 7). Fewer than half of the respondents judged that most of their students could assess the reliability of their measures; only one fourth of respondents judged that most of their students could utilize methods of validity assessment. Only one fourth of respondents indicated that most of their students were competent at test construction or item analysis.”

http://psycnet.apa.org/journals/amp/63/1/32/
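The quote singles out assessing reliability and item analysis. As one concrete example of such an assessment (not something the Aiken et al. paper prescribes), here is a minimal Python sketch of Cronbach's alpha, a common internal-consistency index for a k-item scale: alpha = k/(k-1) * (1 - sum of item variances / variance of total scores). The 3-item, 4-respondent data set is hypothetical, and alpha has well-known limitations (it assumes items measure the same thing about equally well).

```python
import statistics


def cronbach_alpha(responses):
    """responses: one row per respondent, one column per item."""
    k = len(responses[0])
    items = list(zip(*responses))  # transpose rows into item columns
    item_vars = [statistics.variance(col) for col in items]
    totals = [sum(row) for row in responses]
    total_var = statistics.variance(totals)
    return k / (k - 1) * (1 - sum(item_vars) / total_var)


# Hypothetical 3-item scale answered by 4 respondents.
data = [
    [1, 2, 2],
    [2, 2, 3],
    [3, 4, 3],
    [4, 4, 4],
]
print(round(cronbach_alpha(data), 3))  # 0.931
```

Here the item variances sum to 11/3 and the total-score variance is 29/3, so alpha is 1.5 * (1 - 11/29) = 27/29.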

And this is doing pretty well compared to the forensic sciences [sic] which usually don’t have any believable studies of reliability or validity.

Or when they do, they are often incompetently applied, probably due to inadequately trained personnel or, in some bizarre situations, a need for money.

Heck, the last time I checked, fingerprint analysis had a zero error rate.

The US NRC study on the polygraph makes it sound like the Keystone Kops are running the joint. The tone of bemused disbelief that runs through the report would be funny if it were not so serious.

How does external validity (transportability / generalisability) fit into this framework?

It seems like outside of the (rare?) cases where we’re dealing with random samples from a well defined population, then the issue is often dealt with informally by researchers, and textbooks don’t offer too much guidance.

Is this because generalisation is too closely tied to domain-specific knowledge of the problem under investigation, and so isn’t seen as part of statistics proper?

Brian:

External validity is part of measurement too! We talk about reliability and validity. On the research end of things, I like to handle this using multilevel models: the idea is that there’s some parameter vector “delta” that captures the differences between populations, and delta gets a prior distribution etc.
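One simple way to write that idea down, as a sketch that assumes normal distributions throughout (the reply only says delta gets a prior): population j has its own parameter θ_j, differing from a common θ_0 by δ_j, and the estimate from each population is observed with its own noise σ_j.

```latex
\begin{aligned}
\hat\theta_j \mid \theta_j &\sim \mathrm{N}(\theta_j, \sigma_j^2), \\
\theta_j &= \theta_0 + \delta_j, \qquad \delta_j \sim \mathrm{N}(0, \tau^2), \\
\mathrm{E}\left[\theta_j \mid \hat\theta_j, \theta_0, \tau\right]
  &= \frac{\hat\theta_j / \sigma_j^2 + \theta_0 / \tau^2}{1/\sigma_j^2 + 1/\tau^2}.
\end{aligned}
```

The spread τ is what carries the external-validity question: when τ is small the populations are nearly interchangeable and results transfer well, and when τ is large a new population's parameter, θ_new = θ_0 + δ_new, is uncertain no matter how precisely the studied populations were measured.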

Great idea for a post!

I agree about the importance of considering measurement problems. We should point out that focusing on measurement errors and issues hopefully won’t discourage people from trying to measure things. I’ve been thinking a lot lately about cheating in college courses, and I really believe if we could just start measuring the extent of the problem in some reasonably intelligent way, it would be a great first step toward its solution. Do you have any thoughts about cheating? I’d love to hear them. My sense is that the University has an incentive to ignore the subject entirely and pretend it doesn’t exist, and individual professors definitely have strong incentives to ignore cheating because it takes an incredible amount of time and effort to prevent and there is no reward for the professor at all. So, we all pretend it is not a big problem and not that widespread. I think we should be surveying students about it, or somehow measuring it some way. And if we could measure it, then we could reward professors or departments for decreasing it.

Changing the subject, Wing Wong is speaking at UCLA today and I plan on quoting you, Andrew, though I’m sure you don’t remember saying it. When I got my job at UCLA you congratulated me and said something like “UCLA is a great department! Actually the only person I know there is Wing Wong, but any department with Wing Wong is automatically a great department.”

I love this post. However, I have a different explanation for this than “measurement being tucked away.” Personality psychology has a long history of being concerned about measurement. In many departments, personality psychologists used to teach measurement courses (a few still do). However, the war against personality, beginning around 1968, pretty much killed off personality psychologists (and their programs). Find me a department that takes personality seriously and I will find you a department that cares about measurement. Hint: there aren’t very many.

Ryne,

Do you think it is that psych-stats teachers used to assume measurement was covered elsewhere? Or that they just didn’t teach it because they didn’t feel it was part of psych-stats?

Dan

Dan,

I’m not sure I can answer your question. I believe that, for political reasons, personality psychology (meaning the people who study it seriously) was eliminated from training in psychology. It points to internal (inside the person) causes for human behavior, and some people are uncomfortable with that.

-Ryne

I agree. I did my psych training in the UK, and certainly people’s views there on Hans Eysenck were quite polarizing (and for lots of reasons often unconnected with his views on personality).

Couldn’t agree more with this post. ‘Measurement’ is one of those concepts/topics that’s sneakily very subtle, and for me it has slowly moved from being implicit in a lot of how I think about problems to being much more explicit. As might be expected, much of the best thinking about this seems to me to come from the physics literature, and it is probably one major difference between, say, the culture of physics and that of applied math (and stats?). I think this might be because it’s both quite abstract and very concrete and gets at some core issues of what a ‘theory’ or model is. PS I’m not a physicist! And no stereotypes to be taken too seriously…

I understand (hear) that on the theory side, psychometricians are like 5 decades ahead of the rest of us in terms of measurement in the social sciences – even if some of that has been forgotten/ignored. But I’d say the Public Health people take (at least one aspect of) measurement seriously. From a recent methods section on maternal and infant health:

“All anthropometrists were trained and methods were standardized at the beginning of data collection and thereafter periodically using methods described by WHO (20). At each of the … visits, trained anthropometrists measured maternal weight to the nearest 0·1 kg … height to the nearest 0.1 cm …, and mid upper arm circumference (MUAC) to the nearest 0.1cm… Following delivery (at home or hospital), anthropometrists specially trained for newborn anthropometry measured birth weight to the nearest 0.005 kg … length to the nearest 0.1 cm … and head circumference and MUAC to the nearest 0.1 cm.”

…Now that said, this helps not even a little bit with interpreting statistical analyses of these measures. I’ve seen people try to interpret the estimates of the effects of current food insecurity on child height, but child height can’t actually be affected by something that is very recent (kids don’t shrink). So I guess I’d say there are two big points in measurement: 1) measure the thing you are measuring accurately; 2) understand what exactly the thing you are measuring is measuring (in terms of what it means in the real world). I think the first one is what most people think of as the statistical issue of measurement, but I think the second one is equally important and also a “statistical” issue.

Yes, both (1) and (2) are important — GIGO.

But also, (1) is not always possible — so discussing the degree of inaccuracy is also important; then that uncertainty needs to be propagated in thinking about uncertainty in results. (e.g., confidence intervals don’t take into account uncertainty in measurement).

“confidence intervals don’t take into account uncertainty in measurement”

They could though. I’m not saying people do it regularly, but they could. Cue “Stan can do that in…”

In contrast, my limited experience is that the biostatisticians who run the analysis will in fact correct point estimates for imperfect measurement (for example by adjusting by .005 if .01 is the accuracy). I think they do this because it is fiddling with observations directly (“correcting” them) and not specifying the statistical model. “Correcting” observations and “cleaning” data involve choices people have learned they have to make just to get to the statistical analysis. Whether or not to employ a new statistical technique your non-statistician colleagues don’t already know (and one likely to make your confidence intervals larger) is a choice that is very easy to punt on just by doing what you have (and everyone has) always done.

Confidence intervals don’t take into account measurement uncertainty because that’s not their job, but unfortunately a lot of people end up looking at a confidence interval and interpreting it as an estimate of measurement error.
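A small simulation illustrates one side of this, under assumed numbers: random measurement error inflates the spread of the data and so is partly reflected in an ordinary interval, but a systematic error shared by every measurement is invisible to it. Here every reading is assumed to be 0.5 units too high; with 2000 observations the interval is narrow and confidently excludes the true value.

```python
import math
import random
import statistics

random.seed(3)
true_value = 50.0  # hypothetical true population mean
bias = 0.5         # hypothetical systematic error shared by every measurement
noise_sd = 2.0     # person-to-person spread plus random measurement error
n = 2000

measurements = [true_value + bias + random.gauss(0.0, noise_sd) for _ in range(n)]

mean = statistics.fmean(measurements)
se = statistics.stdev(measurements) / math.sqrt(n)
low, high = mean - 1.96 * se, mean + 1.96 * se

# The interval is centred near 50.5 and only about 0.18 wide, so it misses 50.
covers = low <= true_value <= high
print(f"95% CI: ({low:.2f}, {high:.2f}); contains truth: {covers}")
```

Collecting more data only makes the interval narrower around the wrong value; the bias has to be handled by calibration or by modelling it explicitly.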

Pull out a flexible tape measure and try it, then tell me that you really can measure someone’s bicep to the nearest 0.1 cm (what a stupid way to say 1 mm). Head circumference is maybe a little easier, since the skull is pretty rigid, but still the point is even though people say their measurements are accurate to 1mm it doesn’t mean you shouldn’t assign a measurement uncertainty of say 5mm.
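The gap between "recorded to the nearest 1 mm" and "uncertain to about 5 mm" can be sketched as a small uncertainty budget. Two assumptions: rounding to a grid of width w contributes an error roughly uniform on [-w/2, w/2], with standard deviation w/√12, and the 5 mm figure suggested above is treated as an independent standard uncertainty from tape placement. Independent components combine in quadrature.

```python
import math

resolution_cm = 0.1    # readings recorded "to the nearest 0.1 cm"
placement_sd_cm = 0.5  # assumed tape-placement uncertainty (the "say 5mm" above)

# Rounding error: roughly uniform on [-w/2, w/2], standard deviation w / sqrt(12).
rounding_sd_cm = resolution_cm / math.sqrt(12)

# Independent components combine as the root sum of squares.
combined_sd_cm = math.sqrt(rounding_sd_cm**2 + placement_sd_cm**2)
print(f"rounding: {rounding_sd_cm:.3f} cm, combined: {combined_sd_cm:.3f} cm")
```

The reading resolution contributes about 0.03 cm and barely moves the total (about 0.50 cm), which is the point: the stated precision of the recording says little about the uncertainty of the measurement.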

Also, you might think “weight to the nearest 0.1kg” would be unambiguous, you strip off all your clothes and stand on an accurate scale and read off 78.2 kg right?

1) *Weight* is the mass, measured in kg, times the local gravitational acceleration, which is unknown and varies from place to place on the face of the earth from about 9.78 to 9.83 m/s^2; in other words, just not knowing the g value makes your measurement less accurate than 0.1 kg for human-scale measurements. http://en.wikipedia.org/wiki/Gravity_of_Earth … The gravitational acceleration varies systematically with, say, latitude, which in turn varies systematically on average with, say, income (tropical regions have lower incomes on average, for example). So the fact that these people don’t even know the difference between a kg and a Newton shows us something about how measurement has a lot of subtleties.

2) Getting at your (2) from the previous post: what does it even mean for *a person* to weigh something? How much did the person eat last night, defecate this morning, sweat, drink? When did they last cut their hair or fingernails? Shave their legs? In other words, what constitutes “a person”? While shaving your legs probably has no impact on measurements of interest to public health studies of overall growth and health, certainly when and how large your last meal was has something to do with it!
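The arithmetic behind point 1 can be checked directly, under an assumption the comment does not state: the scale senses force and converts it to kilograms using standard gravity (9.80665 m/s^2) rather than being calibrated on site. A beam balance, which compares against reference masses, would not be affected this way.

```python
G_STANDARD = 9.80665  # m/s^2, standard gravity (assumed calibration value)


def displayed_kg(mass_kg, local_g):
    # A force-sensing scale calibrated at G_STANDARD reports
    # force / G_STANDARD = mass * local_g / G_STANDARD.
    return mass_kg * local_g / G_STANDARD


mass = 78.2  # kg, the example reading from the comment
low = displayed_kg(mass, 9.78)   # low end of the g range quoted above
high = displayed_kg(mass, 9.83)  # high end of the g range quoted above
print(f"{low:.2f} kg to {high:.2f} kg, spread {high - low:.2f} kg")
```

The same person could read anywhere from about 77.99 to 78.39 kg, a spread of roughly 0.4 kg, which is four times the claimed 0.1 kg resolution.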

I think your (2) is often the bigger issue, we measure something without knowing what it is we’re measuring, what it is we want to measure, in some circumstances without even knowing the dimensions of the thing we’re trying to measure (kg vs N).

An example of poor measurement:

From: John P.A. Ioannidis: Implausible results in human nutrition research. British Medical Journal, 2013; 347:f6698 (14 November 2013)

http://www.bmj.com/content/347/bmj.f6698

http://www.bmj.com/content/347/bmj.f6698/rapid-responses

“Nutritional intake is notoriously difficult to capture with the questionnaire methods used by most studies. A recent analysis showed that in the National Health and Nutrition Examination Survey, an otherwise superb study, for two thirds of the participants the energy intake measures inferred from the questionnaire are incompatible with life.”

Every column of data has a label and numbers. Due to measurement issues, the numbers may have unobserved perturbations, and the label may or may not accurately stand for what the numbers actually represent. And from one model to the next, depending on the other covariates, what the numbers actually represent can change. But the label sits on top of the column, and it sits in every summary table, and it all looks so clear-cut that it is easy to take for granted that it is what it says it is.

+1

I think you are being too hard on the field of Education when you hold up tests as poor examples of measurement. I see far more awareness of measurement issues in my Educational circles than I do in my Statistical ones. Most of the people I know who are involved in PARCC (the group which is putting together the new Common Core assessment) care and think deeply about this issue. The new book I’ve just published (Bayesian Networks in Educational Assessment, with Bob Mislevy, Linda Steinberg, Duanli Yan and David Williamson, Springer) spends as much time on issues of measurement as it does on statistical models.

There are two reasons why the typical assessments used for our state accountability programs are so weak from a measurement perspective. First is that setting the standards is a political process, in the sense that different interest groups have different opinions about what a student should have learned by 8th grade. This means that standards are either vague enough that everybody can agree with them (student should know about common geometric figures) or specific enough to be tested but controversial (students should be able to calculate square roots of non-square numbers by hand). Second, the standards that are easiest to test are the ones we care about the least. For example, Science educators value Science practices (e.g., knowing what is an adequate control group for an experiment) more than Science fact (e.g., knowing how many planets there are in the solar system), but the latter are much easier to test.

Anyway, I and a bunch of others involved in educational measurement are starting a new blog on that topic at http://cognitionandassessment.wordpress.com/. Feel free to join in the discussion.

Russell:

I’m not trying to criticize the field of education; I do think they are serious about measurement. I’m criticizing the efforts that statistics professors make (or don’t make) to improve and evaluate their own teaching.

Andrew:

I would love to see a discussion of *how* we should be talking about measurement. What do I need to know, and what pieces should I read to learn about the issues?

I am particularly interested in this topic and have spent a lot of time thinking about it. There are a couple of things that stand out to me as important issues for the current state of psychological assessment. I agree with Russell that the education field has spent far more time on the measurement issue than most applied areas of psychometrics, and I also agree about the ‘Catch-22’ they often find themselves in regarding the tradeoff between what we believe is most important and what can easily be assessed. What I wonder is whether our typical assumptions, even within the most sophisticated models, are realistic. A number of modern psychometric models take a realistic approach to ordered categorical data, applying logistic models that assume a continuous latent construct. However, are we actually dealing with continuous latents?

Denny Borsboom argues that the only logically defensible philosophical position to adopt is that of realism (https://sites.google.com/site/borsboomdenny/papers). Some have interpreted this as a blank check to make a realist assumption about any and every psychological construct one has imagined or will imagine, and in turn to argue that those constructs are real, regardless of the sloppiness of the reasoning underlying them, as long as correlational ‘evidence’ can be provided in support of their reliability and validity. A realist perspective is appealing and even necessary, but to create a robust science it must be one that requires a ‘strong construct argument’ mapping the conceptual onto events in the real world. Agnostic, weak, and unquestioning acceptance of constructs published in top journals has created a situation in which psychology is filled with sloppy and in many cases meaningless constructs, about and from which unwarranted, strong, expansive, and generalized inferences are made. It should not be enough to provide factor-analytic results and correlational data against deficient criteria, using samples of a few hundred, and argue that this is proof of the ‘validity’ of a construct and its structure. Yet this is exactly what you will find in the user manuals of many, many psychological assessments in all areas of psychology. I recognize that this is not a fair accusation about all psychometric work and overstates the state of affairs a good bit, given that there is some very good work going on in the area.

That said, the reality of the human mind and its causal connections to human behavior is rather complex, and the assumption that it is not has produced accepted methodologies that are not up to the task of providing realistic models of this reality.

I bet a large percentage of American male statisticians first got interested in statistics via sports statistics. Until fairly recently, baseball statistics were quite cut and dried, with little need to worry about measurement. To a lot of American men interested in statistics, “The Baseball Encyclopedia” represents a Platonic heaven of statistics, and measurement issues seem like earthly dross.

I think there are a few issues, and things also depend a good deal on what you mean by covering measurement, which in turn depends on which statistics course you are talking about. In sociology, measurement is usually considered a topic for the research methods class, not the statistics class, but thinking about measurement is a good opportunity to motivate interest in, and show the usefulness of, both topics. For example, calculating inter-coder reliability in a content analysis is a very practical application of correlation. So is calculating the internal reliability of scales. Also, covering the use of randomized response suddenly makes pretty simple probability concepts very practical. Which brings me to the question of assessing statistics teaching and statistics education. Of course it depends on which statistics course and which students, but in my case I’m really interested in the beginning course for students who are not statistics majors but who need to work with data. I think one way to measure the effectiveness of statistics courses is to look at the extent to which students who have taken statistics can effectively use what they learned in another context. That context could be a methods class or a “content” class. It could also be reading the newspaper.

Of course, one of the basic problems with saying you should add more to the statistics class is what that displaces. That said, through your choice of examples for concepts and problems you can introduce some measurement ideas. I teach methods, but you could easily put some of what I do into a statistics course. For example, we look at the data from some of the experiments on question wording and question ordering, and at a lot of other measurement-related topics, using statistics.

Elin,

You make a good point that inter-rater reliability is a good use of correlation, and it seems to be taught to most graduate students. What I think we spend far too little time on is the philosophical underpinnings of ‘content analysis’ and whether the conclusions we draw from the statistics describe logically defensible, real-world phenomena of any importance. In psychology, the Big Five personality constructs originated in a methodology grounded in a very strong assumption about language, descriptive language in particular, and about its evolution and application, and finally about what the correlations between words chosen as self-descriptors by the same people mean, along with their realist and causal position in the real world. Trait theorists assume that a ‘trait’ has causal power when in fact it has descriptive power. However, it is fairly rare to find applied psychological research that acknowledges this reality (see the work of Paul Barrett for a notable exception: someone who has moved from the former to the latter camp on trait theory).

The techniques and statistical models are important and essential to both teach and learn, but a bit more time spent on the philosophy of psychometric measurement could help prepare future researchers to advance the field.

Yes, and that’s another reason why I wouldn’t attempt to push the study of measurement into courses whose primary focus is statistics. Of course, discuss it there, but it’s really a topic that needs in-depth coverage of its theoretical underpinnings and application.

Agreed. Point taken.

Having Paul Barrett as my 2nd-year ‘stats for psych’ lecturer (during his time here in NZ in the 90s) pretty much nailed my decision to switch to psych. There was something insightful in his approach. In hindsight, I guess it was just the cohesive picture he presented of good science, from philosophy about the things in the world we wish to study, through their quantification, the design of measurement tools, and the implementation of method, to analysis. It made perfect sense to me.

It seemed so obvious at the time: philosophy and science are so heavily intertwined that if you wish to make meaningful statements about phenomena, you neglect either at your peril.

Sadly a hefty proportion of scientists/researchers I’ve met over the years consider philosophy to be utter bunk.

P.S. The only negative about Paul Barrett was his close work with, and constant semi-sales-pitch for, the point-and-click package ‘Statistica’. This led to many wasted years with Statistica, SPSS, Stata, etc. Thank Stan for R!

In hindsight my stats-for-sociologists class at Harvard in the 80s was a waste of time (or worse!) because we were taught to P-hack in SPSS (I think it was). Basically the same thing happened when I cross-enrolled in the Probability and Statistics class at Stanford B School in the 90s — we were taught to hack regressions. My final project, which got a high grade, was such an embarrassment in retrospect.

Clyde Coombs’ Theory of Data and his other book on scaling were among the first stakes in the ground. After all, his theory of the four types of measurement scales is pretty much canonical (nominal, ordinal, etc.). If anyone has improved on this, I’d like to hear about it. As for Russell Almond’s defense of education statisticians, I agree with him. Whether or not they’ve gotten it right (e.g., IRT, VAT, or standardized testing), educational statisticians have more than paid lip service to psychometric issues. In fact, it’s one of the very few areas in statistics where there is still any debate at all. This wasn’t true 20 or 30 (or more) years ago, when guys at Rutgers, Bell Labs, or Dutch statisticians were busy developing multidimensional scaling routines, routines that see unattributed application in the visualization of network analyses, etc. Who today reads the North American Classification Society listserv?

Where I think attention to measurement is most needed is in machine learning on unstructured text, image, and voice data. MLers, computer scientists, mathematicians, physicists, etc., are for the most part completely unaware of psychometrics and the urgent need for it. As a consequence, they can do some really crazy stuff, but it’s like pissing into the wind when you bring it up with them. Hopefully that will change.

Thomas,

It is in the “etc.” section of your list of canonical ‘measurement scales’ that the debates in psychology are properly focused. Bertrand Russell famously questioned whether psychological measurement was measurement at all. In much applied psychological work, numbers are assigned to responses to verbal or written questions. These numbers are added up and an interval scale is assumed, so that mathematics assuming continuity and infinity can be applied. I think Stevens’s success in pushing psychology into this mode of thinking has created serious problems within the field. A typical psychological assessment still adds up numbers assigned to disparate statements and somehow calls the result a continuous latent variable represented on an interval scale.

The good news is that there is a growing number of both academic and applied researchers challenging the status quo.

The fundamental issue you raise is related to “metatheory,” which is the theory of how theories are constructed. A critical component of metatheory addresses the nature and creation of “concepts” or constructs, and their measurement, in theory creation. The following is a great reference no matter what field you are in: Zaltman, Gerald, Christian R. A. Pinson and Reinhard Angelmar (1973), Metatheory and Consumer Research, Holt, Rinehart, and Winston, Inc. You can find a copy on Amazon.com

I agree with this post. Measurement is often overlooked but really: what could be niftier than a process that converts a property to a number?

In all “probability”, you are “likely” correct.

This is the domain of philosophers of science and methodologists more than statisticians. But as with anything in science, everyone can contribute something useful. imo the strength of psych has been its close ties with philosophy in guiding these concerns (contrast this with the attitude of the anti-philosophy crowd in physics, like Feynman, which has now crept into physics across the board; iirc a chance reading of Feyerabend’s Against Method soured his view of philosophy). Personally, this is why I did my PhD in psych and not physics (despite not being interested in people in the slightest). It seemed the only way to get hands-on philosophy-of-science experience, where uncertainty, discussion, improvisation, etc. were the norm, versus the absolute certainty in method and foundationalism in physics (this certainty is illusory, of course, and the rejection of foundational philosophy in physics can, imo, only be a long-term hindrance to physics).

C. S. Peirce is a great place to start reading (i.e., for avoiding axioms and assumptions specific to a discipline when considering fundamentals of validity and measurement).

Adding a further reference for fun: my PhD supervisor follows in the footsteps of Peirce in his book “Investigating the Psychological World”. http://mitpress.mit.edu/books/investigating-psychological-world

I picked up that perspective studying semiotics (scientific approaches to linguistics) prior to statistics (also where I learned about Peirce.)

“The Statistical Analysis of Experimental Data” by John Mandel has a great section on measurement.