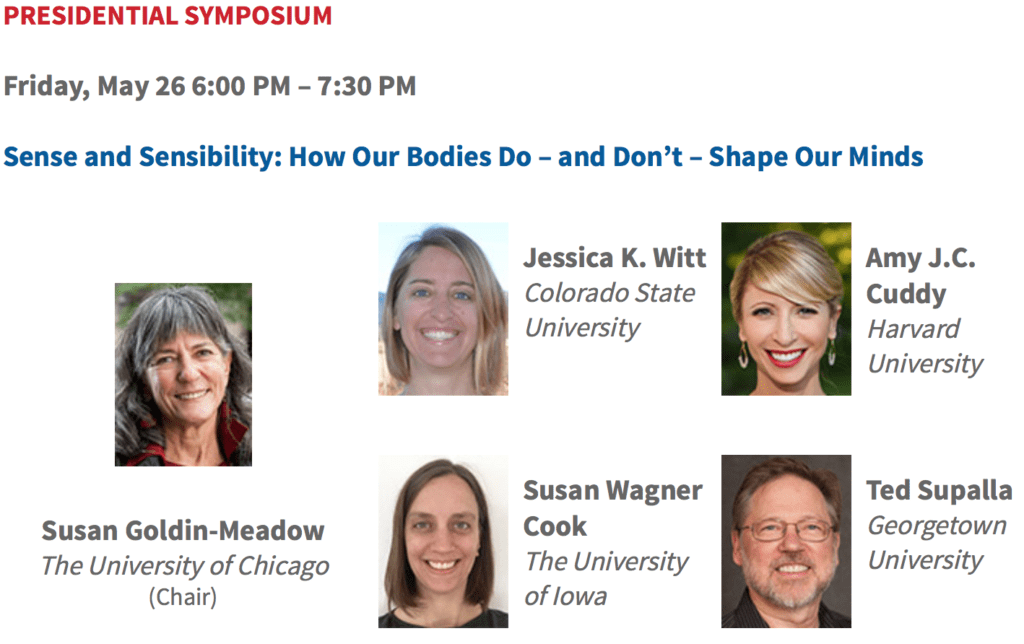

Someone pointed me to this program of the forthcoming Association for Psychological Science conference:

Kind of amazing that they asked Amy Cuddy to speak. Weren’t Dana Carney or Andy Yap available? What would really have been bold would have been for them to invite Eva Ranehill or Anna Dreber.

Good stuff. The chair of the session is Susan Goldin-Meadow, who’s famous both for inviting that non-peer-reviewed “methodological terrorism” article that complained about non-peer-reviewed criticism, and also for some over-the-top claims of her own, including this amazing statement:

Barring intentional fraud, every finding is an accurate description of the sample on which it was run.

This is ridiculous. For example, I think it’s safe to assume that Reinhart and Rogoff did not do any intentional fraud in that famous paper of theirs—even their critics just talked about an “Excel error.” But their findings were not an accurate description of their sample. Similarly with Susan Fiske and her t statistics of 5.03 and 11.14 which were actually 1.8 and 3.3. No intentional fraud, just an error. But the findings were not an accurate description of the sample. Or Daryl Bem’s paper where he reported various incomplete summaries of the data. Even if each summary was correct, his “findings” were not: they were selections of the data which, despite Bem’s claim, do not provide evidence of ESP. They don’t even provide evidence that the students in this particular sample had ESP. Or, if you want an even cleaner example, consider Nosek’s “50 shades of gray” study where Nosek and his collaborators themselves don’t believe that their findings were an accurate description of their sample.

Or, hey, here’s another one, a paper that claimed, “That a person can, by assuming two simple 1-min poses, embody power and instantly become more powerful has real-world, actionable implications.” This is the concluding sentence of the abstract. Actually, though, there were no measurements of power in that paper, and the reported finding was not an accurate description of the sample on which it was run. For it to have been an accurate description, there would’ve had to be some measure of power on the participants. But there was no measure of power, just feelings of power. Which I think we can all agree is not the same thing. No fraud, intentional or otherwise, just a plain old everyday journal article where the thing being stated in the abstract is not the thing that was done in the study.

I have no plans to be at this conference, which is too bad, as this session sounds like lots of fun. Maybe they’ll feature some of Marc Hauser’s famous monkey videos, and they can film it live as a Ted talk. The whole thing should be a real himmicane!

P.S. In all seriousness, do these people even read what they’ve written? (1) “Barring intentional fraud, every finding is an accurate description of the sample on which it was run”? (2) “Instantly become more powerful”?? (3) “the replication rate in psychology is quite high—indeed, it is statistically indistinguishable from 100%”???

Look, we all make mistakes, and there are also legitimate differences of opinion. The data don’t really support the claims that married women were more likely to support Mitt Romney during that time of the month, or that beautiful parents are more likely to have girls, or that Hurricane Missy will be more damaging than Hurricane Butch. But, sure, these hypotheses, and their opposites, are all possible. It could be that Cornell students have ESP, or that power pose makes you weaker, or all sorts of things. So even if I think people are overinterpreting their evidence and even being blockheaded in their interpretation of questionable data and in their resistance to other sources of evidence, I can see how they could make the claims they’re making. Daryl Bem may ultimately have the last laugh on all of us. But statements like (1), (2), and (3) immediately above: they just make no sense in the context in which they’re written.

Psychology is not just a club of academics, and “psychological science” is not just the name of their treehouse. It’s supposed to be for all of us—I’m speaking as a taxpayer and citizen here—and I think the scholars who represent the field of psychology have a duty to write clearly, to avoid false statements where possible, and to put themselves into a frame of mind where they can learn from their mistakes.

P.P.S. Maybe also worth repeating this bit:

I’m not an adversary of pscyhological science! I’m not even an adversary of low-quality psychological science: we often learn from our mistakes and, indeed, in many cases it seems that we can’t really learn without first making errors of different sorts. What I am an adversary of, is people not admitting error and studiously looking away from mistakes that have been pointed out to them.

We learn from our mistakes, but only if we recognize that they are mistakes. Debugging is a collaborative process. If you approve some code and I find a bug in it, I’m not an adversary, I’m a collaborator. If you try to paint me as an “adversary” in order to avoid having to correct the bug, that’s your problem.

P.P.P.S. In the first version of this post, I mistakenly labeled this session as being in the American Psychological Association conference. It’s actually the Association for Psychological Science. I apologize to the American Psychological Association for my error. It’s no big deal, though, it’s not like anybody’s being tortured or anything.

Antidote conference: http://www.bitss.org/events/bitss-2016-annual-meeting/

Whoa–this looks promising. I’ll try to watch some of it online.

Jrc:

I’m reminded of this classic bit from Douglas Hofstadter:

In the world of university publicity departments, NPR, Ted, Freakonomics, Gladwell, etc., Psychological Science and PPNAS count for a lot more than Retraction Watch and the Berkeley Initiative for Transparency in the Social Sciences. And of course the National Enquirer is still going strong.

I’m reminded of this classic bit* from Cormac McCarthy:

“In the dawn there is a man progressing over the plain by means of holes which he is making in the ground. He uses an implement with two handles and he chucks it into the hole and he enkindles the stone in the hole with his steel hole by hole striking the fire out of the rock which God has put there. On the plain behind him are the wanderers in search of bones and those who do not search and they move haltingly in the light like mechanisms whose movements are monitored with escapement and pallet so that they appear restrained by a prudence or reflectiveness which has no inner reality and they cross in their progress one by one that track of holes that runs to the rim of the visible ground and which seems less the pursuit of some continuance than the verification of a principle, a validation of sequence and causality as if each round and perfect hole owed its existence to the one before it there on that prairie upon which are the bones and the gatherers of bones and those who do not gather. He strikes fire in the hole and draws out his steel. Then they all move on again.”

In the world of people, politics, society, religion, science, etc., blind certainty and the vanity of one’s own importance count for a lot more than humility and incremental progress. And of course people would all rather believe that what they are doing (their life) matters.

To clarify: I don’t think groups like BITSS are going to make the world some world that it is not**; that would be absurd. They are just trying to help us be a little better at our jobs. Like you say, some people mock, some people train students, some people write papers, some people make idiosyncratic references to books they are re-reading to try to argue that the futility of some ambition is not necessarily an argument against working towards it. This in service of those seeking to strike the illuminating fire from the rock that G-d has put there. Some of us, like you and me, do several of these things. Another drop in a bucket or another hole demarking the fenceline or once again commencing to roll the boulder up the hill… one must imagine jrc (¿and Gelman?) happy.

To further clarify: I just thought your quote and response sounded awfully fatalist relative the usual Gelman spiel about each doing their part to help the profession move forward. So I offered a fatalist defense of helping the profession move forward, so you could have it both ways. You know, if you wanted.

* https://books.google.com/books/about/Blood_Meridian.html?id=s-QzccStux4C

**N.B. on the way of the world:

The Judge in Blood Meridian: “That is the way it was and will be. That way and not some other way”

Marlo in The Wire: “You want it to be one way, but it’s the other way.”

Despite your Slate article and numerous blog posts and interviews, despite Dana Carney’s unambiguous statement, despite the Jesse Singal’s articles in New York Magazine and Tom Bartlett’s in The Chronicle of Higher Education, many people continue to think the “power pose effect” is still “scientifically proven.” Just today, Elite Daily ran a piece to that effect.

The problem lies partly in Cuddy’s equivocation and the silence from publications and individuals that have praised and promoted her work, such as the NYT and TED.

After being contacted by The Chronicle Review, TED officials added the following to the summary of her talk: “Some of the findings presented in this talk have been referenced in an ongoing debate among social scientists about robustness and reproducibility.” Referenced in an ongoing debate?

Yes to this: “We learn from our mistakes, but only if we recognize that they are mistakes. Debugging is a collaborative process.”

I think maybe they believe in their findings and they see statistics as a necessary evil, as a hoop they jump through to publish what they feel is correct. One can of course be cynical and say they believe because it helps their careers, but that gets into issues of self-deception and the extent to which one is more likely to deceive one’s self when that deception is beneficial to the self, monetarily, career-wise, relationship-wise, etc. (We’ve all done that; no one is perfect.)

An interesting sub-question might be evaluating the beneficial effect of crapola for the doer, imperfectly measured by comparing the career progress (publications, jobs, appearances, citations, whatever) of crapola producers versus producers of non-crapola publications (and those who do both!). You could then produce something, which examines and to some degree quantifies this incentive effect on academics. And then you could take that to crapola-land (which for me is all the stuff in Candyland that tastes like licorice) by claiming this shows the power of creative stupidity, that we as a race are primed to accept ideas that can be presented as true when they aren’t true in essentially every perspective but the one presented. Oh wait, that’s obviously true.

Jonathan:

I think you’re right in that first paragraph. Here’s what I wrote on this topic earlier:

If Fiske etc want to do purely qualitative studies, or test effects that work just about every time so that no formal statistics are required, that’s fine with me. But that’s not what they do. Instead they publish paper after paper in which they find patterns after collecting their data and then they justify it with p less than .05. So, for them, statistics is not just a hoop they must jump through; it’s actually the method that allows them to get their work taken seriously in the first place.

>So, for them, statistics is not just a hoop they must jump through; it’s actually the method that allows them to get their work taken seriously in the first place.

I suspect that the real root of this crisis is the “cult of science” – not the “real science” done in academia, but the toy science that is constantly presented in culture and media (both MSM and fringe) that claims scientists are high priests that happens to divine the truth about the world through rituals of a statistical nature. If a scientist makes a claim after doing the necessary rituals, then it *must* be true, no matter how bizarre it would be coming out from the words of random people on the Internet. There’s been comparisons of noise-mining to astrology in this comment thread, and I think that those comparisons are actually revealing here.

This comment is not meant to denounce the “cult of science”. Indeed, I’m a religious person, and I see the value of religion in our day-to-day life. In April, you pointed out that actual good could come out from this type of research (the placebo effect) (http://statmodeling.stat.columbia.edu/2016/04/10/should-i-be-upset-that-the-nyt-credulously-reviewed-a-book-promoting-iffy-science/), even though I think it does not outweigh the costs. But the point is…this cult is *not* science. Its value, such as it may, does not come from strict adherence to the scientific method but from inspiring confidence, telling brilliant stories, and making people think they know more about the world than they really do. Evaluating popular scientists as astrologers and priests would probably get us closer to understanding what’s really going on here, so long as we stress that religion isn’t *bad* at all.

I studied under Goldin-Meadow, and in my experience statistics there were a hoop that we had to jump through. That combined with the fact that most people working on the projects understood astonishingly little about the actual implications of the models meant that we would often change up analyses midstream. The stats just weren’t as important as the theory. For what it’s worth I didn’t see any specific malice.

AJ:

Yes, this makes sense, and there’s nothing wrong with a subject-matter researcher such as Goldin-Meadow not being a statistics expert. It makes complete sense for her to use statistics without having to understand a lot of it. I use computers every day but have very little understanding of semiconductors. OK, make that no understanding. In college we did learn a bit about how transistors work, but I’ve forgotten all of it.

I’d just hope that Goldin-Meadow, Bem, Bargh, Fiske, Kanazawa, Hauser, Baumeister, Wegman, the himmicanes team, the “statistically indistinguishable from 100%” crew, the ovulation and voting researchers, the ovulation and clothing group, etc etc etc. would know their limitations, instead of just trying to bluff their way through this. The whole thing makes me sad. At some point these people have to know that they’re faking it, right?

” At some point these people have to know that they’re faking it, right?”

I’m not convinced that the realization is necessarily inevitable. I suspect that the problem is in part the “us” vs “other” problem that so often crops up with human beings — if a group of people (e.g., researchers in a particular field) all believe something is OK, and they see people outside their group as “strange” or “not credible” or “eccentric” or “out-of-it” (ore even evil or ill-intended), then the “other” group doesn’t have any influence. To begin to have some influence, there somehow needs to be an increase in openness to listening. That might start with a few willing listeners in the “us” group, or some in the “other” group who figure out how to catch the ear of some of the “us” group.

Martha:

I guess, but at some point don’t they wonder when so many experts in quantitative methods are questioning their work. I mean, Bem, sure, I can see that he thinks ESP is marginalized so no one takes him seriously. But Bargh, Fiske, etc? Aren’t they somewhat aware that something’s wrong when on one side they have NPR, Ted talks, and the Harvard public relations department; and on the other side there are a ton of people such as Uri Simonsohn who actually know statistics? I could imagine that they think I’m biased, or they think E. J. Wagenmakers has a bug up his butt, or that Uri’s a hater, or whatever, . . . but all of us? What’s their story for that?

“all of us” is a group of “others” to Barg, Fiske, etc.. Their lack of awareness that’s something wrong is analogous to not hearing people who come from a different ethnic group. Yes, you would assume that academics would not do the latter. But can we assume that hearing what people from another ethnic group say would automatically generalize to hearing what people from this unnamed “other” group all say? I don’t see any a priori reason to assume the generalization.

I am hopeful that if enough of us keep harping at the problems, eventually the group of those accepting the criticisms will reach some “critical mass” so that others start listening out of shame if for no other reason.

It would be interesting to find out what persuaded people like Dana Carney to listen enough to take the criticisms seriously.

PS When I talk about “not hearing” I have in mind the phenomenon that many women in my generation have experienced (but I think has stopped or at least decreased considerably): where we bring up a suggestion in a meeting, and the discussion continues as if we have said nothing; then later a man brings up the same point, and people start discussing it.

Martha:

Regarding your third paragraph, Brian Nosek et al. in their “50 shades of gray” paper describe the process by which their perspective changed and the realized that they’d been looking at their study all wrong. For Carney, I can’t be sure but her change of heart could’ve come from seeing all the criticism of power pose research and realizing that maybe the critics had a point.

Isn’t there a selection bias? I’m sure there’s tons of reasonable people like Carney that do change their minds in the face of cogent criticism.

OTOH, you are way more likely to hear of the stubborn moron who sticks to his untenable position in the light of overwhelming evidence to the contrary.

P.S. I do have difficulty understanding their perspective. I had the same issue when engaging with the ovulation-and-clothing researchers. Those papers have lots of flaws from forking-paths andType M and Type S errors, but somehow what particularly bugged me was that they were using days 6-14 as peak fertility, when no medical sources use those dates. Peak fertility varies by person, the standard recommendation is days 10-17, and nobody would ever call day 6 or 7 a peak fertility day. So they were, very simply, not measuring what they thought they were measuring. But they didn’t seem to care about this at all. On some level, I understand this: they felt they had a discovery, and if their discovery was off by a few days, then fine, they still had a discovery, it was just not quite what they thought they’d discovered. But at the very least this invalidated their theorizing which was all about peak fertility. Anyway, the whole think was just weird to me, it was just so different from how I think.

Regarding your other point about the “us” and “other” group: I think it’s fine to communicate with that “other” group and, believe it or not, I really do try. But I actually think that group (Bargh, Fiske, Baumeister, Bem, etc.) are not so numerous and, outside a certain APS/NPR/Ted inner circle, not so influential. So I’m happy to be communicating with what I see as the vast majority of researchers in social science who are not part of this debate and are trying to learn how to do better.

“Regarding your other point about the “us” and “other” group: I think it’s fine to communicate with that “other” group and, believe it or not, I really do try.”

I guess I didn’t make it clear that in what I wrote I was thinking of Bargh, Fiske, etc. as the “us” group and you, me, etc. as the group of “other”.

“So they were, very simply, not measuring what they thought they were measuring.”

My guess would be that they saw what they were trying to measure as a much vaguer concept than you or I would think of measuring — in other words, they may have been measuring what, in their thinking, was what they were trying to measure, but it wasn’t what you thought they should be measuring (or what you thought they thought they were measuring.).

Martha:

Could be, but the phrase “peak fertility” is in the title and abstract of their paper. So if they thought they were measuring something vaguer, they certainly didn’t convey this to the readers of their article.

Andrew:

My error — I now see that you were referring to the ovulation etc. study, but for some reason it got confused in my mind with the power studies.

My take on it is that there is an actual belief in that “every finding is an accurate description” statement. I don’t think anyone would endorse the errors you mentioned in the post, but I don’t think those type of things occur to some people, which is a separate problem. In my case in particular, I think that there was some understanding of the limitations of our statistical approaches, but the importance of those limitations was definitely not understood. It wasn’t a matter of bluffing: if we found something with a small enough p-value, then we found a ‘real effect’ or something that was ‘true for those data’. It’s not that we weren’t interested in statistics and would rather do purely observational studies, it’s that this *was* us ‘doing statistics’. We knew nothing about about semiconductors, and we were trying to build a motherboard, but it’s okay because the schematic told us where to put them so it wasn’t important to understand them. Understanding the stats/p-values and the schematic/semiconductors was someone else’s job way beforehand, and any criticism after our analysis was a criticism of the technique/map and not the application, because we were just following directions.

As Realist Writer said just above, there was a feeling that “If a scientist makes a claim after doing the necessary rituals, then it *must* be true”. So the stats were one argument for (and functionally never against) a theory, but the theory itself was more important.

“At some point these people have to know that they’re faking it, right?”

This reminds me of a fascinating essay from Michael Inzlicht, on possibly having this realization: “As someone who has been doing research for nearly twenty years, I now can’t help but wonder if the topics I chose to study are in fact real and robust. Have I been chasing puffs of smoke for all these years?”

“Psychology is not just a club of academics, and “psychological science” is not just the name of their treehouse. ” Nailed it!

On reflection, I am personally embarrassed to acknowledge that this sentence probably does reflect a lot of how psychology is transacted amongst those in academia. Statistical errors noted by ‘outsiders’ are a direct threat to status, funding and a deeper need to be an ‘authority’ and holder of the shaman’s runes. I know; I’ve done it and paid for it. There is an unwritten rule, even in my small country, that you don’t ‘criticise’ colleagues — even if criticism is actually correction of errors. The club takes precedence, for some. It allows an abuse of power if left unchecked and un-moderated. The roles and titles (of Psychologist) convey status to the public, who deserve better. This and other fora help correct the lean in the treehouse.

PS. Loved this recent pre-pub! https://osf.io/preprints/psyarxiv/uk6ju

I’ve been torn for awhile about leaving APS. Being a member and writing letters doesn’t affect things at all… as seen in their behaviour. And to think at one time, a long time ago, the APS was developed because the APA was scene as unscientific in their approach. The APS was supposed to be where the real science was.

But they are sort of a two headed monster. The editors of Psychological Science have one after another made efforts to try to solve the problem of unreplicable findings in their journal. For example, current policies requiring explanations for sample sizes and allowing methods sections to be unlimited in length are nice positive steps forward. And their journal Perspectives on Psychological Science is the premiere outlet for much of the thinking in Psychology that is sympathetic with the views evident in this blog. Frances, Simmons, etc. all publish there. If you only read that journal you’d think that psychology has a very healthy attitude about statistics and methods and values them very much.

And then they go and put on this idiotic kind of symposium…

what to do…

+1

Please don’t leave APS over these events.

APS is indeed a multi-headed beast — but that can be both good and bad. The President has lots of discretion over the speakers at the well-advertised “Presidential Symposium” and over who writes the “Presidential Column” in the Observer but the Journal Editors have lots of discretion about what goes into the journals. Eric Eich and I got a lot of flak from the APS Board when we wanted badges (for PS) and Registered Replication Reports (for PPS). And I got a TON of flak for publishing all the method stuff that I did at PPS — but we could do it. Plus, at the conference there will be several other (less prominently advertised) symposia addressing progress and problems in the Open Science movement.

So, don’t leave APS. We need progressive people to run for the Board (Simine Vazire is on it now) and to serve on the Committees that select Editors and Presidential candidates, and on the Program Committee and, well, just members who will vote for candidates who want to move psychological science in the right direction.

Thanks! Bobbie aka Barbara A. Spellman (the former EIC of Perspectives)

Morey and Wagenmakers are speakers at the APS event it seems. I guess they are going to come with their statistical guided missiles locked and loaded.

It’s worse than you think. If you knew all the business Witt got up to …

Anon:

Hey, if you have something specific to point to, that’s fine. But innuendo isn’t helpful.

Andrew, you should go after the APA as well, because they do not “endorse routine sharing of data”. The APA has actually come out *against* open data!

Here is the post on this.

Aargh! They just don’t get it.

You missed some juicy bits Andrew in the link you provided (emphasis mine):

“The second advantage [of field studies as opposed to lab experiments] is less obvious: It is difficult to control all, or even many, of the variables in a field study. Why is this lack of control a good thing? If a phenomenon is discoverable under these messy conditions, it is likely to be a robust one that is worthy of explanation…. Having a large number of naturalistic observations on a small number of participants can lead to robustness.”

[Her evidence comes from Piaget’s observations of his own three children.]

And then these comments:

“Happily, this means that in areas where it is difficult to repeat a study, exact replication may not be essential in ensuring a phenomenon’s robustness.”

“What we don’t want to do is require that the procedures used to ensure robustness and generalizability in experimental studies (e.g., preregistration, multiple-group replications of a single study) be applied to all types of psychological studies, and then devalue or marginalize the studies for which the preregistration procedures don’t fit.”

So what’s an example of a study that is difficult to repeat? The Carney et al study? Red-means-I-want-sex? The himmicanes stuff?

What she’s really asking for is an exemption from having to do science in certain areas of psychology. I guess their jobs depend on it. Linguists reacted similarly when faced with the increasing use of empirical methods to study syntax; they started to dig in and defend the intuition-based methodology that Chomsky essentially invented (it’s a good method in itself for initial theory development, it just doesn’t work in the theoretically crucial cases).

Seems like this is a counter-attack against the likes of Andrew.

Norbert Hornstein argues (or asserts) syntax doesn’t need statistics, linking to the recent post here about that fMRI clusterfun (here).

I don’t really have a horse in that race , but I’d be curious to hear your thoughts on it.

I agree with Norbert that for basic theory development in syntax one doesn’t need statistics. Just look at how far the syntacticians got with theory development without any experimental study.

It may even be that if you need statistics to answer a question in syntax, you may be asking the wrong question. This could even be true for psych*. Peter Culicover, another famous syntactician, was one of my professors at Ohio State when I was a student there, and he used to say that if there is some doubt about a data point (by which linguists mean about the facts regarding some phenomenon), let the theory decide.

There is also the uncomfortable fact that I don’t know a single syntactician who does empirical work who uses statistics as She was intended to be used. It’s basically a low grade version of the fMRI clusterf**k. This may well be another argument to just abandon statistics and go back to the intuition-based method; at least they can do the latter competently. Why mess up the field with badly done statistics? I don’t mean this ironically; I think I have come full circle to the view now that if you can’t do the math, just don’t do it. Find an alternative route.

I quit doing syntax after I ended up in lectures at OSU where the professors got to argue about which sentence was grammatical and which one not, and whoever had more seniority won. I also attended lectures by J-P Pollock (in Hyderabad, maybe 1994), in which he vigorously defended attacks on his dearly functional projection story from some other French speaker by invoking his own judgements. The last straw for me was when Paul Postal came to Ohio State to tell us his theory of negative polarity licensing, and a fellow student and I showed him counter-examples from corpora to his theory. His response was that in order to falsify his account one has to find a sentence that he would find acceptable or unacceptable that was inconsistent with his story, a kind of extreme idiolectal theory development. The extreme subjectivity was too much for me then, but I’m older and wiser now, and I realize that the illusion of objectivity that statistics gives is even worse (in the wrong hands, which it almost always is).

The more problem in syntax is that the empirically interesting cases needed subtle judgements, and nobody knew what the facts were. If you were from MIT, you could assert that such and such sentence is true and it would have to be true. For example, an MIT graduate, Anoop Mahajan, asserted some fact about weak crossover effects in Hindi that formed the basis of his dissertation. But only he “got” that grammaticality judgement, I never found another Hindi speaker who did (OK, some of his friends did). I guess we could do theory development by consulting a designated canonical and authoritative speaker (Postal, Mahajan, etc.).

For such cases, one does need quality statistical detective work; but I don’t think that syntacticians have that kind of training. And it’s not clear if they even want to make statements that are generalizable to a population of speakers.

I meant Jean-Yves Pollock of course, not JP Pollock.

I forgot to say that I believe that if syntacticians are willing to put in the work to learn the statistical theory, then of course by all means they should go for serious empirical work. Just as they should feel free to theorize about syntax, but only after understanding the syntactic theory needed. I wouldn’t want a philosopher to start doing syntax without knowing anything about or caring about the underlying theory.

Thanks for the thoughtful reply. Sometimes I feel lucky that I had such a terrible intro syntax class that I got turned off to that whole subfield.

That’s too bad because syntax is a very cool area.

“Why mess up the field with badly done statistics? I don’t mean this ironically; I think I have come full circle to the view now that if you can’t do the math, just don’t do it.”

“illusion of objectivity that statistics gives is even worse (in the wrong hands, which it almost always is).”

Thoughtful comments.

I am hoping the (technical) math barriers will decrease with stuff like Stan.

Noah:

At the link, Hornstein writes, “Call this Rutherford’s dictum: ‘If your experiment needs statistics, you ought to have done a better experiment.'”

I don’t know enough about linguistics to comment on the specifics; it may well be that Hornstein’s subfield does not require inferential statistics. Galileo, Pasteur, Piaget, Curie, etc., did excellent experimental science without the use of formal statistical methods. Even more recently, did Watson, Crick, Pauling, etc., use formal statistics? I don’t know, but quite possibly not. Kahneman and Tversky did use formal statistics but their key findings were strong enough that such methods were not needed. And then when they did start using formal statistics to decide things, they made mistakes (as in Tversky’s denial of the hot hand and Kahneman’s endorsement of embodied cognition).

It depends on what you want to do. Norbert is talking about theorizing about the abstract structure of language. If you are building Natural language systems that actually do something useful, good luck ignoring stats. But Norbert doesn’t about practical applications in this context.

I did a quick search after reading her piece to see if Piaget’s work had been replicated. I quickly came across what seems to be a whole set of direct replication studies performed in the 60’s !

https://www.ncbi.nlm.nih.gov/pubmed/13889875

I posted this anonymously as a comment below the piece to try and make clear that 1) the Piaget studies that she referred to are not exempt of replication attempts, and 2) deciding whether a phenomenon is robust may be influenced more by replication attempts than by “having a large number of naturalistic observations on a small number of participants”.

The comment got deleted after 2 days or so…..

So much for the APS fostering open debate.

Anon:

You could try reposting and seeing if it gets deleted again!

I understood this paragraph (“The second advantage of field studies…”) differently. I interpret this as if a conditional effect is repeatedly found under many kinds of samples and many combinations of measured and unmeasured confounders (what one gets in the field), then the effect is real. This will only happen if the effect is large and interaction effects (variation in the effect across conditions) are small. An example might be the effect of smoking on lung cancer. The effect is big enough and the interactions small enough that the effect is repeatedly found in observational designs with different samples.

Even with the smoking stuff it looks like they had to fudge it (at least the mortality chapter, I haven’t checked the cancer one yet). Check out figure one on page 88 in the original 1964 surgeon general’s report: https://profiles.nlm.nih.gov/ps/access/NNBBMQ.pdf

Both smokers and non-smokers have the same number of datapoints, but the smoker data starts 5 years earlier… So there is an seemingly extraneous datapoint for non-smokers centered on age 75 or so. Very strange way to arrange the data, and no explanation is offered. Can anyone find the reference 14 that figure is supposed to be based on? “Ipsen, J., Pfaelzer, A. Special report to the Surgeon General’s Advisory Committee on Smoking and Health.”

I suspect that one of those curves should be shifted so that the age groups line up, either way something like that should not have made it through peer review without explanation.

The “Statistics and Methodology Teaching Playlist” on the APS site is not so bad, though…: http://www.psychologicalscience.org/members/teaching-psychological-science/teaching-playlist-statistics-and-methodology

> For it to have been an accurate description, there would’ve had to be some measure of power on the participants.

Some enterprising soul will turn that fact into a grant proposal. Hmmm… perhaps an fMRI-based power measurement system… or perhaps not – https://www.wired.com/2009/09/fmrisalmon/

It would also help if they gave a definition of “power” — maybe they just take it to be whatever their measure measures??

Just a quick reminder that on balance, psychology has done a lot of good on these issues. Wagenmakers is a psychologist, after all. So is Nosek. There’s a huge problem, but it’s a problem with *some* psychologists, or with most psychologists *some* of the time. I don’t want to understate the problem, or the silliness of some of these statements, or the damage that is done. Just to remember that psychology is a pretty big tent, and the field as a whole is producing some of the solutions as well as many of the problems.

Here is all you need to know: The title of the symposium is “how our bodies shape our minds”.

I contend (and argue carefully — e.g., Psychology of Consciousness, 2014, in press) that psychology has NO IDEA (not even a vague one) what a MIND consists in.

Thus, the title really says “how our bodies (which we do have some understanding of) exerts a causal influence on an aspect of reality (real or imagined?) about which we have no principled understanding (of its boundaries, properties, causal potencies, mechanisms, etc). And what are the units of measurement of mind and its constituents? How does one assign numeric values to a stipulated abstraction possessed of epiphenomenal status? By Likert scale conjoined with a health pre-reflective dose of pure stipulation!

This is contemporary psychology! — busily preparing a path for the re-emergence of behaviorism (and all its warts).

Pathetic.